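Activity Recognition from Video and Optical Flow Data Using Deep Learning

This example first shows how to perform activity recognition using a pretrained Inflated-3D (I3D) two-stream convolutional neural network based video classifier, and then shows how to use transfer learning to train such a video classifier using RGB and optical flow data from videos [1].

Overview

Vision-based activity recognition involves predicting the action of an object, such as walking, swimming, or sitting, using a set of video frames. Activity recognition from video has many applications, such as human-computer interaction, robot learning, anomaly detection, surveillance, and object detection. For example, online prediction of multiple actions for incoming videos from multiple cameras can be important for robot learning. Compared to image classification, action recognition using videos is challenging to model because of inaccurate ground truth data in video data sets, the variety of gestures that actors in a video can perform, heavily class-imbalanced data sets, and the large amount of data required to train a robust classifier from scratch. Deep learning techniques, such as I3D two-stream convolutional networks [1], R(2+1)D [4], and SlowFast [5], have shown improved performance on smaller data sets using transfer learning with networks pretrained on large video activity recognition data sets, such as Kinetics-400 [6].

Note: This example requires the Computer Vision Toolbox™ Model for Inflated-3D Video Classification. You can install the Computer Vision Toolbox Model for Inflated-3D Video Classification from Add-On Explorer. For more information about installing add-ons, see Get and Manage Add-Ons.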

Perform Activity Recognition Using a Pretrained Inflated-3D Video Classifier

Download the pretrained Inflated-3D video classifier along with a video file on which to perform activity recognition. The size of the downloaded zip file is around 89 MB.

    downloadFolder = fullfile(tempdir,"hmdb51","pretrained","I3D");
    if ~isfolder(downloadFolder)
        mkdir(downloadFolder);
    end

    filename = "activityRecognition-I3D-HMDB51-21b.zip";

    zipFile = fullfile(downloadFolder,filename);
    if ~isfile(zipFile)
        disp("Downloading the pretrained network...");
        downloadURL = "https://ssd.mathworks.com/supportfiles/vision/data/" + filename;
        websave(zipFile,downloadURL);
        unzip(zipFile,downloadFolder);
    end

Load the pretrained Inflated-3D video classifier.

    pretrainedDataFile = fullfile(downloadFolder,"inflated3d-FiveClasses-hmdb51.mat");
    pretrained = load(pretrainedDataFile);
    inflated3dPretrained = pretrained.data.inflated3d;

Display the class label names of the pretrained video classifier.

    classes = inflated3dPretrained.Classes

    classes = 5×1 categorical
         kiss
         laugh
         pick
         pour
         pushup

Read and display the video pour.avi using VideoReader and vision.VideoPlayer.

    videoFilename = fullfile(downloadFolder,"pour.avi");

    videoReader = VideoReader(videoFilename);
    videoPlayer = vision.VideoPlayer;
    videoPlayer.Name = "pour";

    while hasFrame(videoReader)
        frame = readFrame(videoReader);
        % Resize the frame for display.
        frame = imresize(frame,1.5);
        step(videoPlayer,frame);
    end
    release(videoPlayer);

Choose 10 randomly selected video sequences to classify the video. Sampling sequences uniformly across the whole file helps find the action class that is predominant in the video.

    numSequences = 10;

Classify the video file using the classifyVideoFile function.

    [actionLabel,score] = classifyVideoFile(inflated3dPretrained,videoFilename,"NumSequences",numSequences)

    actionLabel = categorical
         pour

    score = single
        0.4482

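Train a Video Classifier for Gesture Recognition

This section of the example shows how the video classifier shown above is trained using transfer learning. Set the doTraining variable to false to use the pretrained video classifier without having to wait for training to complete. Alternatively, if you want to train the video classifier, set the doTraining variable to true.

    doTraining = false;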

Download Training and Validation Data

This example trains an Inflated-3D (I3D) video classifier using the HMDB51 data set. Use the downloadHMDB51 supporting function, listed at the end of this example, to download the HMDB51 data set to a folder named hmdb51.

    downloadFolder = fullfile(tempdir,"hmdb51");
    downloadHMDB51(downloadFolder);

After the download is complete, extract the RAR file hmdb51_org.rar to the hmdb51 folder. Next, use the checkForHMDB51Folder supporting function, listed at the end of this example, to confirm that the downloaded and extracted files are in place.

    allClasses = checkForHMDB51Folder(downloadFolder);

The data set contains about 2 GB of video data for 7000 clips over 51 classes, such as drink, run, and shake hands. Each video frame has a height of 240 pixels and a minimum width of 176 pixels. The number of frames ranges from 18 to approximately 1000.

To reduce training time, this example trains the activity recognition network to classify 5 action classes instead of all 51 classes in the data set. Set useAllData to true to train with all 51 classes.

    useAllData = false;

    if useAllData
        classes = allClasses;
    end
    dataFolder = fullfile(downloadFolder,"hmdb51_org");

Split the data set into a training set for training the classifier and a test set for evaluating the classifier. Use 80% of the data for the training set and the rest for the test set. Use folders2labels and splitlabels to create label information from the folders and to split the data for each label into training and test sets by randomly selecting a proportion of files from each label.

    [labels,files] = folders2labels(fullfile(dataFolder,string(classes)),...
        "IncludeSubfolders",true,...
        "FileExtensions",".avi");

    indices = splitlabels(labels,0.8,"randomized");

    trainFilenames = files(indices{1});
    testFilenames  = files(indices{2});

To normalize the input data for the network, the minimum and maximum values for the data set are provided in the MAT file inputStatistics.mat, attached to this example. To find the minimum and maximum values for a different data set, use the inputStatistics supporting function, listed at the end of this example.

    inputStatsFilename = "inputStatistics.mat";
    if ~exist(inputStatsFilename,"file")
        disp("Reading all the training data for input statistics...")
        inputStats = inputStatistics(dataFolder);
    else
        d = load(inputStatsFilename);
        inputStats = d.inputStats;
    end

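Load Data Set

This example uses a datastore to read the video scenes, the corresponding optical flow data, and the corresponding labels from the video files.

Specify the number of video frames the datastore should output each time data is read from it.

    numFrames = 64;

A value of 64 is used here to balance memory usage and classification time. Common values to consider are 16, 32, 64, or 128. Using more frames helps capture additional temporal information, but requires more memory. You might need to lower this value depending on your system resources. Empirical analysis is required to determine the optimal number of frames.

Next, specify the height and width of the frames the datastore should output. The datastore automatically resizes the raw video frames to the specified size to enable batch processing of multiple video sequences.

    frameSize = [112,112];

A value of [112 112] is used to capture longer temporal relationships in the video scene, which helps classify activities with long durations. Common values for the size are [112 112], [224 224], or [256 256]. Smaller sizes allow more video frames to be used for the same memory and processing time, at the cost of spatial resolution. The minimum height and width of the video frames in the HMDB51 data set are 240 and 176, respectively. If you want the datastore to read frames at a size larger than these minimum values, such as [256 256], first resize the frames using imresize. As with the number of frames, empirical analysis is required to determine the optimal values.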
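As a rough illustration of the memory trade-off described above, the following sketch estimates the size of the input data alone for one mini-batch. It assumes single-precision storage and the mini-batch size of 20 that this example uses for training; network activations and gradients, which usually dominate memory use, are not counted, so the result is only a lower bound.

```matlab
% Back-of-the-envelope estimate of input memory per mini-batch.
% Assumes single precision (4 bytes per value); activations and
% gradients are deliberately not counted.
numFrames     = 64;
frameSize     = [112 112];
rgbChannels   = 3;
flowChannels  = 2;
miniBatchSize = 20;
bytesPerValue = 4;

rgbBytes   = prod([frameSize rgbChannels numFrames])  * bytesPerValue;
flowBytes  = prod([frameSize flowChannels numFrames]) * bytesPerValue;
batchBytes = (rgbBytes + flowBytes) * miniBatchSize;

fprintf("RGB clip:   %.2f MiB\n", rgbBytes / 2^20);
fprintf("Flow clip:  %.2f MiB\n", flowBytes / 2^20);
fprintf("Mini-batch: %.2f MiB\n", batchBytes / 2^20);
```

With these settings the inputs alone come to roughly 306 MiB per mini-batch. Doubling numFrames doubles that figure, and doubling both frame dimensions quadruples it.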

Specify the number of channels as 3 for the RGB video subnetwork and 2 for the optical flow subnetwork of the I3D video classifier. The two channels for optical flow data are the x and y components of velocity, Vx and Vy, respectively.

    rgbChannels = 3;
    flowChannels = 2;

Use the helper function, createFileDatastore, to configure two FileDatastore objects for loading the data, one for training and another for validation. The helper function is listed at the end of this example. Each datastore reads a video file to provide RGB data and the corresponding label information.

    isDataForTraining = true;
    dsTrain = createFileDatastore(trainFilenames,numFrames,rgbChannels,classes,isDataForTraining);

    isDataForTraining = false;
    dsVal = createFileDatastore(testFilenames,numFrames,rgbChannels,classes,isDataForTraining);

Define Network Architecture

I3D Network

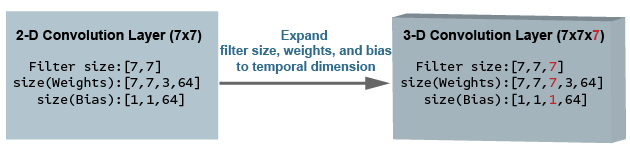

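Using a 3-D CNN is a natural approach to extracting spatio-temporal features from videos. You can create an I3D network from a pretrained 2-D image classification network, such as Inception v1 or ResNet-50, by expanding the 2-D filters and pooling kernels into 3-D. This procedure reuses the weights learned from the image classification task to bootstrap the video recognition task.

The figure shows an example of how to inflate a 2-D convolution layer into a 3-D convolution layer. The inflation involves expanding the filter size, weights, and bias by adding a third dimension (the temporal dimension).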
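The inflation step can be sketched on a single filter using base MATLAB only. The sketch follows the approach described for I3D in [1], where the 2-D weights are repeated along the new temporal dimension and divided by the number of repetitions; it is an assumption, not something this example states, that the toolbox performs inflation in exactly this way. The filter values and the temporal extent T are made up for illustration.

```matlab
% Sketch of inflating one 2-D filter into a 3-D filter.
% The 1/T rescaling follows the description in [1]; the filter values
% and T are hypothetical.
w2d = [1 2 1; 2 4 2; 1 2 1] / 16;   % 3x3 2-D filter
T   = 3;                            % temporal extent of the inflated filter

% Repeat the 2-D weights T times along a new third (temporal) dimension
% and divide by T so that the summed response is preserved.
w3d = repmat(w2d, 1, 1, T) / T;     % 3x3x3 filter

% A clip made of T identical frames produces the same response as the
% 2-D filter applied to a single frame.
frame = rand(3);
clip  = repmat(frame, 1, 1, T);
response2d = sum(w2d .* frame, "all");
response3d = sum(w3d .* clip,  "all");
disp(abs(response2d - response3d) < 1e-12)
```

Because the inflated filter reproduces the 2-D response on static content, the pretrained image weights remain a sensible starting point for video training.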

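Two-Stream I3D Network

Video data can be considered to have two parts: a spatial component and a temporal component.

The spatial component comprises information about the shape, texture, and color of the objects in the video. RGB data contains this information.

The temporal component comprises information about the motion of objects across the frames and depicts important movements between the camera and the objects in a scene. Computing optical flow is a common technique for extracting temporal information from video.

A two-stream CNN incorporates a spatial subnetwork and a temporal subnetwork [2]. A convolutional neural network trained on dense optical flow together with a video data stream can achieve better performance with limited training data than one trained on raw stacked RGB frames. The following illustration shows a typical two-stream I3D network.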

Configure Inflated-3D (I3D) Video Classifier for Transfer Learning

In this example, you create an I3D video classifier based on the GoogLeNet architecture, a 3-D convolutional neural network video classifier pretrained on the Kinetics-400 data set.

Specify GoogLeNet as the backbone convolutional neural network architecture for the I3D video classifier, which contains two subnetworks, one for video data and another for optical flow data.

    baseNetwork = "googlenet-video-flow";

Specify the input size for the Inflated-3D video classifier.

    inputSize = [frameSize,rgbChannels,numFrames];

Obtain the minimum and maximum values for the RGB and optical flow data from the inputStats structure loaded from the inputStatistics.mat file. These values are needed to normalize the input data.

    oflowMin = squeeze(inputStats.oflowMin)';
    oflowMax = squeeze(inputStats.oflowMax)';
    rgbMin   = squeeze(inputStats.rgbMin)';
    rgbMax   = squeeze(inputStats.rgbMax)';

    stats.Video.Min               = rgbMin;
    stats.Video.Max               = rgbMax;
    stats.Video.Mean              = [];
    stats.Video.StandardDeviation = [];

    stats.OpticalFlow.Min               = oflowMin(1:flowChannels);
    stats.OpticalFlow.Max               = oflowMax(1:flowChannels);
    stats.OpticalFlow.Mean              = [];
    stats.OpticalFlow.StandardDeviation = [];

Create the I3D video classifier by using the inflated3dVideoClassifier function.

    i3d = inflated3dVideoClassifier(baseNetwork,string(classes),...
        "InputSize",inputSize,...
        "InputNormalizationStatistics",stats);

Specify a model name for the video classifier.

    i3d.ModelName = "Inflated-3D Activity Recognizer Using Video and Optical Flow";

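Augment and Preprocess Training Data

Data augmentation provides a way to make the most of limited data sets for training. Augmentation on video data must be the same for a collection of frames, that is, a video sequence, based on the network input size. Minor changes, such as translating, cropping, or transforming an image, provide new, distinct, and unique images that you can use to train a robust video classifier. Datastores are a convenient way to read and augment collections of data. Augment the training video data by using the augmentVideo supporting function, defined at the end of this example.

    dsTrain = transform(dsTrain,@augmentVideo);

Preprocess the training video data to resize it to the Inflated-3D video classifier input size by using the preprocessVideoClips function, defined at the end of this example. Specify the InputNormalizationStatistics property of the video classifier and the input size to the preprocessing function as field values in a struct, preprocessInfo. The InputNormalizationStatistics property is used to rescale the video frames and optical flow data to between -1 and 1. The input size is used to resize the video frames using imresize based on the SizingOption value in the preprocessInfo struct. Alternatively, you can use "randomcrop" or "centercrop" to random crop or center crop the input data to the input size of the video classifier. Note that data augmentation is not applied to the test and validation data. Ideally, test and validation data should be representative of the original data and is left unmodified for unbiased evaluation.

    preprocessInfo.Statistics = i3d.InputNormalizationStatistics;
    preprocessInfo.InputSize = inputSize;
    preprocessInfo.SizingOption = "resize";
    dsTrain = transform(dsTrain,@(data)preprocessVideoClips(data,preprocessInfo));
    dsVal = transform(dsVal,@(data)preprocessVideoClips(data,preprocessInfo));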
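The rescaling to the range from -1 to 1 can be illustrated in isolation. This minimal sketch uses the base MATLAB rescale function with explicit input bounds; the bounds 0 and 255 and the sample values are illustrative assumptions, whereas the example itself takes the bounds from the input statistics.

```matlab
% Min-max rescaling to [-1, 1] with known input bounds.
% The bounds and sample values are illustrative only.
x     = single([0 63.75 127.5 255]);
inMin = 0;
inMax = 255;

% Built-in rescale with explicit input bounds.
y = rescale(x, -1, 1, "InputMin", inMin, "InputMax", inMax);

% Equivalent explicit formula:
% lower + (x - inMin) / (inMax - inMin) * (upper - lower)
yManual = -1 + (x - inMin) ./ (inMax - inMin) * 2;

disp(y)
disp(max(abs(y - yManual)))
```

Using bounds computed once over the whole data set, rather than per clip, keeps the mapping identical for training, validation, and test data.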

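Define Model Gradients Function

Create the supporting function modelGradients, listed at the end of this example. The modelGradients function takes as input the I3D video classifier i3d, a mini-batch of input data dlRGB and dlFlow, and a mini-batch of ground truth label data dlY. The function returns the training loss value, the gradients of the loss with respect to the learnable parameters of the classifier, and the mini-batch accuracy of the classifier.

The loss is calculated by computing the average of the cross-entropy losses of the predictions from each of the subnetworks. The output predictions of the network are probabilities between 0 and 1 for each of the classes.

The accuracy of the classifier is calculated by taking the average of the RGB and optical flow predictions and comparing it to the ground truth labels of the inputs.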
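The fusion of losses and predictions described above can be sketched on plain arrays. The probabilities below are made up, and the anonymous function ce is a plain-array stand-in (cross-entropy averaged over observations) for the crossentropy function that the example applies to dlarray data.

```matlab
% Toy example of loss and prediction fusion for two streams.
% Rows are classes, columns are observations; all numbers are made up.
predRGB  = [0.7 0.2; 0.2 0.5; 0.1 0.3];
predFlow = [0.5 0.1; 0.3 0.3; 0.2 0.6];
Y        = [1 0; 0 0; 0 1];            % one-hot ground truth (classes 1 and 3)

% Cross-entropy averaged over observations (stand-in for crossentropy).
ce = @(P, Y) -sum(Y .* log(P), "all") / size(Y, 2);

rgbLoss  = ce(predRGB, Y);
flowLoss = ce(predFlow, Y);
loss     = mean([rgbLoss, flowLoss]);  % fused loss

% Fuse the predictions by averaging, then pick the most likely class.
predFused   = (predRGB + predFlow) / 2;
[~, yTrue]  = max(Y, [], 1);
[~, yFused] = max(predFused, [], 1);
[~, yRGB]   = max(predRGB, [], 1);

accFused = mean(yFused == yTrue);
accRGB   = mean(yRGB == yTrue);
```

In this toy case the RGB stream alone misclassifies the second observation, while the averaged prediction is correct for both.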

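Specify Training Options

Train with a mini-batch size of 20 for 600 iterations. Specify the iteration after which to save the video classifier with the best validation accuracy by using the SaveBestAfterIteration parameter.

Specify the cosine-annealing learning rate schedule [3] parameters:

A minimum learning rate of 1e-4.

A maximum learning rate of 1e-3.

Cosine numbers of iterations of 100, 200, and 300, after which the learning rate schedule cycle restarts. The option CosineNumIterations defines the width of each cosine cycle.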
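A single cosine-annealing cycle with the rates listed above can be sketched as follows. This is a simplified illustration under stated assumptions: it covers only the first cycle of 100 iterations, counts the position t from 0, and leaves out the restart logic that the example's own schedule function implements.

```matlab
% One cosine-annealing cycle, simplified: the rate starts at maxRate and
% decays to minRate over cycleLength iterations. Restarts are left out.
minRate     = 1e-4;
maxRate     = 1e-3;
cycleLength = 100;   % first entry of CosineNumIterations

lrAt = @(t) minRate + (maxRate - minRate) * (1 + cos(pi * t / cycleLength)) / 2;

t  = 0:25:cycleLength;   % a few sample positions within the cycle
lr = lrAt(t);
disp([t; lr])
```

The rate equals the maximum at the start of the cycle, the midpoint of the two rates halfway through, and the minimum at the end, after which a restart would jump back to the maximum.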

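Specify the parameters for SGDM optimization. Initialize the SGDM optimization parameters at the beginning of the training:

A momentum of 0.9.

An initial velocity parameter initialized as [].

An L2 regularization factor of 0.0005.

Specify whether to dispatch the data in the background using a parallel pool. If DispatchInBackground is set to true, the example opens a parallel pool with the specified number of parallel workers and creates a DispatchInBackgroundDatastore, provided as part of this example, that dispatches the data in the background to speed up training using asynchronous data loading and preprocessing. By default, this example uses a GPU if one is available. Otherwise, it uses a CPU. Using a GPU requires Parallel Computing Toolbox™ and a CUDA® enabled NVIDIA® GPU. For information about the supported compute capabilities, see GPU Computing Requirements (Parallel Computing Toolbox).

    params.Classes = classes;
    params.MiniBatchSize = 20;
    params.NumIterations = 600;
    params.SaveBestAfterIteration = 400;
    params.CosineNumIterations = [100, 200, 300];
    params.MinLearningRate = 1e-4;
    params.MaxLearningRate = 1e-3;
    params.Momentum = 0.9;
    params.VelocityRGB = [];
    params.VelocityFlow = [];
    params.L2Regularization = 0.0005;
    params.ProgressPlot = true;
    params.Verbose = true;
    params.ValidationData = dsVal;
    params.DispatchInBackground = false;
    params.NumWorkers = 4;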

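Train I3D Video Classifier

Train the I3D video classifier using the RGB video data and optical flow data.

For each epoch:

Shuffle the data before looping over mini-batches of data.

Use minibatchqueue to loop over the mini-batches. The supporting function createMiniBatchQueue, listed at the end of this example, uses the given training datastore to create a minibatchqueue.

Use the validation data dsVal to validate the networks.

Display the loss and accuracy results for each epoch using the supporting function displayVerboseOutputEveryEpoch, listed at the end of this example.

For each mini-batch:

Convert the video data or optical flow data and the labels to dlarray objects with the underlying type single.

To enable processing of the time dimension of the video data using the I3D video classifier, specify the temporal sequence dimension, "T". Specify the dimension labels "SSCTB" (spatial, spatial, channel, temporal, batch) for the video data, and "CB" for the label data.

The minibatchqueue object uses the supporting function batchVideoAndFlow, listed at the end of this example, to batch the RGB video and optical flow data.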

    params.ModelFilename = "inflated3d-FiveClasses-hmdb51.mat";
    if doTraining
        epoch     = 1;
        bestLoss  = realmax;

        accTrain     = [];
        accTrainRGB  = [];
        accTrainFlow = [];
        lossTrain    = [];

        iteration = 1;
        start     = tic;
        trainTime = start;
        shuffled  = shuffleTrainDs(dsTrain);

        % The number of outputs is three: one for RGB frames, one for optical
        % flow data, and one for ground truth labels.
        numOutputs = 3;
        mbq        = createMiniBatchQueue(shuffled,numOutputs,params);

        % Use the initializeTrainingProgressPlot and initializeVerboseOutput
        % supporting functions, listed at the end of the example, to initialize
        % the training progress plot and the verbose output that display the
        % training loss, training accuracy, and validation accuracy.
        plotters = initializeTrainingProgressPlot(params);
        initializeVerboseOutput(params);

        while iteration <= params.NumIterations

            % Iterate through the data set.
            [dlVideo,dlFlow,dlY] = next(mbq);

            % Evaluate the model gradients and loss using dlfeval.
            [gradRGB,gradFlow,loss,acc,accRGB,accFlow,stateRGB,stateFlow] = ...
                dlfeval(@modelGradients,i3d,dlVideo,dlFlow,dlY);

            % Accumulate the loss and accuracies.
            lossTrain    = [lossTrain,loss];
            accTrain     = [accTrain,acc];
            accTrainRGB  = [accTrainRGB,accRGB];
            accTrainFlow = [accTrainFlow,accFlow];

            % Update the network state.
            i3d.VideoState       = stateRGB;
            i3d.OpticalFlowState = stateFlow;

            % Update the gradients and parameters for the RGB and optical flow
            % subnetworks using the SGDM optimizer.
            [i3d.VideoLearnables,params.VelocityRGB] = ...
                updateLearnables(i3d.VideoLearnables,gradRGB,params,params.VelocityRGB,iteration);
            [i3d.OpticalFlowLearnables,params.VelocityFlow,learnRate] = ...
                updateLearnables(i3d.OpticalFlowLearnables,gradFlow,params,params.VelocityFlow,iteration);

            if ~hasdata(mbq) || iteration == params.NumIterations
                % The current epoch is complete. Do validation and update progress.
                trainTime = toc(trainTime);

                [cmat,validationTime,lossValidation,accValidation,accValidationRGB,accValidationFlow] = ...
                    doValidation(params,i3d);

                accTrain     = mean(accTrain);
                accTrainRGB  = mean(accTrainRGB);
                accTrainFlow = mean(accTrainFlow);
                lossTrain    = mean(lossTrain);

                % Update the training progress.
                displayVerboseOutputEveryEpoch(params,start,learnRate,epoch,iteration,...
                    accTrain,accTrainRGB,accTrainFlow,...
                    accValidation,accValidationRGB,accValidationFlow,...
                    lossTrain,lossValidation,trainTime,validationTime);
                updateProgressPlot(params,plotters,epoch,iteration,start,lossTrain,accTrain,accValidation);

                % Save the trained video classifier and the parameters that gave
                % the best validation loss so far. Use the saveData supporting
                % function, listed at the end of this example.
                bestLoss = saveData(i3d,bestLoss,iteration,cmat,lossTrain,lossValidation,...
                    accTrain,accValidation,params);
            end

            if ~hasdata(mbq) && iteration < params.NumIterations
                % The current epoch is complete. Initialize the training loss,
                % accuracy values, and minibatchqueue for the next epoch.
                accTrain     = [];
                accTrainRGB  = [];
                accTrainFlow = [];
                lossTrain    = [];

                trainTime  = tic;
                epoch      = epoch + 1;
                shuffled   = shuffleTrainDs(dsTrain);
                numOutputs = 3;
                mbq        = createMiniBatchQueue(shuffled,numOutputs,params);
            end

            iteration = iteration + 1;
        end

        % Display a message when training is complete.
        endVerboseOutput(params);

        disp("Model saved to: " + params.ModelFilename);
    end

Evaluate Trained Network

Use the test data set to evaluate the accuracy of the trained video classifier.

Load the best model saved during training or use the pretrained model.

    if doTraining
        transferLearned = load(params.ModelFilename);
        inflated3dPretrained = transferLearned.data.inflated3d;
    end

Create a minibatchqueue object to load batches of the test data.

    numOutputs = 3;
    mbq = createMiniBatchQueue(params.ValidationData,numOutputs,params);

For each batch of test data, make predictions using the RGB and optical flow networks, take the average of the predictions, and compute the prediction accuracy using a confusion matrix.

    numClasses = numel(classes);
    cmat = sparse(numClasses,numClasses);
    while hasdata(mbq)
        [dlRGB,dlFlow,dlY] = next(mbq);

        % Pass the video input as RGB and optical flow data through the
        % two-stream I3D video classifier to get the separate predictions.
        [dlYPredRGB,dlYPredFlow] = predict(inflated3dPretrained,dlRGB,dlFlow);

        % Fuse the predictions by calculating the average of the predictions.
        dlYPred = (dlYPredRGB + dlYPredFlow)/2;

        % Calculate the accuracy of the predictions.
        [~,YTest] = max(dlY,[],1);
        [~,YPred] = max(dlYPred,[],1);

        cmat = aggregateConfusionMetric(cmat,YTest,YPred);
    end

Compute the average classification accuracy for the trained networks.

    accuracyEval = sum(diag(cmat))./sum(cmat,"all")

    accuracyEval = 0.8850

Display the confusion matrix.

    figure
    chart = confusionchart(cmat,classes);

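The Inflated-3D video classifier pretrained on the Kinetics-400 data set provides better performance for human activity recognition with transfer learning. The training above was run on a 24 GB Titan-X GPU and took about 100 minutes. When training from scratch on a small activity recognition video data set, training time and convergence take much longer than with the pretrained video classifier. Transfer learning using the Kinetics-400 pretrained Inflated-3D video classifier also avoids overfitting the classifier when training runs for a larger number of epochs. However, the SlowFast video classifier and the R(2+1)D video classifier pretrained on the Kinetics-400 data set provide better performance and faster convergence during training than the Inflated-3D video classifier. To learn more about video recognition using deep learning, see Getting Started with Video Classification Using Deep Learning.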

Supporting Functions

inputStatistics

The inputStatistics function takes as input the name of the folder containing the HMDB51 data, and calculates the minimum and maximum values for the RGB data and the optical flow data. The minimum and maximum values are used as normalization inputs to the input layer of the networks. This function also obtains the number of frames in each of the video files for later use when training and testing the network. To find the minimum and maximum values for a different data set, call this function with the name of a folder containing that data set.

    function inputStats = inputStatistics(dataFolder)
        ds = createDatastore(dataFolder);
        ds.ReadFcn = @getMinMax;

        tic;
        tt = tall(ds);
        varnames = {'rgbMax','rgbMin','oflowMax','oflowMin'};
        stats = gather(groupsummary(tt,[],{'max','min'},varnames));
        inputStats.Filename = gather(tt.Filename);
        inputStats.NumFrames = gather(tt.NumFrames);
        inputStats.rgbMax = stats.max_rgbMax;
        inputStats.rgbMin = stats.min_rgbMin;
        inputStats.oflowMax = stats.max_oflowMax;
        inputStats.oflowMin = stats.min_oflowMin;
        save('inputStatistics.mat','inputStats');
        toc;
    end

    function data = getMinMax(filename)
        reader = VideoReader(filename);
        opticFlow = opticalFlowFarneback;
        data = [];
        while hasFrame(reader)
            frame = readFrame(reader);
            [rgb,oflow] = findMinMax(frame,opticFlow);
            data = assignMinMax(data,rgb,oflow);
        end

        totalFrames = floor(reader.Duration * reader.FrameRate);
        totalFrames = min(totalFrames,reader.NumFrames);

        [labelName,filename] = getLabelFilename(filename);
        data.Filename = fullfile(labelName,filename);
        data.NumFrames = totalFrames;

        data = struct2table(data,'AsArray',true);
    end

    function [labelName,filename] = getLabelFilename(filename)
        fileNameSplit = split(filename,'/');
        labelName = fileNameSplit{end-1};
        filename = fileNameSplit{end};
    end

    function data = assignMinMax(data,rgb,oflow)
        if isempty(data)
            data.rgbMax = rgb.Max;
            data.rgbMin = rgb.Min;
            data.oflowMax = oflow.Max;
            data.oflowMin = oflow.Min;
            return;
        end
        data.rgbMax = max(data.rgbMax,rgb.Max);
        data.rgbMin = min(data.rgbMin,rgb.Min);

        data.oflowMax = max(data.oflowMax,oflow.Max);
        data.oflowMin = min(data.oflowMin,oflow.Min);
    end

    function [rgbMinMax,oflowMinMax] = findMinMax(rgb,opticFlow)
        rgbMinMax.Max = max(rgb,[],[1,2]);
        rgbMinMax.Min = min(rgb,[],[1,2]);

        gray = rgb2gray(rgb);
        flow = estimateFlow(opticFlow,gray);
        oflow = cat(3,flow.Vx,flow.Vy,flow.Magnitude);

        oflowMinMax.Max = max(oflow,[],[1,2]);
        oflowMinMax.Min = min(oflow,[],[1,2]);
    end

    function ds = createDatastore(folder)
        ds = fileDatastore(folder,...
            'IncludeSubfolders',true,...
            'FileExtensions','.avi',...
            'UniformRead',true,...
            'ReadFcn',@getMinMax);
        disp("NumFiles: " + numel(ds.Files));
    end

createFileDatastore

The createFileDatastore function creates a FileDatastore object using the given file names. The FileDatastore object reads the data in 'partialfile' mode, so every read can return a partial set of frames from a video. This feature helps when reading large video files whose frames do not all fit in memory.

    function datastore = createFileDatastore(trainingFolder,numFrames,numChannels,classes,isDataForTraining)
        readFcn = @(f,u)readVideo(f,u,numFrames,numChannels,classes,isDataForTraining);
        datastore = fileDatastore(trainingFolder,...
            'IncludeSubfolders',true,...
            'FileExtensions','.avi',...
            'ReadFcn',readFcn,...
            'ReadMode','partialfile');
    end

shuffleTrainDs

The shuffleTrainDs function shuffles the files present in the training datastore dsTrain.

    function shuffled = shuffleTrainDs(dsTrain)
        shuffled = copy(dsTrain);
        transformed = isa(shuffled,'matlab.io.datastore.TransformedDatastore');
        if transformed
            files = shuffled.UnderlyingDatastores{1}.Files;
        else
            files = shuffled.Files;
        end
        n = numel(files);
        shuffledIndices = randperm(n);
        if transformed
            shuffled.UnderlyingDatastores{1}.Files = files(shuffledIndices);
        else
            shuffled.Files = files(shuffledIndices);
        end

        reset(shuffled);
    end

readVideo

The readVideo function reads video frames and the corresponding label values for a given video file. During training, the read function reads a specific number of frames as per the network input size, with a randomly chosen starting frame. During testing, all the frames are read sequentially. The video frames are resized to the required classifier network input size for training, testing, and validation.

    function [data,userdata,done] = readVideo(filename,userdata,numFrames,numChannels,classes,isDataForTraining)
        if isempty(userdata)
            userdata.reader      = VideoReader(filename);
            userdata.batchesRead = 0;

            userdata.label = getLabel(filename,classes);

            totalFrames = floor(userdata.reader.Duration * userdata.reader.FrameRate);
            totalFrames = min(totalFrames,userdata.reader.NumFrames);
            userdata.totalFrames = totalFrames;
            userdata.datatype = class(read(userdata.reader,1));
        end
        reader      = userdata.reader;
        totalFrames = userdata.totalFrames;
        label       = userdata.label;
        batchesRead = userdata.batchesRead;

        if isDataForTraining
            video = readForTraining(reader,numFrames,totalFrames);
        else
            video = readForValidation(reader,userdata.datatype,numChannels,numFrames,totalFrames);
        end

        data = {video,label};

        batchesRead = batchesRead + 1;

        userdata.batchesRead = batchesRead;

        if numFrames > totalFrames
            numBatches = 1;
        else
            numBatches = floor(totalFrames/numFrames);
        end
        % Set the done flag to true if the reader has read all the frames or
        % if the data is for training.
        done = batchesRead == numBatches || isDataForTraining;
    end

readForTraining

The readForTraining function reads the video frames for training the video classifier. The function reads a specific number of frames as per the network input size, with a randomly chosen starting frame. If there are not enough frames left over, the video sequence is repeated to pad the required number of frames.

    function video = readForTraining(reader,numFrames,totalFrames)
        if numFrames >= totalFrames
            startIdx = 1;
            endIdx = totalFrames;
        else
            startIdx = randperm(totalFrames - numFrames + 1);
            startIdx = startIdx(1);
            endIdx = startIdx + numFrames - 1;
        end
        video = read(reader,[startIdx,endIdx]);
        if numFrames > totalFrames
            % Add more frames to fill in the network input size.
            additional = ceil(numFrames/totalFrames);
            video = repmat(video,1,1,1,additional);
            video = video(:,:,:,1:numFrames);
        end
    end
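The padding-by-repetition step can be seen more easily on a toy clip. In this sketch the clip is a 1-by-1-by-1-by-3 array whose "frames" are just the frame indices, which is an illustrative stand-in for real H-by-W-by-C-by-T video data.

```matlab
% Pad a short clip by repetition (H x W x C x T layout).
% Frame contents are replaced by the frame index for readability.
totalFrames = 3;
numFrames   = 8;
video = reshape(1:totalFrames, 1, 1, 1, totalFrames);   % 1x1x1x3 toy clip

% Tile the whole clip enough times to cover numFrames, then trim.
additional = ceil(numFrames / totalFrames);
padded = repmat(video, 1, 1, 1, additional);
padded = padded(:, :, :, 1:numFrames);

disp(squeeze(padded).')   % frame order after padding
```

The padded clip cycles through the original frames in order (1 2 3 1 2 3 1 2), so motion stays consistent within each repetition, with a discontinuity only where the clip wraps around.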

readForValidation

The readForValidation function reads the video frames for evaluating the trained video classifier. The function reads a specific number of frames sequentially as per the network input size. If there are not enough frames left over, the video sequence is repeated to pad the required number of frames.

    function video = readForValidation(reader,datatype,numChannels,numFrames,totalFrames)
        H = reader.Height;
        W = reader.Width;
        toRead = min([numFrames,totalFrames]);
        video = zeros([H,W,numChannels,toRead],datatype);
        frameIndex = 0;
        while hasFrame(reader) && frameIndex < numFrames
            frame = readFrame(reader);
            frameIndex = frameIndex + 1;
            video(:,:,:,frameIndex) = frame;
        end

        if frameIndex < numFrames
            video = video(:,:,:,1:frameIndex);
            additional = ceil(numFrames/frameIndex);
            video = repmat(video,1,1,1,additional);
            video = video(:,:,:,1:numFrames);
        end
    end

getLabel

The getLabel function obtains the label name from the full path of a file name. The label for a file is the folder in which it exists. For example, for a file path such as "/path/to/dataset/clapping/video_0001.avi", the label name is "clapping".

    function label = getLabel(filename,classes)
        folder = fileparts(string(filename));
        [~,label] = fileparts(folder);
        label = categorical(string(label),string(classes));
    end
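The two fileparts calls can be tried on their own. The path and the class list below are illustrative assumptions, not files that this example ships.

```matlab
% Extract the parent folder name of a file and use it as the label.
% The path and the class list are illustrative.
filename = "/path/to/dataset/clapping/video_0001.avi";
classes  = ["clapping", "waving"];

folder    = fileparts(filename);   % parent folder of the file
[~, name] = fileparts(folder);     % last component of that folder
label     = categorical(name, classes);
disp(label)
```

Passing the full class list to categorical keeps the set of categories identical for every file, even when a particular folder contains only one class.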

augmentVideo

The augmentVideo function uses the augment transform function provided by the augmentTransform supporting function to apply the same augmentation across a video sequence.

    function data = augmentVideo(data)
        numSequences = size(data,1);
        for ii = 1:numSequences
            video = data{ii,1};
            % HxWxC
            sz = size(video,[1,2,3]);
            % One augment function per sequence
            augmentFcn = augmentTransform(sz);
            data{ii,1} = augmentFcn(video);
        end
    end

augmentTransform

The augmentTransform function creates an augmentation method with random left-right flipping and scaling factors.

    function augmentFcn = augmentTransform(sz)
        % Randomly flip and scale the image.
        tform = randomAffine2d('XReflection',true,'Scale',[1 1.1]);
        rout = affineOutputView(sz,tform,'BoundsStyle','CenterOutput');

        augmentFcn = @(data)augmentData(data,tform,rout);

        function data = augmentData(data,tform,rout)
            data = imwarp(data,tform,'OutputView',rout);
        end
    end

preprocessVideoClips

The preprocessVideoClips function preprocesses the training video data to resize it to the Inflated-3D video classifier input size. It takes the InputNormalizationStatistics and InputSize properties of the video classifier in a struct, info. The InputNormalizationStatistics property is used to rescale the video frames and optical flow data to between -1 and 1. The input size is used to resize the video frames using imresize based on the SizingOption value in the info struct. Alternatively, you can use "randomcrop" or "centercrop" as values for SizingOption to random crop or center crop the input data to the input size of the video classifier.

    function preprocessed = preprocessVideoClips(data,info)
        inputSize = info.InputSize(1:2);
        sizingOption = info.SizingOption;
        switch sizingOption
            case "resize"
                sizingFcn = @(x)imresize(x,inputSize);
            case "randomcrop"
                sizingFcn = @(x)cropVideo(x,@randomCropWindow2d,inputSize);
            case "centercrop"
                sizingFcn = @(x)cropVideo(x,@centerCropWindow2d,inputSize);
        end
        numClips = size(data,1);

        rgbMin   = info.Statistics.Video.Min;
        rgbMax   = info.Statistics.Video.Max;
        oflowMin = info.Statistics.OpticalFlow.Min;
        oflowMax = info.Statistics.OpticalFlow.Max;

        numChannels = length(rgbMin);
        rgbMin = reshape(rgbMin,1,1,numChannels);
        rgbMax = reshape(rgbMax,1,1,numChannels);

        numChannels = length(oflowMin);
        oflowMin = reshape(oflowMin,1,1,numChannels);
        oflowMax = reshape(oflowMax,1,1,numChannels);

        preprocessed = cell(numClips,3);
        for ii = 1:numClips
            video   = data{ii,1};
            resized = sizingFcn(video);
            oflow   = computeFlow(resized,inputSize);

            % Cast the input to single.
            resized = single(resized);
            oflow   = single(oflow);

            % Rescale the input between -1 and 1.
            resized = rescale(resized,-1,1,"InputMin",rgbMin,"InputMax",rgbMax);
            oflow   = rescale(oflow,-1,1,"InputMin",oflowMin,"InputMax",oflowMax);

            preprocessed{ii,1} = resized;
            preprocessed{ii,2} = oflow;
            preprocessed{ii,3} = data{ii,2};
        end
    end

    function outData = cropVideo(data,cropFcn,inputSize)
        imsz = size(data,[1,2]);
        cropWindow = cropFcn(imsz,inputSize);
        numFrames = size(data,4);
        sz = [inputSize,size(data,3),numFrames];
        outData = zeros(sz,'like',data);
        for f = 1:numFrames
            outData(:,:,:,f) = imcrop(data(:,:,:,f),cropWindow);
        end
    end

computeFlow

The computeFlow function takes as input a video sequence, videoFrames, and computes the corresponding optical flow data, opticalFlowData, using opticalFlowFarneback. The optical flow data contains two channels, which correspond to the x- and y-components of velocity.

    function opticalFlowData = computeFlow(videoFrames,inputSize)
        opticalFlow = opticalFlowFarneback;
        numFrames = size(videoFrames,4);
        sz = [inputSize,2,numFrames];
        opticalFlowData = zeros(sz,'like',videoFrames);
        for f = 1:numFrames
            gray = rgb2gray(videoFrames(:,:,:,f));
            flow = estimateFlow(opticalFlow,gray);

            opticalFlowData(:,:,:,f) = cat(3,flow.Vx,flow.Vy);
        end
    end

createMiniBatchQueue

The createMiniBatchQueue function creates a minibatchqueue object that provides miniBatchSize amount of data from the given datastore. It also creates a DispatchInBackgroundDatastore if a parallel pool is open.

    function mbq = createMiniBatchQueue(datastore,numOutputs,params)
        if params.DispatchInBackground && isempty(gcp('nocreate'))
            % Start a parallel pool, if DispatchInBackground is true, to dispatch
            % data in the background using the parallel pool.
            c = parcluster('local');
            c.NumWorkers = params.NumWorkers;
            parpool('local',params.NumWorkers);
        end
        p = gcp('nocreate');
        if ~isempty(p)
            datastore = DispatchInBackgroundDatastore(datastore,p.NumWorkers);
        end

        inputFormat(1:numOutputs-1) = "SSCTB";
        outputFormat = "CB";
        mbq = minibatchqueue(datastore,numOutputs,...
            "MiniBatchSize",params.MiniBatchSize,...
            "MiniBatchFcn",@batchVideoAndFlow,...
            "MiniBatchFormat",[inputFormat,outputFormat]);
    end

batchVideoAndFlow

The batchVideoAndFlow function batches the video, optical flow, and label data from cell arrays. It uses the onehotencode function to encode ground truth categorical labels into one-hot arrays. A one-hot encoded array contains a 1 in the position corresponding to the class of the label, and 0 in every other position.

    function [video,flow,labels] = batchVideoAndFlow(video,flow,labels)
        % Batch dimension: 5
        video = cat(5,video{:});
        flow = cat(5,flow{:});

        % Batch dimension: 2
        labels = cat(2,labels{:});

        % Feature dimension: 1
        labels = onehotencode(labels,1);
    end
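To make the one-hot layout concrete, the following sketch builds the same classes-by-observations array using only base MATLAB. It is an illustration of the encoding, not a replacement for onehotencode, and the three sample labels are made up.

```matlab
% One-hot encode categorical labels without toolbox functions.
classNames = ["kiss", "laugh", "pick", "pour", "pushup"];
labels     = categorical(["pour", "kiss", "pour"], classNames);

idx    = double(labels);                          % category index per label
onehot = double((1:numel(classNames)).' == idx);  % classes x observations

disp(onehot)
```

Each column holds a single 1 in the row of its class, which matches the "CB" (channel, batch) format that the mini-batch queue assigns to the labels.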

modelGradients

The modelGradients function takes as input a mini-batch of RGB data dlRGB, the corresponding optical flow data dlFlow, and the corresponding target Y, and returns the corresponding loss, the gradients of the loss with respect to the learnable parameters, and the training accuracy. To compute the gradients, evaluate the modelGradients function using the dlfeval function in the training loop.

    function [gradientsRGB,gradientsFlow,loss,acc,accRGB,accFlow,stateRGB,stateFlow] = modelGradients(i3d,dlRGB,dlFlow,Y)

        % Pass the video input as RGB and optical flow data through the
        % two-stream network.
        [dlYPredRGB,dlYPredFlow,stateRGB,stateFlow] = forward(i3d,dlRGB,dlFlow);

        % Calculate the fused loss, gradients, and accuracy for the two-stream
        % predictions.
        rgbLoss = crossentropy(dlYPredRGB,Y);
        flowLoss = crossentropy(dlYPredFlow,Y);

        % Fuse the losses.
        loss = mean([rgbLoss,flowLoss]);

        gradientsRGB = dlgradient(rgbLoss,i3d.VideoLearnables);
        gradientsFlow = dlgradient(flowLoss,i3d.OpticalFlowLearnables);

        % Fuse the predictions by calculating the average of the predictions.
        dlYPred = (dlYPredRGB + dlYPredFlow)/2;

        % Calculate the accuracy of the predictions.
        [~,YTest] = max(Y,[],1);
        [~,YPred] = max(dlYPred,[],1);

        acc = gather(extractdata(sum(YTest == YPred)./numel(YTest)));

        % Calculate the accuracy of the RGB and flow predictions.
        [~,YPredRGB] = max(dlYPredRGB,[],1);
        [~,YPredFlow] = max(dlYPredFlow,[],1);

        accRGB = gather(extractdata(sum(YTest == YPredRGB)./numel(YTest)));
        accFlow = gather(extractdata(sum(YTest == YPredFlow)./numel(YTest)));
    end

updateLearnables

The updateLearnables function updates the provided learnables with the gradients and other parameters using the SGDM optimization function sgdmupdate.

    function [learnables,velocity,learnRate] = updateLearnables(learnables,gradients,params,velocity,iteration)
        % Determine the learning rate using the cosine-annealing learning rate schedule.
        learnRate = cosineAnnealingLearnRate(iteration,params);

        % Apply L2 regularization to the weights.
        idx = learnables.Parameter == "Weights";
        gradients(idx,:) = dlupdate(@(g,w) g + params.L2Regularization*w,gradients(idx,:),learnables(idx,:));

        % Update the network parameters using the SGDM optimizer.
        [learnables,velocity] = sgdmupdate(learnables,gradients,velocity,learnRate,params.Momentum);
    end
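A single regularized SGDM step can be worked through by hand on a toy weight vector. The sketch assumes one common formulation of momentum, velocity = momentum*velocity - learnRate*gradient followed by weights = weights + velocity; whether sgdmupdate matches this exactly in every detail is an assumption here. The weights and gradient are made-up numbers.

```matlab
% One SGDM step with L2 regularization on a toy weight vector.
% Assumed update rule: v = momentum*v - learnRate*g; w = w + v.
w         = [1; -2];        % weights
g         = [0.5; 0.5];     % gradient of the loss with respect to w
v         = zeros(2, 1);    % velocity, taken as zero on the first step
learnRate = 1e-3;
momentum  = 0.9;
l2Factor  = 0.0005;

g = g + l2Factor * w;               % add the gradient of the L2 penalty
v = momentum * v - learnRate * g;   % update the velocity
w = w + v;                          % update the weights
disp(w)
```

The L2 term nudges each weight toward zero in proportion to its size, which is why the example applies it only to the weights and not to the biases.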

cosineAnnealingLearnRate

The cosineAnnealingLearnRate function computes the learning rate based on the current iteration number, the minimum learning rate, the maximum learning rate, and the number of iterations for annealing [3].

    function lr = cosineAnnealingLearnRate(iteration,params)
        if iteration == params.NumIterations
            lr = params.MinLearningRate;
            return;
        end
        cosineNumIter = [0,params.CosineNumIterations];
        csum = cumsum(cosineNumIter);
        block = find(csum >= iteration,1,'first');
        cosineIter = iteration - csum(block - 1);
        annealingIteration = mod(cosineIter,cosineNumIter(block));
        cosineIteration = cosineNumIter(block);
        minR = params.MinLearningRate;
        maxR = params.MaxLearningRate;
        cosMult = 1 + cos(pi * annealingIteration / cosineIteration);
        lr = minR + ((maxR - minR) * cosMult / 2);
    end

aggregateConfusionMetric

The aggregateConfusionMetric function incrementally fills a confusion matrix based on the predicted results YPred and the expected results YTest.

    function cmat = aggregateConfusionMetric(cmat,YTest,YPred)
        YTest = gather(extractdata(YTest));
        YPred = gather(extractdata(YPred));
        [m,n] = size(cmat);
        cmat = cmat + full(sparse(YTest,YPred,1,m,n));
    end
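The incremental accumulation relies on sparse summing the values of repeated index pairs. The following sketch shows that behavior on two small, made-up mini-batches of class indices, using plain numeric arrays in place of dlarray data.

```matlab
% Accumulate a confusion matrix over two mini-batches of class indices.
numClasses = 3;
cmat = zeros(numClasses);

% Each batch: first row holds true labels, second row holds predictions.
batches = {[1 2 2 3; 1 2 3 3], [3 1; 3 2]};
for k = 1:numel(batches)
    yTrue = batches{k}(1, :);
    yPred = batches{k}(2, :);
    % sparse sums the values of repeated (row, column) pairs, which
    % turns the index pairs into counts.
    cmat = cmat + full(sparse(yTrue, yPred, 1, numClasses, numClasses));
end

accuracy = sum(diag(cmat)) / sum(cmat, "all");
disp(cmat)
disp(accuracy)
```

Rows index the true class and columns the predicted class, so the diagonal counts correct predictions; here four of the six observations are classified correctly.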

doValidation

The doValidation function validates the video classifier using the validation data.

    function [cmat,validationTime,lossValidation,accValidation,accValidationRGB,accValidationFlow] = doValidation(params,i3d)

        validationTime = tic;

        numOutputs = 3;
        mbq = createMiniBatchQueue(params.ValidationData,numOutputs,params);

        lossValidation = [];
        numClasses = numel(params.Classes);
        cmat = sparse(numClasses,numClasses);
        cmatRGB = sparse(numClasses,numClasses);
        cmatFlow = sparse(numClasses,numClasses);
        while hasdata(mbq)

            [dlX1,dlX2,dlY] = next(mbq);

            [loss,YTest,YPred,YPredRGB,YPredFlow] = predictValidation(i3d,dlX1,dlX2,dlY);

            lossValidation = [lossValidation,loss];
            cmat = aggregateConfusionMetric(cmat,YTest,YPred);
            cmatRGB = aggregateConfusionMetric(cmatRGB,YTest,YPredRGB);
            cmatFlow = aggregateConfusionMetric(cmatFlow,YTest,YPredFlow);
        end
        lossValidation = mean(lossValidation);
        accValidation = sum(diag(cmat))./sum(cmat,"all");
        accValidationRGB = sum(diag(cmatRGB))./sum(cmatRGB,"all");
        accValidationFlow = sum(diag(cmatFlow))./sum(cmatFlow,"all");

        validationTime = toc(validationTime);
    end

predictValidation

The predictValidation function calculates the loss and prediction values using the provided video classifier for RGB and optical flow data.

    function [loss,YTest,YPred,YPredRGB,YPredFlow] = predictValidation(i3d,dlRGB,dlFlow,Y)

        % Pass the video input through the two-stream Inflated-3D video classifier.
        [dlYPredRGB,dlYPredFlow] = predict(i3d,dlRGB,dlFlow);

        % Calculate the cross-entropy separately for the two-stream outputs.
        rgbLoss = crossentropy(dlYPredRGB,Y);
        flowLoss = crossentropy(dlYPredFlow,Y);

        % Fuse the losses.
        loss = mean([rgbLoss,flowLoss]);

        % Fuse the predictions by calculating the average of the predictions.
        dlYPred = (dlYPredRGB + dlYPredFlow)/2;

        % Calculate the accuracy of the predictions.
        [~,YTest] = max(Y,[],1);
        [~,YPred] = max(dlYPred,[],1);
        [~,YPredRGB] = max(dlYPredRGB,[],1);
        [~,YPredFlow] = max(dlYPredFlow,[],1);
    end

saveData

The saveData function saves the given Inflated-3D video classifier, accuracy, loss, and other training parameters to a MAT-file.

function bestLoss = saveData(inflated3d,bestLoss,iteration,cmat,lossTrain,lossValidation,...
    accTrain,accValidation,params)
if iteration >= params.SaveBestAfterIteration
    lossValue = extractdata(gather(lossValidation));
    % Save only when the validation loss improves on the best loss so far.
    if lossValue < bestLoss
        params = rmfield(params,"VelocityRGB");
        params = rmfield(params,"VelocityFlow");
        bestLoss = lossValue;
        inflated3d = gatherFromGPUToSave(inflated3d);
        data.BestLoss = bestLoss;
        data.TrainingLoss = extractdata(gather(lossTrain));
        data.TrainingAccuracy = accTrain;
        data.ValidationAccuracy = accValidation;
        data.ValidationConfmat = cmat;
        data.inflated3d = inflated3d;
        data.Params = params;
        save(params.ModelFilename,"data");
    end
end
end

gatherFromGPUToSave

The gatherFromGPUToSave function gathers data from the GPU so that the video classifier can be saved to disk.

function classifier = gatherFromGPUToSave(classifier)
if ~canUseGPU
    return;
end
% Gather every property whose name ends with "Learnables" or "State".
p = string(properties(classifier));
p = p(endsWith(p,["Learnables","State"]));
for jj = 1:numel(p)
    prop = p(jj);
    classifier.(prop) = gatherValues(classifier.(prop));
end
    function tbl = gatherValues(tbl)
        for ii = 1:height(tbl)
            tbl.Value{ii} = gather(tbl.Value{ii});
        end
    end
end

checkForHMDB51Folder

The checkForHMDB51Folder function checks for the downloaded data in the download folder.

function classes = checkForHMDB51Folder(dataLoc)
hmdbFolder = fullfile(dataLoc,"hmdb51_org");
if ~isfolder(hmdbFolder)
    error("Download 'hmdb51_org.rar' file using the supporting function 'downloadHMDB51' before running the example and extract the RAR file.");
end

classes = ["brush_hair","cartwheel","catch","chew","clap","climb","climb_stairs",...
    "dive","draw_sword","dribble","drink","eat","fall_floor","fencing",...
    "flic_flac","golf","handstand","hit","hug","jump","kick","kick_ball",...
    "kiss","laugh","pick","pour","pullup","punch","push","pushup","ride_bike",...
    "ride_horse","run","shake_hands","shoot_ball","shoot_bow","shoot_gun",...
    "sit","situp","smile","smoke","somersault","stand","swing_baseball","sword",...
    "sword_exercise","talk","throw","turn","walk","wave"];
expectFolders = fullfile(hmdbFolder,classes);
% Every class must have its own subfolder under hmdb51_org.
if ~all(arrayfun(@(x)exist(x,"dir"),expectFolders))
    error("Download hmdb51_org.rar using the supporting function 'downloadHMDB51' before running the example and extract the RAR file.");
end
end

downloadHMDB51

The downloadHMDB51 function downloads the data set and saves it to a directory.

function downloadHMDB51(dataLoc)

if nargin == 0
    dataLoc = pwd;
end
dataLoc = string(dataLoc);

if ~isfolder(dataLoc)
    mkdir(dataLoc);
end

dataUrl = "http://serre-lab.clps.brown.edu/wp-content/uploads/2013/10/hmdb51_org.rar";
options = weboptions("Timeout",Inf);
rarFileName = fullfile(dataLoc,"hmdb51_org.rar");

% Download the RAR file and save it to the download folder.
if ~isfile(rarFileName)
    disp("Downloading hmdb51_org.rar (2 GB) to the folder:")
    disp(dataLoc)
    disp("This download can take a few minutes...")
    websave(rarFileName,dataUrl,options);
    disp("Download complete.")
    disp("Extract the hmdb51_org.rar file contents to the folder: ")
    disp(dataLoc)
end
end

initializeTrainingProgressPlot

The initializeTrainingProgressPlot function configures two plots for displaying the training loss, training accuracy, and validation accuracy.

function plotters = initializeTrainingProgressPlot(params)
if params.ProgressPlot
    % Plot the loss, training accuracy, and validation accuracy.
    figure

    % Loss plot
    subplot(2,1,1)
    plotters.LossPlotter = animatedline;
    xlabel("Iteration")
    ylabel("Loss")

    % Accuracy plot
    subplot(2,1,2)
    plotters.TrainAccPlotter = animatedline("Color","b");
    plotters.ValAccPlotter = animatedline("Color","g");
    legend("Training Accuracy","Validation Accuracy","Location","northwest");
    xlabel("Iteration")
    ylabel("Accuracy")
else
    plotters = [];
end
end

updateProgressPlot

The updateProgressPlot function updates the progress plot with loss and accuracy information during training.

function updateProgressPlot(params,plotters,epoch,iteration,start,lossTrain,accuracyTrain,accuracyValidation)
if params.ProgressPlot
    % Update the training progress.
    D = duration(0,0,toc(start),"Format","hh:mm:ss");
    title(plotters.LossPlotter.Parent,"Epoch: " + epoch + ", Elapsed: " + string(D));
    addpoints(plotters.LossPlotter,iteration,double(gather(extractdata(lossTrain))));
    addpoints(plotters.TrainAccPlotter,iteration,accuracyTrain);
    addpoints(plotters.ValAccPlotter,iteration,accuracyValidation);
    drawnow
end
end

initializeVerboseOutput

The initializeVerboseOutput function displays the column headings for the table of training values, which shows the epoch, mini-batch accuracy, and other training values.

function initializeVerboseOutput(params)
if params.Verbose
    disp(" ")
    if canUseGPU
        disp("Training on GPU.")
    else
        disp("Training on CPU.")
    end
    p = gcp("nocreate");
    if ~isempty(p)
        disp("Training on parallel cluster '" + p.Cluster.Profile + "'. ")
    end
    disp("NumIterations:" + string(params.NumIterations));
    disp("MiniBatchSize:" + string(params.MiniBatchSize));
    disp("Classes:" + join(string(params.Classes),","));
    % The separator spans the full width of the table rows (169 characters).
    disp("|" + string(repmat('=',1,167)) + "|")
    disp("| Epoch | Iteration | Time Elapsed |     Mini-Batch Accuracy    |    Validation Accuracy     | Mini-Batch | Validation |  Base Learning  | Train Time | Validation Time |")
    disp("|       |           |  (hh:mm:ss)  |       (Avg:RGB:Flow)       |       (Avg:RGB:Flow)       |    Loss    |    Loss    |      Rate       | (hh:mm:ss) |   (hh:mm:ss)    |")
    disp("|" + string(repmat('=',1,167)) + "|")
end
end

displayVerboseOutputEveryEpoch

The displayVerboseOutputEveryEpoch function displays the verbose output for the training values, such as the epoch, mini-batch accuracy, validation accuracy, and mini-batch loss.

function displayVerboseOutputEveryEpoch(params,start,learnRate,epoch,iteration,...
    accTrain,accTrainRGB,accTrainFlow,accValidation,accValidationRGB,accValidationFlow,lossTrain,lossValidation,trainTime,validationTime)
if params.Verbose
    D = duration(0,0,toc(start),"Format","hh:mm:ss");
    trainTime = duration(0,0,trainTime,"Format","hh:mm:ss");
    validationTime = duration(0,0,validationTime,"Format","hh:mm:ss");

    lossValidation = gather(extractdata(lossValidation));
    lossValidation = compose("%.4f",lossValidation);

    accValidation = composePadAccuracy(accValidation);
    accValidationRGB = composePadAccuracy(accValidationRGB);
    accValidationFlow = composePadAccuracy(accValidationFlow);

    accVal = join([accValidation,accValidationRGB,accValidationFlow]," : ");

    lossTrain = gather(extractdata(lossTrain));
    lossTrain = compose("%.4f",lossTrain);

    accTrain = composePadAccuracy(accTrain);
    accTrainRGB = composePadAccuracy(accTrainRGB);
    accTrainFlow = composePadAccuracy(accTrainFlow);

    accTrain = join([accTrain,accTrainRGB,accTrainFlow]," : ");
    learnRate = compose("%.13f",learnRate);

    disp("| " + ...
        pad(string(epoch),5,"both") + " | " + ...
        pad(string(iteration),9,"both") + " | " + ...
        pad(string(D),12,"both") + " | " + ...
        pad(string(accTrain),26,"both") + " | " + ...
        pad(string(accVal),26,"both") + " | " + ...
        pad(string(lossTrain),10,"both") + " | " + ...
        pad(string(lossValidation),10,"both") + " | " + ...
        pad(string(learnRate),13,"both") + " | " + ...
        pad(string(trainTime),10,"both") + " | " + ...
        pad(string(validationTime),15,"both") + " |")
end

    function acc = composePadAccuracy(acc)
        % Format a fraction as a percentage and left-pad it to six characters.
        acc = compose("%.2f",acc*100) + "%";
        acc = pad(string(acc),6,"left");
    end
end
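Each accuracy cell in a table row combines three percentages (fused, RGB, and optical flow). A minimal sketch of that formatting step in isolation follows; the accuracy values and the `formatAcc` helper name are made up for illustration and are not part of the example code.

```matlab
% Made-up accuracy fractions for the fused, RGB, and optical flow outputs.
accAvg = 0.2078;
accRGB = 0.2222;
accFlow = 0.05;

% Format one fraction as a percentage and left-pad it to six characters.
% formatAcc is a hypothetical helper that mirrors composePadAccuracy.
formatAcc = @(acc) pad(compose("%.2f",acc*100) + "%",6,"left");

% Join the three padded fields into one table cell.
cellText = join([formatAcc(accAvg),formatAcc(accRGB),formatAcc(accFlow)]," : ");
disp(cellText)
```

Padding each field to a fixed width keeps the joined text at 24 characters regardless of the values, which is why the row code can center it in a 26-character column.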

endVerboseOutput

The endVerboseOutput function displays the closing line of the verbose output table at the end of training.

function endVerboseOutput(params)
if params.Verbose
    % Closing separator, the same width as the table rows (169 characters).
    disp("|" + string(repmat('=',1,167)) + "|")
end
end

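References

[1] Carreira, Joao, and Andrew Zisserman. "Quo Vadis, Action Recognition? A New Model and the Kinetics Dataset." Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR): 6299-6308. Honolulu, HI: IEEE, 2017.

[2] Simonyan, Karen, and Andrew Zisserman. "Two-Stream Convolutional Networks for Action Recognition in Videos." Advances in Neural Information Processing Systems 27, Long Beach, CA: NIPS, 2017.

[3] Loshchilov, Ilya, and Frank Hutter. "SGDR: Stochastic Gradient Descent with Warm Restarts." International Conference on Learning Representations 2017. Toulon, France: ICLR, 2017.

[4] Tran, Du, Heng Wang, Lorenzo Torresani, Jamie Ray, Yann LeCun, and Manohar Paluri. "A Closer Look at Spatiotemporal Convolutions for Action Recognition." Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2018, pp. 6450-6459.

[5] Feichtenhofer, Christoph, Haoqi Fan, Jitendra Malik, and Kaiming He. "SlowFast Networks for Video Recognition." Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2019.

[6] Kay, Will, Joao Carreira, Karen Simonyan, Brian Zhang, Chloe Hillier, Sudheendra Vijayanarasimhan, Fabio Viola, Tim Green, Trevor Back, Paul Natsev, Mustafa Suleyman, and Andrew Zisserman. "The Kinetics Human Action Video Dataset." arXiv preprint arXiv:1705.06950, 2017.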