Train Classifier Using Hyperparameter Optimization in Classification Learner App

This example shows how to tune hyperparameters of a classification support vector machine (SVM) model by using hyperparameter optimization in the Classification Learner app. Compare the test set performance of the trained optimizable SVM to that of the best-performing preset SVM model.

In the MATLAB® Command Window, load the ionosphere data set, and create a table containing the data.

    load ionosphere
    tbl = array2table(X);
    tbl.Y = Y;

Open Classification Learner. Click the Apps tab, and then click the arrow at the right of the Apps section to open the apps gallery. In the Machine Learning and Deep Learning group, click Classification Learner.

On the Classification Learner tab, in the File section, select New Session > From Workspace.

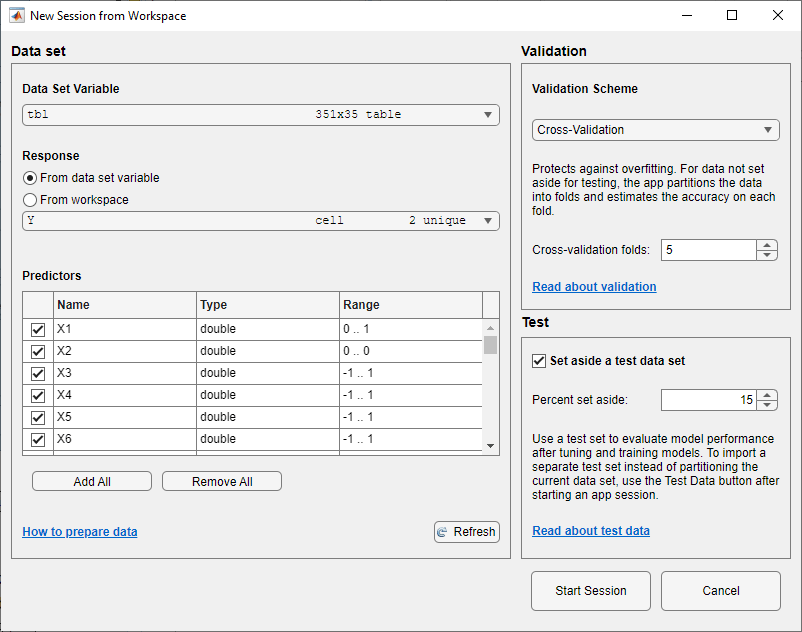

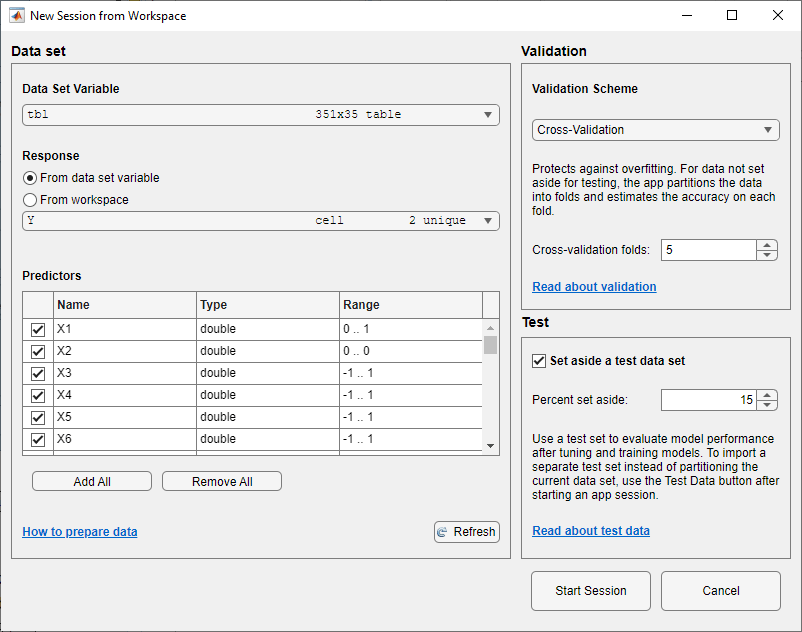

In the New Session from Workspace dialog box, select tbl from the Data Set Variable list. The app selects the response and predictor variables. The default response variable is Y. The default validation option is 5-fold cross-validation, to protect against overfitting.

In the Test section, click the check box to set aside a test data set. Specify to use 15 percent of the imported data as a test set.

To accept the options and continue, click Start Session.
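The data split chosen in the dialog box can be approximated at the command line. The following is a minimal sketch, not the app's internal procedure: the stratified holdout, the fixed random seed, and the variable names `trainTbl`, `testTbl`, and `valPartition` are assumptions made for illustration.

```matlab
% Load the data and build the same table used in the app.
load ionosphere
tbl = array2table(X);
tbl.Y = Y;

rng(0)  % assumption: fixed seed so that the split is repeatable

% Hold out 15 percent of the observations as a test set (stratified by class).
testPartition = cvpartition(tbl.Y, 'HoldOut', 0.15);
trainTbl = tbl(training(testPartition), :);
testTbl  = tbl(test(testPartition), :);

% Define 5-fold cross-validation on the remaining observations.
valPartition = cvpartition(trainTbl.Y, 'KFold', 5);

fprintf('Training/validation rows: %d, test rows: %d\n', ...
    height(trainTbl), height(testTbl));
```

Keeping the test rows out of both training and validation is what allows them to serve later as an independent check on the tuned model.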

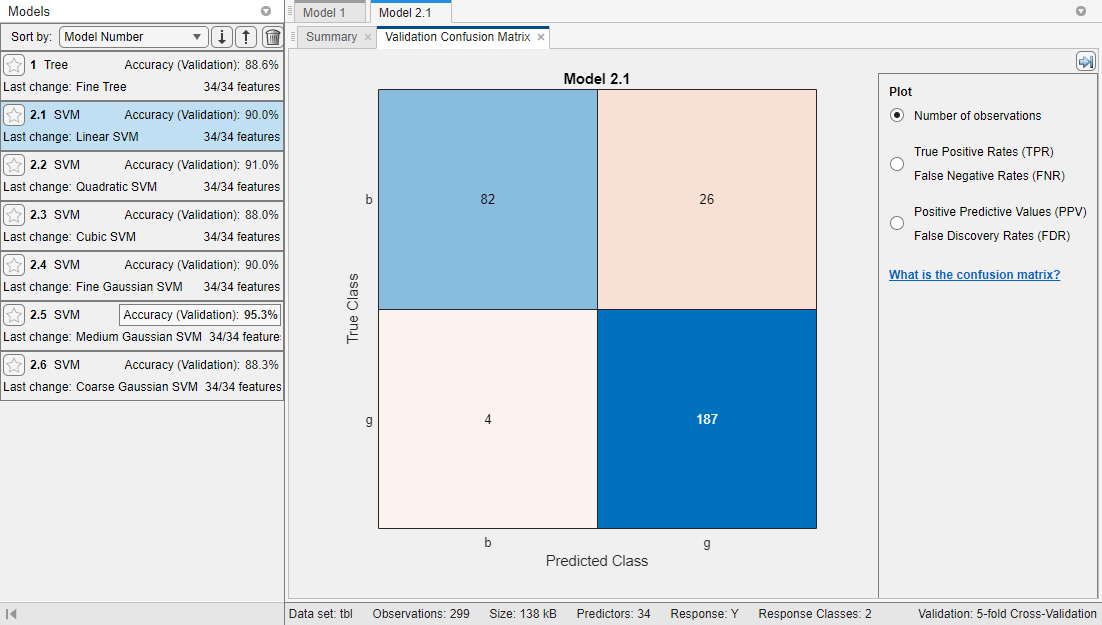

Train all preset SVM models. On the Classification Learner tab, in the Models section, click the arrow to open the gallery. In the Support Vector Machines group, click All SVMs. In the Train section, click Train All and select Train All. The app trains one of each SVM model type, as well as the default fine tree model, and displays the models in the Models pane.

Note

If you have Parallel Computing Toolbox™, then the app has the Use Parallel button toggled on by default. After you click Train All and select Train All or Train Selected, the app opens a parallel pool of workers. During this time, you cannot interact with the software. After the pool opens, you can continue to interact with the app while models train in parallel.

If you do not have Parallel Computing Toolbox, then the app has the Use Background Training check box in the Train All menu selected by default. After you click to train models, the app opens a background pool. After the pool opens, you can continue to interact with the app while models train in the background.
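For readers who want a command-line counterpart to training the preset SVM models, the sketch below cross-validates six SVM configurations with `fitcsvm`. The preset table is an approximation and an assumption of this sketch: the kernel scales (`sqrt(P)/4`, `sqrt(P)`, `sqrt(P)*4` for the Gaussian presets), the box constraint of 1, and standardization may not match the app's settings in every release. For brevity, the sketch uses all rows rather than the 85 percent that remains after the test split.

```matlab
load ionosphere
tbl = array2table(X);
tbl.Y = Y;

rng(0)  % assumption: fixed seed for a repeatable 5-fold partition
cvp = cvpartition(tbl.Y, 'KFold', 5);

P = size(X, 2);  % number of predictors

% Hypothetical preset table: name, kernel, polynomial order, kernel scale.
presets = {
    'Linear',          'linear',     [], 'auto'
    'Quadratic',       'polynomial', 2,  'auto'
    'Cubic',           'polynomial', 3,  'auto'
    'Fine Gaussian',   'gaussian',   [], sqrt(P)/4
    'Medium Gaussian', 'gaussian',   [], sqrt(P)
    'Coarse Gaussian', 'gaussian',   [], sqrt(P)*4
    };

valAcc = zeros(size(presets, 1), 1);
for k = 1:size(presets, 1)
    args = {'KernelFunction', presets{k,2}, 'KernelScale', presets{k,4}, ...
            'Standardize', true, 'BoxConstraint', 1};
    if ~isempty(presets{k,3})
        % PolynomialOrder is valid only for the polynomial kernel.
        args = [args, {'PolynomialOrder', presets{k,3}}]; %#ok<AGROW>
    end
    cvMdl = fitcsvm(tbl, 'Y', args{:}, 'CVPartition', cvp);
    valAcc(k) = 1 - kfoldLoss(cvMdl);  % kfoldLoss returns classification error
    fprintf('%-16s validation accuracy: %.1f%%\n', presets{k,1}, 100*valAcc(k));
end
```

Using one shared `cvpartition` object means every configuration is scored on the same folds, which makes the accuracies directly comparable.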

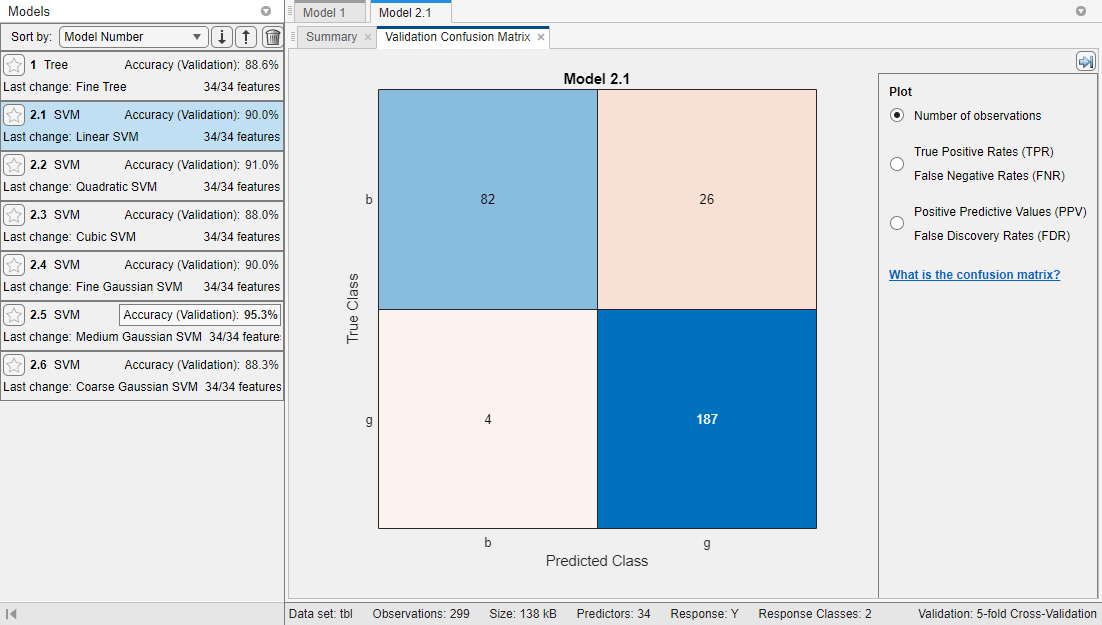

The app displays a validation confusion matrix for the first SVM model (model 2.1). Blue values indicate correct classifications, and red values indicate incorrect classifications. The Models pane on the left shows the validation accuracy for each model.

Note

Validation introduces some randomness into the results. Your model validation results can vary from the results shown in this example.
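A validation confusion matrix like the one described above can be computed from cross-validated predictions. In this sketch a linear SVM stands in for the first SVM model; that choice, the seed, and the variable names are assumptions rather than details taken from the app.

```matlab
load ionosphere
tbl = array2table(X);
tbl.Y = Y;

rng(0)  % assumption: fixed seed; a different seed changes the folds and the counts

% Cross-validate a linear SVM with 5 folds.
cvMdl = fitcsvm(tbl, 'Y', 'KernelFunction', 'linear', ...
    'Standardize', true, 'KFold', 5);

% Each observation is predicted by the fold model that did not train on it.
predicted = kfoldPredict(cvMdl);

% Rows are true classes, columns are predicted classes.
[C, order] = confusionmat(tbl.Y, predicted);
disp(order')
disp(C)

% Diagonal entries are correct classifications.
valAcc = sum(diag(C)) / sum(C(:));
fprintf('Validation accuracy: %.1f%%\n', 100*valAcc);
```

Changing the seed and rerunning the sketch gives slightly different counts, which is the randomness the note refers to.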

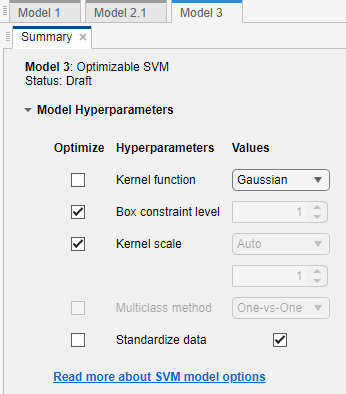

Select an optimizable SVM model to train. On the Classification Learner tab, in the Models section, click the arrow to open the gallery. In the Support Vector Machines group, click Optimizable SVM.

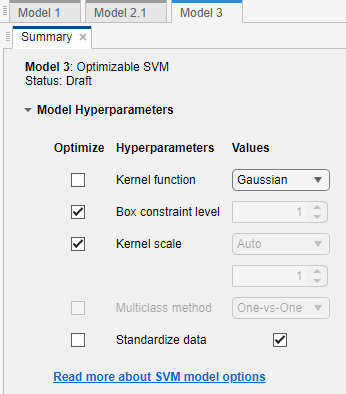

Select the model hyperparameters to optimize. In the Summary tab, you can select Optimize check boxes for the hyperparameters that you want to optimize. By default, all the check boxes for the available hyperparameters are selected. For this example, clear the Optimize check boxes for Kernel function and Standardize data. By default, the app disables the Optimize check box for Kernel scale whenever the kernel function has a fixed value other than Gaussian. Select a Gaussian kernel function, and select the Optimize check box for Kernel scale.

Train the optimizable model. In the Train section of the Classification Learner tab, click Train All and select Train Selected.
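A comparable optimization can be run at the command line with the `OptimizeHyperparameters` argument of `fitcsvm`. This is a sketch under stated assumptions, not a reproduction of the app: it assumes that the hyperparameters left selected are the box constraint and the kernel scale, that data standardization is fixed to `true`, and that Bayesian optimization with 30 evaluations and 5-fold validation is an adequate stand-in for the app's optimizer settings.

```matlab
load ionosphere
tbl = array2table(X);
tbl.Y = Y;

rng('default')  % fix the seed; results can still differ across releases and machines

% Optimizer settings (assumed to approximate the app's defaults).
opts = struct( ...
    'Optimizer', 'bayesopt', ...
    'MaxObjectiveEvaluations', 30, ...
    'Kfold', 5, ...
    'AcquisitionFunctionName', 'expected-improvement-plus', ...
    'ShowPlots', false, ...
    'Verbose', 0);

% Fix the kernel function and standardization; tune only the two numeric values.
mdl = fitcsvm(tbl, 'Y', ...
    'KernelFunction', 'gaussian', ...
    'Standardize', true, ...
    'OptimizeHyperparameters', {'BoxConstraint', 'KernelScale'}, ...
    'HyperparameterOptimizationOptions', opts);

% Inspect the optimization record stored with the model.
results = mdl.HyperparameterOptimizationResults;
disp(results.XAtMinObjective)
fprintf('Minimum observed validation error: %.4f\n', results.MinObjective);
```

Setting `ShowPlots` to `true` displays an objective plot while the optimization runs, which is convenient for interactive use.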

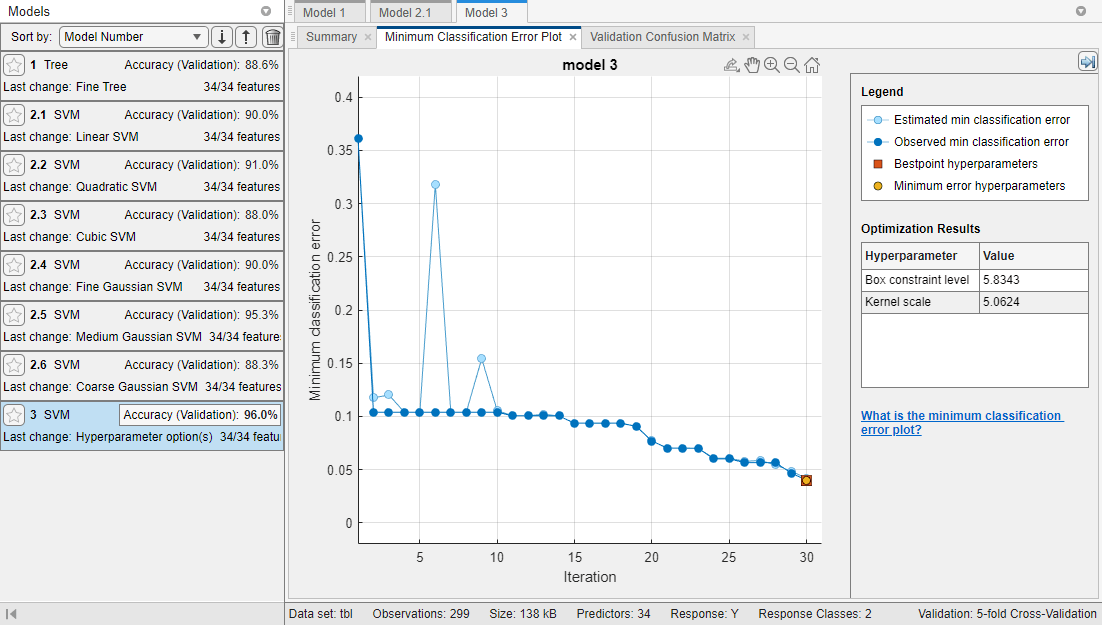

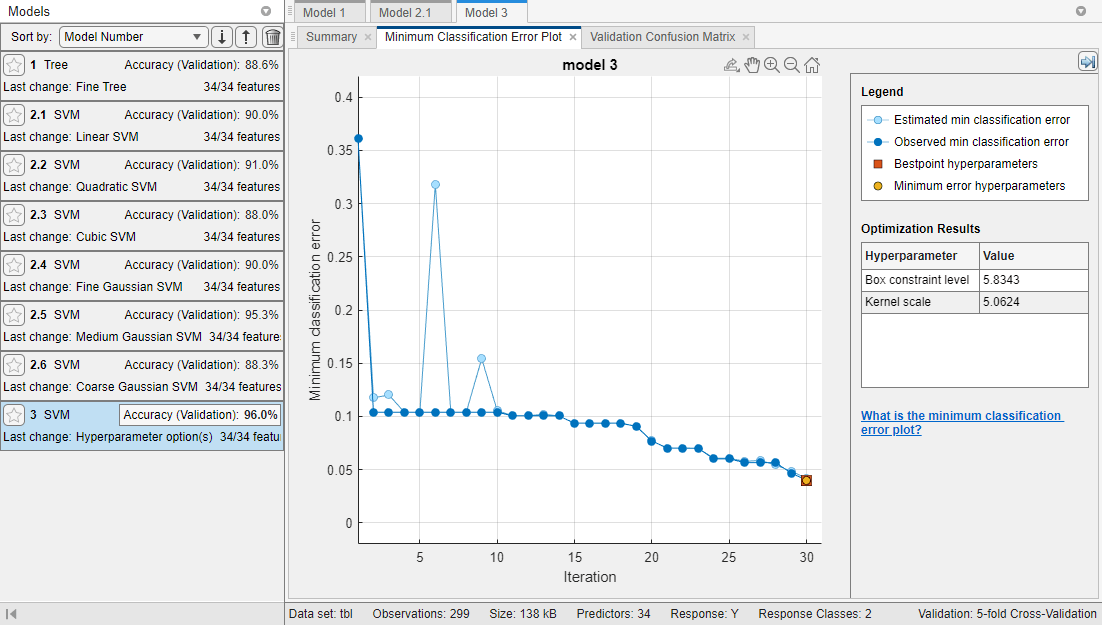

The app displays a Minimum Classification Error Plot as it runs the optimization process. At each iteration, the app tries a different combination of hyperparameter values and updates the plot with the minimum validation classification error observed up to that iteration, indicated in dark blue. When the app completes the optimization process, it selects the set of optimized hyperparameters, indicated by a red square. For more information, see Minimum Classification Error Plot.
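The dark blue curve is a running minimum of the per-iteration validation errors. The short sketch below illustrates that bookkeeping with made-up error values; the numbers are hypothetical, and the sketch does not model how the app chooses the point marked by the red square.

```matlab
% Hypothetical validation errors from eight optimization iterations.
observedError = [0.120 0.094 0.131 0.088 0.102 0.071 0.085 0.077];

% Minimum error observed up to and including each iteration.
minErrorSoFar = cummin(observedError);

% Iteration at which the smallest error was first observed.
[bestError, bestIteration] = min(observedError);

disp(minErrorSoFar)
fprintf('Smallest observed error %.3f at iteration %d\n', bestError, bestIteration);
```

Because the curve can only stay flat or decrease, a long flat stretch at the end suggests that additional iterations are yielding little improvement.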

The app lists the optimized hyperparameters in both the Optimization Results section to the right of the plot and the Optimizable SVM Model Hyperparameters section of the model Summary tab.

Note

In general, the optimization results are not reproducible.

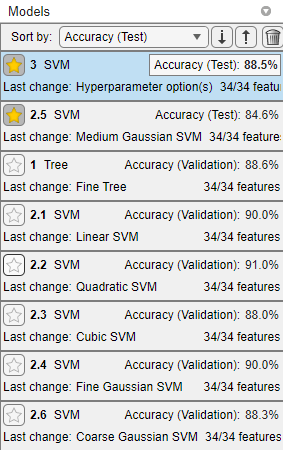

Compare the trained preset SVM models to the trained optimizable model. In the Models pane, the app highlights the highest Accuracy (Validation) by outlining it in a box. In this example, the trained optimizable SVM model outperforms the six preset models.

A trained optimizable model does not always have a higher accuracy than the trained preset models. If a trained optimizable model does not perform well, you can try to get better results by running the optimization for longer. On the Classification Learner tab, in the Options section, click Optimizer. In the dialog box, increase the Iterations value. For example, you can double-click the default value of 30 and enter a value of 60. Then click Save and Apply. The options will be applied to future optimizable models created using the Models gallery.

Because hyperparameter tuning often leads to overfitted models, check the performance of the optimizable SVM model on a test set and compare it to the performance of the best preset SVM model. Use the data you reserved for testing when you imported data into the app.

First, in the Models pane, click the star icons next to the Medium Gaussian SVM model and the Optimizable SVM model.

For each model, select the model in the Models pane. In the Test section of the Classification Learner tab, click Test All and then select Test Selected. The app computes the test set performance of the model trained on the rest of the data, namely the training and validation data.

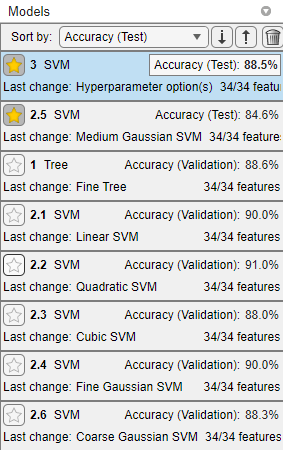

Sort the models based on the test set accuracy. In the Models pane, open the Sort by list and select Accuracy (Test).

In this example, the trained optimizable model still outperforms the trained preset model on the test set data. However, neither model has a test accuracy as high as its validation accuracy.
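The gap between validation and test accuracy can also be checked at the command line. The sketch below trains a Gaussian SVM on the non-test rows and reports both figures. The kernel scale of `sqrt(P)` (an approximation of the Medium Gaussian preset), the seed, and the stratified split are assumptions, so the numbers will not match those shown in the app.

```matlab
load ionosphere
tbl = array2table(X);
tbl.Y = Y;

rng(0)  % assumption: fixed seed for a repeatable split

% Reserve 15 percent of the rows for testing.
holdout  = cvpartition(tbl.Y, 'HoldOut', 0.15);
trainTbl = tbl(training(holdout), :);
testTbl  = tbl(test(holdout), :);

% Train on all non-test rows (training plus validation data).
P = size(X, 2);
mdl = fitcsvm(trainTbl, 'Y', 'KernelFunction', 'gaussian', ...
    'KernelScale', sqrt(P), 'Standardize', true);

% Validation accuracy from 5-fold cross-validation on the non-test rows.
valAcc = 1 - kfoldLoss(crossval(mdl, 'KFold', 5));

% Test accuracy on rows the model has never seen.
testAcc = 1 - loss(mdl, testTbl, 'Y');

fprintf('Validation accuracy: %.1f%%\n', 100*valAcc);
fprintf('Test accuracy:       %.1f%%\n', 100*testAcc);
```

With only about 50 test rows, a single misclassification shifts the test accuracy by roughly two percentage points, so small differences between models should be read with caution.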

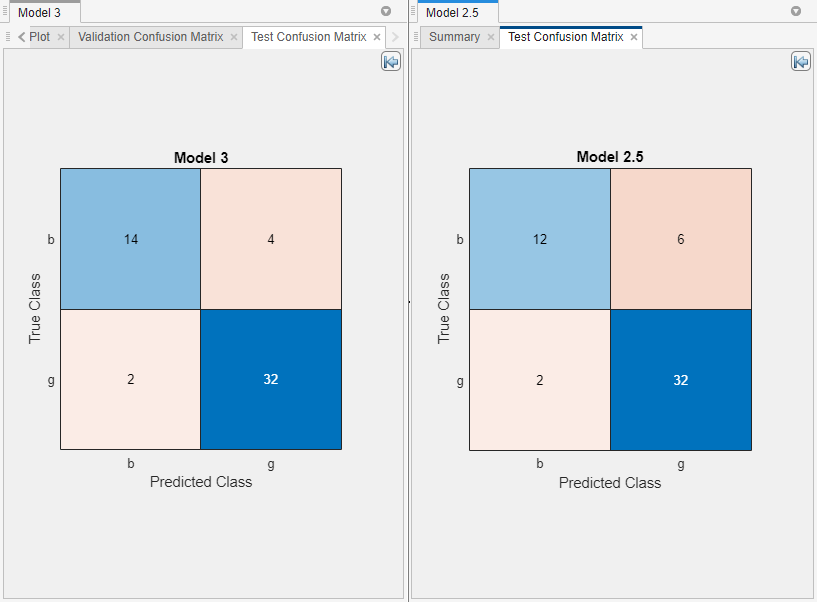

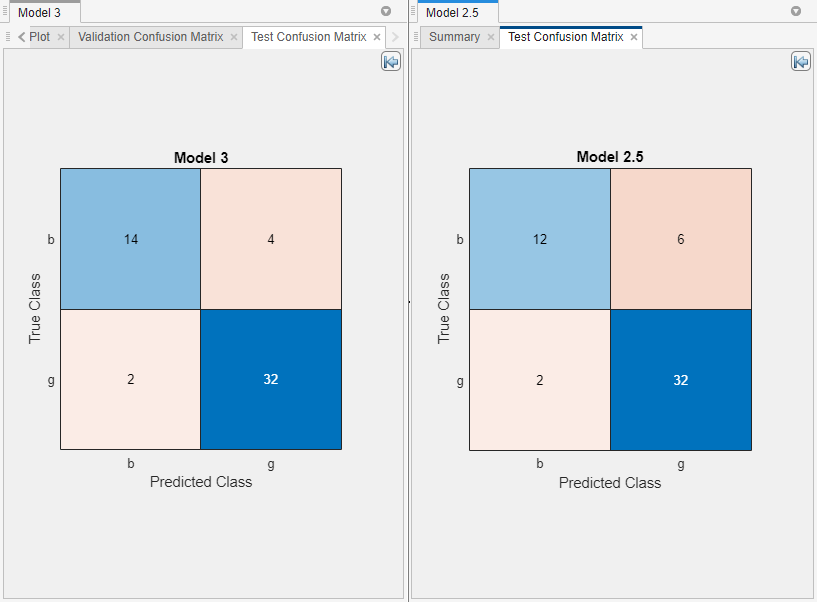

Visually compare the test set performance of the models. For each of the starred models, select the model in the Models pane. On the Classification Learner tab, in the Plots section, click the arrow to open the gallery, and then click Confusion Matrix (Test) in the Test Results group.

Rearrange the layout of the plots to better compare them. First, close the plot and summary tabs for all models except Model 2.5 and Model 3. Then, in the Plots section, click the Layout button and select Compare models. Click the Hide plot options button at the top right of the plots to make more room for the plots.

To return to the original layout, you can click the Layout button and select Single model (Default).
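Outside the app, test set confusion matrices for two models can be drawn with `confusionchart`. In the sketch below, the second model uses hypothetical hyperparameter values (a box constraint of 10 and a kernel scale of 3) in place of optimized ones; those values, the seed, and the preset approximation are assumptions made only for illustration.

```matlab
load ionosphere
tbl = array2table(X);
tbl.Y = Y;

rng(0)  % assumption: fixed seed for a repeatable split
holdout  = cvpartition(tbl.Y, 'HoldOut', 0.15);
trainTbl = tbl(training(holdout), :);
testTbl  = tbl(test(holdout), :);

P = size(X, 2);

% Approximation of the Medium Gaussian preset.
presetMdl = fitcsvm(trainTbl, 'Y', 'KernelFunction', 'gaussian', ...
    'KernelScale', sqrt(P), 'Standardize', true);

% Stand-in for a tuned model; these hyperparameter values are made up.
tunedMdl = fitcsvm(trainTbl, 'Y', 'KernelFunction', 'gaussian', ...
    'KernelScale', 3, 'BoxConstraint', 10, 'Standardize', true);

% One figure per model, each showing counts of true versus predicted classes.
figure
cmPreset = confusionchart(testTbl.Y, predict(presetMdl, testTbl));
cmPreset.Title = 'Medium Gaussian SVM (test)';

figure
cmTuned = confusionchart(testTbl.Y, predict(tunedMdl, testTbl));
cmTuned.Title = 'Tuned Gaussian SVM (test, hypothetical values)';
```

Placing the two figure windows next to each other gives a rough equivalent of the Compare models layout.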

Related Topics

- Hyperparameter Optimization in Classification Learner App

- Train Classification Models in Classification Learner App

- Select Data for Classification or Open Saved App Session

- Choose Classifier Options

- Assess Classifier Performance in Classification Learner

- Export Classification Model to Predict New Data

- Bayesian Optimization Workflow