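Object Detection Using YOLO v3 Deep Learning

This example shows how to train a YOLO v3 object detector.

Deep learning is a powerful machine learning technique that you can use to train robust object detectors. Several techniques for object detection exist, including Faster R-CNN, you only look once (YOLO) v2, and single shot detector (SSD). YOLO v3 improves upon YOLO v2 by adding detection at multiple scales to help detect smaller objects. In addition, the loss function used for training is separated into mean squared error for bounding box regression and binary cross-entropy for object classification, which helps improve detection accuracy.

Note: This example requires the Computer Vision Toolbox™ Model for YOLO v3 Object Detection. You can install the Computer Vision Toolbox Model for YOLO v3 Object Detection from Add-On Explorer. For more information about installing add-ons, see Get and Manage Add-Ons.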

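Download Pretrained Network

Download a pretrained network by using the helper function downloadPretrainedYOLOv3Detector to avoid having to wait for training to complete. If you want to train the network, set the doTraining variable to true.

doTraining = true;
if ~doTraining
    preTrainedDetector = downloadPretrainedYOLOv3Detector();
end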

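Load Data

This example uses a small labeled data set that contains 295 images. Many of these images come from the Caltech Cars 1999 and 2001 data sets, available at the Caltech Computational Vision website, created by Pietro Perona and used with permission. Each image contains one or two labeled instances of a vehicle. A small data set is useful for exploring the YOLO v3 training procedure, but in practice, more labeled images are needed to train a robust network.

Unzip the vehicle images and load the vehicle ground truth data.

unzip vehicleDatasetImages.zip
data = load("vehicleDatasetGroundTruth.mat");
vehicleDataset = data.vehicleDataset;
% Add the full path to the local vehicle data folder.
vehicleDataset.imageFilename = fullfile(pwd, vehicleDataset.imageFilename);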

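Note: When there are multiple classes, the data can also be organized as three columns, where the first column contains the image file names with paths, the second column contains the bounding boxes, and the third column must be a cell vector that contains the label names corresponding to each bounding box. For more information on how to arrange the bounding boxes and labels, see boxLabelDatastore.

All bounding boxes must be in the form [x y width height]. This vector specifies the upper-left corner and the size of the bounding box in pixels.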
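As a small illustration of the [x y width height] form, the sketch below unpacks one box and derives its right and bottom edges. The box values are made up for illustration, and the edge arithmetic assumes continuous coordinates rather than any particular toolbox convention.

```matlab
% One box in [x y width height] form (illustrative values only).
bbox = [20 30 100 50];

% Upper-left corner and size, read directly from the vector.
xLeft = bbox(1);
yTop  = bbox(2);
w     = bbox(3);
h     = bbox(4);

% Right and bottom edges, assuming continuous coordinates.
xRight  = xLeft + w;
yBottom = yTop + h;

corners = [xLeft yTop xRight yBottom];
disp(corners)
```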

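Split the data set into a training set for training the network and a test set for evaluating the network. Use 60% of the data for the training set and the rest for the test set.

rng(0);
shuffledIndices = randperm(height(vehicleDataset));
idx = floor(0.6 * length(shuffledIndices));
trainingDataTbl = vehicleDataset(shuffledIndices(1:idx), :);
testDataTbl = vehicleDataset(shuffledIndices(idx+1:end), :);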
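To see what the split produces for 295 images, the following sketch repeats the same index arithmetic on a stand-in table. The table toyTbl and its id column are hypothetical placeholders for the real vehicle table.

```matlab
% Stand-in table with 295 rows (the real table holds file names and boxes).
numImages = 295;
toyTbl = table((1:numImages)', 'VariableNames', {'id'});

rng(0);                                   % fix the shuffle for repeatability
shuffled = randperm(height(toyTbl));
idx = floor(0.6 * numel(shuffled));       % 177 rows go to the training set

trainTbl = toyTbl(shuffled(1:idx), :);
testTbl  = toyTbl(shuffled(idx+1:end), :);

fprintf('train: %d, test: %d\n', height(trainTbl), height(testTbl));

% The two subsets do not share any rows.
assert(isempty(intersect(trainTbl.id, testTbl.id)));
```

With 295 images this gives 177 training rows and 118 test rows.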

Create an image datastore for loading the images.

imdsTrain = imageDatastore(trainingDataTbl.imageFilename);
imdsTest = imageDatastore(testDataTbl.imageFilename);

Create a datastore for the ground truth bounding boxes.

bldsTrain = boxLabelDatastore(trainingDataTbl(:, 2:end));
bldsTest = boxLabelDatastore(testDataTbl(:, 2:end));

Combine the image and box label datastores.

trainingData = combine(imdsTrain, bldsTrain);
testData = combine(imdsTest, bldsTest);

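Use validateInputData to detect invalid images, bounding boxes, or labels, that is:

Samples with an invalid image format or containing NaNs

Bounding boxes containing zeros, NaNs, Infs, or that are empty

Missing or non-categorical labels

The values of the bounding boxes must be finite, positive, non-fractional, and non-NaN, and each box must lie within the image boundary with a positive height and width. Any invalid samples must either be discarded or fixed for proper training.

validateInputData(trainingData);
validateInputData(testData);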
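The bounding box rules above can be sketched as a single check in base MATLAB. The isValidBox function below is a hypothetical illustration, not the example's validateInputData helper, and it assumes that a box of width w starting at column x covers columns x through x+w-1.

```matlab
% Hypothetical check for one [x y width height] box against an image of
% size [imgHeight imgWidth]. Not the example's validateInputData helper.
isValidBox = @(b, imgSize) ...
    numel(b) == 4 && ...                  % rejects empty boxes
    all(isfinite(b)) && ...               % rejects NaN and Inf
    all(b > 0) && ...                     % rejects zeros and negatives
    all(b == floor(b)) && ...             % rejects fractional values
    b(1) + b(3) - 1 <= imgSize(2) && ...  % right edge inside the image
    b(2) + b(4) - 1 <= imgSize(1);        % bottom edge inside the image

imgSize = [240 320];
boxes = {[10 20 50 40], [0 20 50 40], [10 20 NaN 40], [300 20 50 40], []};
valid = cellfun(@(b) isValidBox(b, imgSize), boxes);
disp(valid)
```

Only the first box passes. The others fail on a zero coordinate, a NaN, an edge past the image width, and an empty vector, respectively.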

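Data Augmentation

Data augmentation is used to improve network accuracy by randomly transforming the original data during training. By using data augmentation, you can add more variety to the training data without actually having to increase the number of labeled training samples.

Use the transform function to apply custom data augmentations to the training data. The augmentData helper function, listed at the end of the example, applies the following augmentations to the input data.

Color jitter augmentation in HSV space

Random horizontal flip

Random scaling by 10 percent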

augmentedTrainingData = transform(trainingData, @augmentData);
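Of the augmentations listed, the horizontal flip is the easiest to check by hand, because the box must move together with the image. The sketch below is not the augmentData helper. It assumes integer pixel indices, where a box of width w at column x covers columns x through x+w-1, and it verifies the closed-form result against a flipped mask.

```matlab
% Toy image size and one box in [x y width height] form (illustrative).
imgWidth = 10;
imgHeight = 6;
bbox = [2 3 4 2];                       % covers columns 2..5, rows 3..4

% Mark the box pixels in a mask, then flip the mask left-right.
mask = false(imgHeight, imgWidth);
mask(bbox(2):bbox(2)+bbox(4)-1, bbox(1):bbox(1)+bbox(3)-1) = true;
flippedMask = fliplr(mask);

% Closed-form flipped box under the same pixel convention.
flippedBox = [imgWidth - bbox(1) - bbox(3) + 2, bbox(2), bbox(3), bbox(4)];

% Recover the box from the flipped mask and compare.
[rows, cols] = find(flippedMask);
fromMask = [min(cols), min(rows), max(cols)-min(cols)+1, max(rows)-min(rows)+1];
assert(isequal(flippedBox, fromMask));
disp(flippedBox)
```

Only the x coordinate changes. The y coordinate, width, and height are unaffected by a horizontal flip.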

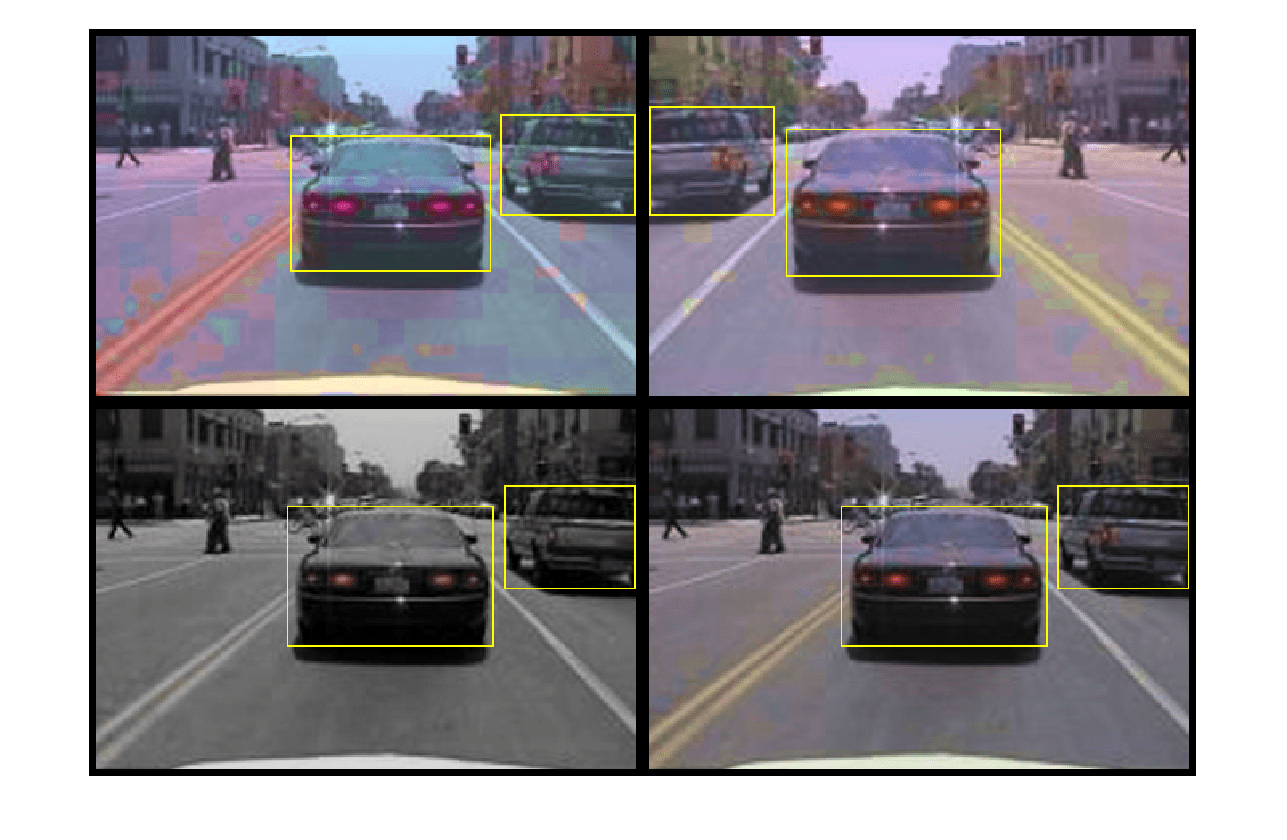

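Read the same image four times and display the augmented training data.

% Visualize the augmented images.
augmentedData = cell(4,1);
for k = 1:4
    data = read(augmentedTrainingData);
    augmentedData{k} = insertShape(data{1,1}, 'Rectangle', data{1,2});
    reset(augmentedTrainingData);
end
figure
montage(augmentedData, 'BorderSize', 10)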

Define YOLO v3 Object Detector

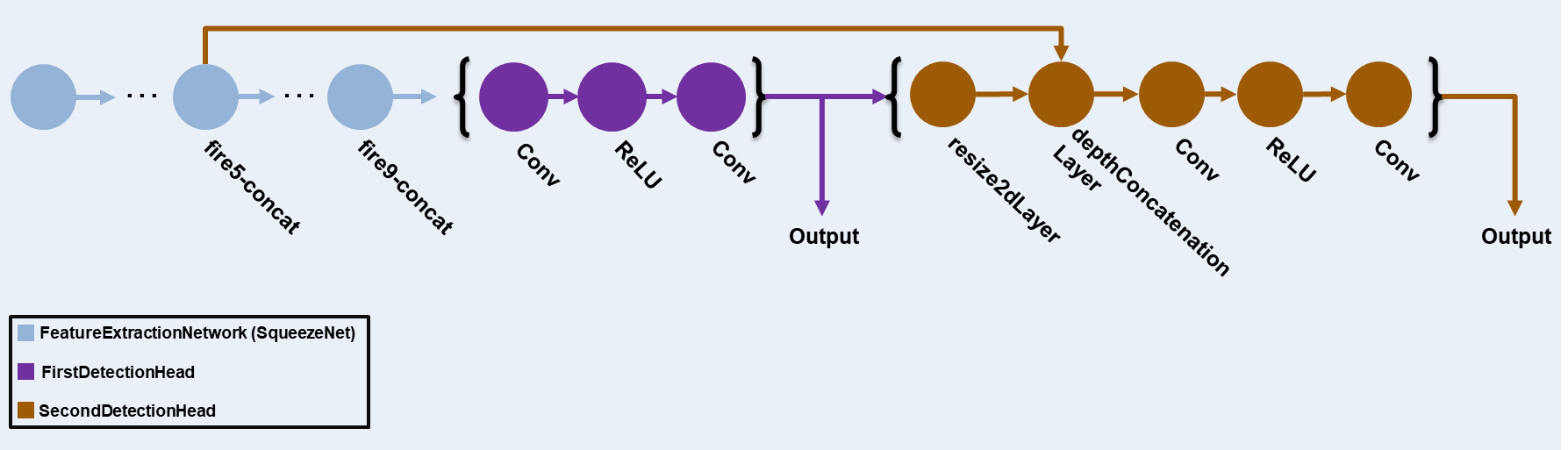

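The YOLO v3 detector in this example is based on SqueezeNet. It uses the feature extraction network in SqueezeNet, with two detection heads added at the end. The second detection head is twice the size of the first detection head, so it is better able to detect small objects. Note that you can specify any number of detection heads of different sizes based on the size of the objects that you want to detect. The YOLO v3 detector uses anchor boxes estimated from the training data to obtain better initial priors for the type of data set and to help the detector learn to predict the boxes accurately. For information about anchor boxes, see Anchor Boxes for Object Detection.

The YOLO v3 network present in the YOLO v3 detector is illustrated in the diagram.

You can use Deep Network Designer (Deep Learning Toolbox) to create the network shown in the diagram.

Specify the network input size. When choosing the network input size, consider the minimum size required to run the network itself, the size of the training images, and the computational cost incurred by processing data at the selected size. When feasible, choose a network input size that is close to the size of the training images and larger than the input size required for the network. To reduce the computational cost of running the example, specify a network input size of [227 227 3].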

networkInputSize = [227 227 3];

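First, use transform to preprocess the training data for computing the anchor boxes, because the training images used in this example are bigger than 227-by-227 and vary in size. Specify the number of anchors as 6 to achieve a good tradeoff between the number of anchors and the mean IoU. Use the estimateAnchorBoxes function to estimate the anchor boxes. For details on estimating anchor boxes, see Estimate Anchor Boxes From Training Data. If you use a pretrained YOLO v3 object detector, you need to specify the anchor boxes calculated on that particular training data set. Note that the estimation process is not deterministic. To prevent the estimated anchor boxes from changing while you tune other hyperparameters, set the random seed before estimation by using rng.

rng(0)
trainingDataForEstimation = transform(trainingData, @(data)preprocessData(data, networkInputSize));
numAnchors = 6;
[anchors, meanIoU] = estimateAnchorBoxes(trainingDataForEstimation, numAnchors)

anchors = 6×2

    42    34
   161   130
    97    93
   143   124
    33    24
    69    66

meanIoU = 0.8423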
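To give a feel for what an IoU between a box and an anchor measures, the sketch below compares sizes only, assuming the box and each anchor share the same corner. This is an illustrative simplification rather than the internals of estimateAnchorBoxes, and boxSize is a made-up value. The result does not depend on which column holds height and which holds width, as long as the box and the anchors use the same order.

```matlab
% Anchor sizes copied from the output above; one hypothetical box size.
anchors = [42 34; 161 130; 97 93; 143 124; 33 24; 69 66];
boxSize = [100 90];

% Size-only IoU: overlap of two rectangles aligned at a common corner.
inter = min(boxSize(1), anchors(:,1)) .* min(boxSize(2), anchors(:,2));
unionArea = prod(boxSize) + prod(anchors, 2) - inter;
iou = inter ./ unionArea;

[bestIoU, bestAnchor] = max(iou);
fprintf('best anchor: [%d %d], IoU = %.4f\n', anchors(bestAnchor,:), bestIoU);
```

A mean IoU close to 1 indicates that most training boxes have an anchor of similar size and shape.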

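Specify anchorBoxes to use in both detection heads. anchorBoxes is an [Mx1] cell array, where M denotes the number of detection heads. Each detection head consists of an [Nx2] matrix of anchors, where N is the number of anchors to use. Select anchorBoxes for each detection head based on the feature map size. Use larger anchors at the lower scale and smaller anchors at the higher scale. To do so, sort the anchors with the larger anchor boxes first, then assign the first three to the first detection head and the next three to the second detection head.

area = anchors(:, 1) .* anchors(:, 2);
[~, idx] = sort(area, "descend");
anchors = anchors(idx, :);
anchorBoxes = {anchors(1:3,:); anchors(4:6,:)};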

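Load the SqueezeNet network pretrained on the ImageNet data set, and then specify the class names. You can also choose to load a different pretrained network trained on the COCO data set, such as tiny-yolov3-coco or darknet53-coco, or on the ImageNet data set, such as MobileNet-v2 or ResNet-18. YOLO v3 performs better and trains faster when you use a pretrained network.

baseNetwork = squeezenet;
classNames = trainingDataTbl.Properties.VariableNames(2:end);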

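Next, create the yolov3ObjectDetector object by adding the detection network source. Choosing the optimal detection network source requires trial and error, and you can use analyzeNetwork to find the names of potential detection network sources within a network. For this example, use the fire9-concat and fire5-concat layers as DetectionNetworkSource.

yolov3Detector = yolov3ObjectDetector(baseNetwork, classNames, anchorBoxes, 'DetectionNetworkSource', {'fire9-concat', 'fire5-concat'});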

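Alternatively, instead of the network created above using SqueezeNet, you can use other pretrained YOLO v3 architectures trained on larger data sets such as MS-COCO to transfer learn the detector on a custom object detection task. Transfer learning can be realized by changing the classNames and anchorBoxes. The transfer learning workflow is recommended if the classes of the custom object detection task are present as one of the classes, or as a subclass of the classes, that the pretrained network was trained on.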

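Preprocess Training Data

Preprocess the augmented training data to prepare for training. The preprocess method of yolov3ObjectDetector applies the following preprocessing operations to the input data.

Resize the images to the network input size while maintaining the aspect ratio.

Scale the image pixels to the range [0 1].

preprocessedTrainingData = transform(augmentedTrainingData, @(data)preprocess(yolov3Detector, data));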
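The arithmetic behind the two preprocessing steps can be sketched in base MATLAB. This is not the preprocess method itself: the image size, box, and pixel values are hypothetical, and any padding or offset handling the toolbox applies is left out.

```matlab
% Hypothetical image size and network input size, both [height width].
imgSize   = [480 640];
inputSize = [227 227];

% Largest uniform scale at which the image still fits inside the input.
scale = min(inputSize ./ imgSize);
newSize = round(imgSize * scale);       % resized size, aspect ratio kept

% Boxes in [x y width height] form scale by the same factor.
bbox = [100 120 200 80];
scaledBox = bbox * scale;

% Pixel values: map uint8 intensities to the range [0 1].
pixels = uint8([0 128 255]);
scaledPixels = single(pixels) / 255;

disp(newSize)
```

Because one scale factor is used for both dimensions, the box keeps its width-to-height ratio after resizing.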

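Read the preprocessed training data.

data = read(preprocessedTrainingData);

Display the image with the bounding boxes.

I = data{1,1};
bbox = data{1,2};
annotatedImage = insertShape(I, 'Rectangle', bbox);
annotatedImage = imresize(annotatedImage, 2);
figure
imshow(annotatedImage)

Reset the datastore.

reset(preprocessedTrainingData);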

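Specify Training Options

Specify these training options.

Set the number of epochs to 80.

Set the mini-batch size to 8. Stable training is possible with higher learning rates when a larger mini-batch size is used, although the size should be set depending on the available memory.

Set the learning rate to 0.001.

Set the warmup period to 1000 iterations. This parameter denotes the number of iterations over which the learning rate ramps up according to the formula learningRate × (iteration/warmupPeriod)^4. It helps stabilize the gradients at higher learning rates.

Set the L2 regularization factor to 0.0005.

Specify the penalty threshold as 0.5. Detections that overlap less than 0.5 with the ground truth are penalized.

Initialize the velocity of the gradient as []. SGDM uses this variable to store the velocity of the gradients.

numEpochs = 80;
miniBatchSize = 8;
learningRate = 0.001;
warmupPeriod = 1000;
l2Regularization = 0.0005;
penaltyThreshold = 0.5;
velocity = [];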
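The warmup ramp can be checked with a few lines of base MATLAB. The sketch below covers only the warmup phase, with the learning rate scaled by the fourth power of iteration/warmupPeriod. The schedule used after warmup is not described in this section, so it is left out. The name warmupLR is hypothetical.

```matlab
learningRate = 0.001;
warmupPeriod = 1000;

% Learning rate during warmup, as a function of the iteration number.
warmupLR = @(iteration) learningRate * (iteration / warmupPeriod).^4;

iterations = [1 2 10 100 1000];
lr = warmupLR(iterations);
fprintf('iteration %4d: learning rate %.4g\n', [iterations; lr]);
```

The values for iterations 1, 2, 10, and 100 (1e-15, 1.6e-14, 1e-11, and 1e-07) agree with the learning rates printed in the training log of this example.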

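Train Model

Train on a GPU, if one is available. Using a GPU requires Parallel Computing Toolbox™ and a CUDA® enabled NVIDIA® GPU. For information about the supported compute capabilities, see GPU Support by Release (Parallel Computing Toolbox).

Use the minibatchqueue function to split the preprocessed training data into batches, together with the supporting function createBatchData, which returns the batched images and the bounding boxes combined with their respective class IDs. For faster extraction of the batch data for training, set dispatchInBackground to true, which ensures that a parallel pool is used.

minibatchqueue automatically detects the availability of a GPU. If you do not have a GPU, or do not want to use one for training, set the OutputEnvironment parameter to "cpu".

if canUseParallelPool
    dispatchInBackground = true;
else
    dispatchInBackground = false;
end
mbqTrain = minibatchqueue(preprocessedTrainingData, 2, ...
    "MiniBatchSize", miniBatchSize, ...
    "MiniBatchFcn", @(images, boxes, labels) createBatchData(images, boxes, labels, classNames), ...
    "MiniBatchFormat", ["SSCB", ""], ...
    "DispatchInBackground", dispatchInBackground, ...
    "OutputCast", ["", "double"]);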
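The "SSCB" format in the call above stands for two spatial dimensions, a channel dimension, and a batch dimension. The sketch below shows that layout with plain arrays. It is an assumption about the shape of the image batch only, not a reproduction of createBatchData, and the random images are placeholders.

```matlab
% Four toy "images", each 227-by-227 with 3 channels (random values).
numInBatch = 4;
images = cell(numInBatch, 1);
for k = 1:numInBatch
    images{k} = rand(227, 227, 3, 'single');
end

% Stack along the fourth dimension: spatial, spatial, channel, batch.
XBatch = cat(4, images{:});
disp(size(XBatch))
```

Stacking along the fourth dimension only works when all images already have the same size, which is one reason the data is preprocessed to the network input size first.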

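Create the training progress plotter by using the supporting function configureTrainingProgressPlotter, so that you can see the plot while training the detector object with a custom training loop.

Finally, specify the custom training loop. For each iteration:

Read data from the minibatchqueue. If it has no more data, reset the minibatchqueue and shuffle.

Evaluate the model gradients using dlfeval and the modelGradients function. The modelGradients function, listed as a supporting function, returns the gradients of the loss with respect to the learnable parameters in net, the corresponding mini-batch loss, and the state of the current batch.

Apply a weight decay factor to the gradients as regularization for more robust training.

Determine the learning rate based on the iteration by using the piecewiseLearningRateWithWarmup supporting function.

Update the detector parameters using the sgdmupdate function.

Update the state parameters of the detector with the moving average.

Display the learning rate, total loss, and individual losses (box loss, object loss, and class loss) for every iteration. You can use these values to interpret how the respective losses change in each iteration. For example, a sudden spike in the box loss after a few iterations implies that there are Inf or NaN values in the predictions.

Update the training progress plot.

You can also terminate training if the loss has saturated for a few epochs.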
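The weight decay and SGDM steps from the list above can be traced on plain arrays. This is a minimal sketch that assumes a momentum of 0.9, which is not stated in the text, and uses toy values for the parameters w and gradients g. In the example itself these steps are carried out on the detector's learnable parameters by dlupdate and sgdmupdate.

```matlab
% Toy parameters and gradients (plain arrays, illustrative values).
w = [0.5; -1.0; 2.0];
g = [0.1;  0.2; -0.3];

l2Regularization = 0.0005;
learningRate = 0.001;
momentum = 0.9;          % assumed value; not stated in the text
velocity = [];           % empty before the first update

% Weight decay: add l2Regularization * w to the gradient.
g = g + l2Regularization * w;

% SGDM step: start from zero velocity if none is stored yet.
if isempty(velocity)
    velocity = zeros(size(w));
end
velocity = momentum * velocity - learningRate * g;
w = w + velocity;
disp(w)
```

On the first step the velocity is just the scaled negative gradient. On later steps the stored velocity carries over a fraction of the previous update.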

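if doTraining
    % Create subplots for the learning rate and mini-batch loss.
    fig = figure;
    [lossPlotter, learningRatePlotter] = configureTrainingProgressPlotter(fig);

    iteration = 0;
    % Custom training loop.
    for epoch = 1:numEpochs
        reset(mbqTrain);
        shuffle(mbqTrain);

        while(hasdata(mbqTrain))
            iteration = iteration + 1;

            [XTrain, YTrain] = next(mbqTrain);

            % Evaluate the model gradients and loss using dlfeval and the
            % modelGradients function.
            [gradients, state, lossInfo] = dlfeval(@modelGradients, yolov3Detector, XTrain, YTrain, penaltyThreshold);

            % Apply L2 regularization.
            gradients = dlupdate(@(g,w) g + l2Regularization*w, gradients, yolov3Detector.Learnables);

            % Determine the current learning rate value.
            currentLR = piecewiseLearningRateWithWarmup(iteration, epoch, learningRate, warmupPeriod, numEpochs);

            % Update the detector learnable parameters using the SGDM optimizer.
            [yolov3Detector.Learnables, velocity] = sgdmupdate(yolov3Detector.Learnables, gradients, velocity, currentLR);

            % Update the state parameters of dlnetwork.
            yolov3Detector.State = state;

            % Display progress.
            displayLossInfo(epoch, iteration, currentLR, lossInfo);

            % Update training plot with new points.
            updatePlots(lossPlotter, learningRatePlotter, iteration, currentLR, lossInfo.totalLoss);
        end
    end
else
    yolov3Detector = preTrainedDetector;
end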

Epoch : 1 | Iteration : 1 | Learning Rate : 1e-15 | Total Loss : 2034.4574 | Box Loss : 1.2703 | Object Loss : 2032.5195 | Class Loss : 0.66761 Epoch : 1 | Iteration : 2 | Learning Rate : 1.6e-14 | Total Loss : 2040.5183 | Box Loss : 5.8543 | Object Loss : 2033.9915 | Class Loss : 0.67264 Epoch : 1 | Iteration : 3 | Learning Rate : 8.1e-14 | Total Loss : 2039.3861 | Box Loss : 5.0515 | Object Loss : 2033.4391 | Class Loss : 0.89554 Epoch : 1 | Iteration : 4 | Learning Rate : 2.56e-13 | Total Loss : 2045.5454 | Box Loss : 2.5824 | Object Loss : 2042.353 | Class Loss : 0.61002 Epoch : 1 | Iteration : 5 | Learning Rate : 6.25e-13 | Total Loss : 2034.3147 | Box Loss : 4.6497 | Object Loss : 2028.9595 | Class Loss : 0.70542 Epoch : 1 | Iteration : 6 | Learning Rate : 1.296e-12 | Total Loss : 2038.448 | Box Loss : 6.7472 | Object Loss : 2031.0361 | Class Loss : 0.66469 Epoch : 1 | Iteration : 7 | Learning Rate : 2.401e-12 | Total Loss : 2043.5757 | Box Loss : 2.474 | Object Loss : 2040.5457 | Class Loss : 0.55609 Epoch : 1 | Iteration : 8 | Learning Rate : 4.096e-12 | Total Loss : 2044.3937 | Box Loss : 6.5438 | Object Loss : 2037.5107 | Class Loss : 0.33914 Epoch : 1 | Iteration : 9 | Learning Rate : 6.561e-12 | Total Loss : 2029.511 | Box Loss : 2.3748 | Object Loss : 2026.4377 | Class Loss : 0.69853 Epoch : 1 | Iteration : 10 | Learning Rate : 1e-11 | Total Loss : 2026.5184 | Box Loss : 2.2127 | Object Loss : 2023.8136 | Class Loss : 0.49224 Epoch : 1 | Iteration : 11 | Learning Rate : 1.4641e-11 | Total Loss : 2052.4109 | Box Loss : 4.4924 | Object Loss : 2047.2883 | Class Loss : 0.63001 Epoch : 1 | Iteration : 12 | Learning Rate : 2.0736e-11 | Total Loss : 2039.0267 | Box Loss : 4.2858 | Object Loss : 2034.1895 | Class Loss : 0.55147 Epoch : 1 | Iteration : 13 | Learning Rate : 2.8561e-11 | Total Loss : 2053.4932 | Box Loss : 2.1127 | Object Loss : 2050.6885 | Class Loss : 0.69185 Epoch : 1 | Iteration : 14 | Learning Rate : 3.8416e-11 | Total Loss : 2040.917 | Box Loss : 2.8612 | Object Loss : 2037.4712 | Class Loss : 0.58456 Epoch : 1 | Iteration : 15 | Learning Rate 
: 5.0625e-11 | Total Loss : 2043.094 | Box Loss : 2.8008 | Object Loss : 2039.9056 | Class Loss : 0.3876 Epoch : 1 | Iteration : 16 | Learning Rate : 6.5536e-11 | Total Loss : 2031.8059 | Box Loss : 3.2756 | Object Loss : 2028.0403 | Class Loss : 0.49002 Epoch : 1 | Iteration : 17 | Learning Rate : 8.3521e-11 | Total Loss : 2044.72 | Box Loss : 1.6522 | Object Loss : 2042.4524 | Class Loss : 0.61532 Epoch : 1 | Iteration : 18 | Learning Rate : 1.0498e-10 | Total Loss : 2050.0471 | Box Loss : 4.1119 | Object Loss : 2045.4639 | Class Loss : 0.47138 Epoch : 1 | Iteration : 19 | Learning Rate : 1.3032e-10 | Total Loss : 2033.7067 | Box Loss : 2.8427 | Object Loss : 2030.1394 | Class Loss : 0.72465 Epoch : 1 | Iteration : 20 | Learning Rate : 1.6e-10 | Total Loss : 2036.5347 | Box Loss : 3.1793 | Object Loss : 2032.9583 | Class Loss : 0.39718 Epoch : 1 | Iteration : 21 | Learning Rate : 1.9448e-10 | Total Loss : 2029.7346 | Box Loss : 3.6625 | Object Loss : 2025.3025 | Class Loss : 0.76967 Epoch : 1 | Iteration : 22 | Learning Rate : 2.3426e-10 | Total Loss : 2026.9553 | Box Loss : 2.9675 | Object Loss : 2023.5861 | Class Loss : 0.4017 Epoch : 1 | Iteration : 23 | Learning Rate : 2.7984e-10 | Total Loss : 2019.0176 | Box Loss : 0.84919 | Object Loss : 2017.3557 | Class Loss : 0.81262 Epoch : 2 | Iteration : 24 | Learning Rate : 3.3178e-10 | Total Loss : 2033.5848 | Box Loss : 3.0358 | Object Loss : 2029.8921 | Class Loss : 0.65696 Epoch : 2 | Iteration : 25 | Learning Rate : 3.9063e-10 | Total Loss : 2045.8374 | Box Loss : 3.4959 | Object Loss : 2041.6012 | Class Loss : 0.74038 Epoch : 2 | Iteration : 26 | Learning Rate : 4.5698e-10 | Total Loss : 2036.3354 | Box Loss : 2.1746 | Object Loss : 2033.5701 | Class Loss : 0.59084 Epoch : 2 | Iteration : 27 | Learning Rate : 5.3144e-10 | Total Loss : 2036.7156 | Box Loss : 3.0053 | Object Loss : 2032.9835 | Class Loss : 0.72679 Epoch : 2 | Iteration : 28 | Learning Rate : 6.1466e-10 | Total Loss : 2030.1866 | Box Loss : 
3.4409 | Object Loss : 2026.0948 | Class Loss : 0.65091 Epoch : 2 | Iteration : 29 | Learning Rate : 7.0728e-10 | Total Loss : 2026.7745 | Box Loss : 1.0128 | Object Loss : 2025.1301 | Class Loss : 0.63154 Epoch : 2 | Iteration : 30 | Learning Rate : 8.1e-10 | Total Loss : 2039.3251 | Box Loss : 3.1312 | Object Loss : 2035.4562 | Class Loss : 0.73767 Epoch : 2 | Iteration : 31 | Learning Rate : 9.2352e-10 | Total Loss : 2034.1394 | Box Loss : 4.8098 | Object Loss : 2028.5234 | Class Loss : 0.8062 Epoch : 2 | Iteration : 32 | Learning Rate : 1.0486e-09 | Total Loss : 2035.0363 | Box Loss : 4.7082 | Object Loss : 2029.7371 | Class Loss : 0.59096 Epoch : 2 | Iteration : 33 | Learning Rate : 1.1859e-09 | Total Loss : 2053.9387 | Box Loss : 3.5839 | Object Loss : 2049.7886 | Class Loss : 0.56609 Epoch : 2 | Iteration : 34 | Learning Rate : 1.3363e-09 | Total Loss : 2041.5179 | Box Loss : 2.808 | Object Loss : 2038.3765 | Class Loss : 0.33344 Epoch : 2 | Iteration : 35 | Learning Rate : 1.5006e-09 | Total Loss : 2035.2411 | Box Loss : 2.7223 | Object Loss : 2032.0865 | Class Loss : 0.43231 Epoch : 2 | Iteration : 36 | Learning Rate : 1.6796e-09 | Total Loss : 2050.2747 | Box Loss : 5.3999 | Object Loss : 2044.2727 | Class Loss : 0.60193 Epoch : 2 | Iteration : 37 | Learning Rate : 1.8742e-09 | Total Loss : 2043.5969 | Box Loss : 5.5765 | Object Loss : 2037.3926 | Class Loss : 0.6279 Epoch : 2 | Iteration : 38 | Learning Rate : 2.0851e-09 | Total Loss : 2038.2933 | Box Loss : 3.4637 | Object Loss : 2034.1479 | Class Loss : 0.6816 Epoch : 2 | Iteration : 39 | Learning Rate : 2.3134e-09 | Total Loss : 2038.1877 | Box Loss : 2.5275 | Object Loss : 2035.101 | Class Loss : 0.55933 Epoch : 2 | Iteration : 40 | Learning Rate : 2.56e-09 | Total Loss : 2036.8016 | Box Loss : 2.0805 | Object Loss : 2034.0248 | Class Loss : 0.69634 Epoch : 2 | Iteration : 41 | Learning Rate : 2.8258e-09 | Total Loss : 2032.4956 | Box Loss : 2.0393 | Object Loss : 2030.0411 | Class Loss : 0.41518 
Epoch : 2 | Iteration : 42 | Learning Rate : 3.1117e-09 | Total Loss : 2036.3785 | Box Loss : 3.8184 | Object Loss : 2032.1405 | Class Loss : 0.41965 Epoch : 2 | Iteration : 43 | Learning Rate : 3.4188e-09 | Total Loss : 2037.4792 | Box Loss : 2.2771 | Object Loss : 2034.524 | Class Loss : 0.67814 Epoch : 2 | Iteration : 44 | Learning Rate : 3.7481e-09 | Total Loss : 2036.874 | Box Loss : 2.9961 | Object Loss : 2033.1942 | Class Loss : 0.68376 Epoch : 2 | Iteration : 45 | Learning Rate : 4.1006e-09 | Total Loss : 2042.7714 | Box Loss : 5.42 | Object Loss : 2036.751 | Class Loss : 0.60037 Epoch : 2 | Iteration : 46 | Learning Rate : 4.4775e-09 | Total Loss : 2040.7478 | Box Loss : 15.8955 | Object Loss : 2024.0149 | Class Loss : 0.83739 Epoch : 3 | Iteration : 47 | Learning Rate : 4.8797e-09 | Total Loss : 2029.9019 | Box Loss : 5.3115 | Object Loss : 2023.8076 | Class Loss : 0.78269 Epoch : 3 | Iteration : 48 | Learning Rate : 5.3084e-09 | Total Loss : 2037.0276 | Box Loss : 3.8952 | Object Loss : 2032.524 | Class Loss : 0.60828 Epoch : 3 | Iteration : 49 | Learning Rate : 5.7648e-09 | Total Loss : 2024.6521 | Box Loss : 2.2851 | Object Loss : 2021.6416 | Class Loss : 0.72537 Epoch : 3 | Iteration : 50 | Learning Rate : 6.25e-09 | Total Loss : 2030.0686 | Box Loss : 3.7038 | Object Loss : 2025.936 | Class Loss : 0.42867 Epoch : 3 | Iteration : 51 | Learning Rate : 6.7652e-09 | Total Loss : 2033.3076 | Box Loss : 1.6001 | Object Loss : 2031.0587 | Class Loss : 0.64882 Epoch : 3 | Iteration : 52 | Learning Rate : 7.3116e-09 | Total Loss : 2031.2271 | Box Loss : 2.8679 | Object Loss : 2027.5931 | Class Loss : 0.76597 Epoch : 3 | Iteration : 53 | Learning Rate : 7.8905e-09 | Total Loss : 2026.3621 | Box Loss : 1.5324 | Object Loss : 2024.1782 | Class Loss : 0.65154 Epoch : 3 | Iteration : 54 | Learning Rate : 8.5031e-09 | Total Loss : 2031.1776 | Box Loss : 3.7035 | Object Loss : 2026.9082 | Class Loss : 0.56593 Epoch : 3 | Iteration : 55 | Learning Rate : 9.1506e-09 | 
Total Loss : 2034.3107 | Box Loss : 2.6567 | Object Loss : 2030.9513 | Class Loss : 0.70268 Epoch : 3 | Iteration : 56 | Learning Rate : 9.8345e-09 | Total Loss : 2021.8693 | Box Loss : 3.34 | Object Loss : 2017.9197 | Class Loss : 0.60955 Epoch : 3 | Iteration : 57 | Learning Rate : 1.0556e-08 | Total Loss : 2024.7795 | Box Loss : 5.4342 | Object Loss : 2018.5682 | Class Loss : 0.77708 Epoch : 3 | Iteration : 58 | Learning Rate : 1.1316e-08 | Total Loss : 2021.3302 | Box Loss : 2.4311 | Object Loss : 2018.3871 | Class Loss : 0.5121 Epoch : 3 | Iteration : 59 | Learning Rate : 1.2117e-08 | Total Loss : 2028.3229 | Box Loss : 3.0344 | Object Loss : 2024.6876 | Class Loss : 0.60097 Epoch : 3 | Iteration : 60 | Learning Rate : 1.296e-08 | Total Loss : 2029.2771 | Box Loss : 3.7049 | Object Loss : 2025.1646 | Class Loss : 0.40764 Epoch : 3 | Iteration : 61 | Learning Rate : 1.3846e-08 | Total Loss : 2023.4316 | Box Loss : 2.836 | Object Loss : 2020.1001 | Class Loss : 0.49552 Epoch : 3 | Iteration : 62 | Learning Rate : 1.4776e-08 | Total Loss : 2018.3862 | Box Loss : 3.4428 | Object Loss : 2014.299 | Class Loss : 0.64438 Epoch : 3 | Iteration : 63 | Learning Rate : 1.5753e-08 | Total Loss : 1997.1346 | Box Loss : 5.3684 | Object Loss : 1991.0413 | Class Loss : 0.72492 Epoch : 3 | Iteration : 64 | Learning Rate : 1.6777e-08 | Total Loss : 2008.8459 | Box Loss : 3.0047 | Object Loss : 2005.2698 | Class Loss : 0.57158 Epoch : 3 | Iteration : 65 | Learning Rate : 1.7851e-08 | Total Loss : 2003.0519 | Box Loss : 3.0569 | Object Loss : 1999.3745 | Class Loss : 0.62048 Epoch : 3 | Iteration : 66 | Learning Rate : 1.8975e-08 | Total Loss : 1996.9225 | Box Loss : 5.2107 | Object Loss : 1991.1674 | Class Loss : 0.54448 Epoch : 3 | Iteration : 67 | Learning Rate : 2.0151e-08 | Total Loss : 1993.1699 | Box Loss : 2.067 | Object Loss : 1990.5317 | Class Loss : 0.5712 Epoch : 3 | Iteration : 68 | Learning Rate : 2.1381e-08 | Total Loss : 1996.2117 | Box Loss : 1.808 | Object Loss : 
1993.8293 | Class Loss : 0.57438 Epoch : 3 | Iteration : 69 | Learning Rate : 2.2667e-08 | Total Loss : 1960.97 | Box Loss : 3.5167 | Object Loss : 1957.0793 | Class Loss : 0.37408 Epoch : 4 | Iteration : 70 | Learning Rate : 2.401e-08 | Total Loss : 1987.9667 | Box Loss : 3.758 | Object Loss : 1983.6907 | Class Loss : 0.518 Epoch : 4 | Iteration : 71 | Learning Rate : 2.5412e-08 | Total Loss : 1985.2295 | Box Loss : 1.5088 | Object Loss : 1983.1033 | Class Loss : 0.6174 Epoch : 4 | Iteration : 72 | Learning Rate : 2.6874e-08 | Total Loss : 1986.2605 | Box Loss : 5.0329 | Object Loss : 1980.4592 | Class Loss : 0.76839 Epoch : 4 | Iteration : 73 | Learning Rate : 2.8398e-08 | Total Loss : 1983.7778 | Box Loss : 2.5958 | Object Loss : 1980.4906 | Class Loss : 0.69158 Epoch : 4 | Iteration : 74 | Learning Rate : 2.9987e-08 | Total Loss : 1983.8669 | Box Loss : 3.2597 | Object Loss : 1980.2219 | Class Loss : 0.38521 Epoch : 4 | Iteration : 75 | Learning Rate : 3.1641e-08 | Total Loss : 1965.298 | Box Loss : 2.8892 | Object Loss : 1962.0405 | Class Loss : 0.3683 Epoch : 4 | Iteration : 76 | Learning Rate : 3.3362e-08 | Total Loss : 1975.8003 | Box Loss : 4.5828 | Object Loss : 1970.3978 | Class Loss : 0.81973 Epoch : 4 | Iteration : 77 | Learning Rate : 3.5153e-08 | Total Loss : 1956.8281 | Box Loss : 3.4007 | Object Loss : 1952.7102 | Class Loss : 0.71722 Epoch : 4 | Iteration : 78 | Learning Rate : 3.7015e-08 | Total Loss : 1947.5746 | Box Loss : 3.6963 | Object Loss : 1943.3844 | Class Loss : 0.49393 Epoch : 4 | Iteration : 79 | Learning Rate : 3.895e-08 | Total Loss : 1945.3359 | Box Loss : 2.7079 | Object Loss : 1941.9802 | Class Loss : 0.64784 Epoch : 4 | Iteration : 80 | Learning Rate : 4.096e-08 | Total Loss : 1936.6976 | Box Loss : 3.5695 | Object Loss : 1932.4708 | Class Loss : 0.65735 Epoch : 4 | Iteration : 81 | Learning Rate : 4.3047e-08 | Total Loss : 1940.713 | Box Loss : 3.8212 | Object Loss : 1936.5292 | Class Loss : 0.36271 Epoch : 4 | Iteration : 82 | 
Learning Rate : 4.5212e-08 | Total Loss : 1920.8802 | Box Loss : 3.2441 | Object Loss : 1917.2192 | Class Loss : 0.41683 Epoch : 4 | Iteration : 83 | Learning Rate : 4.7458e-08 | Total Loss : 1911.2277 | Box Loss : 3.8905 | Object Loss : 1906.6838 | Class Loss : 0.65335 Epoch : 4 | Iteration : 84 | Learning Rate : 4.9787e-08 | Total Loss : 1911.212 | Box Loss : 3.6702 | Object Loss : 1906.9504 | Class Loss : 0.59146 Epoch : 4 | Iteration : 85 | Learning Rate : 5.2201e-08 | Total Loss : 1890.0442 | Box Loss : 2.015 | Object Loss : 1887.3788 | Class Loss : 0.65035 Epoch : 4 | Iteration : 86 | Learning Rate : 5.4701e-08 | Total Loss : 1894.0687 | Box Loss : 2.3115 | Object Loss : 1891.0864 | Class Loss : 0.67088 Epoch : 4 | Iteration : 87 | Learning Rate : 5.729e-08 | Total Loss : 1882.7527 | Box Loss : 3.0078 | Object Loss : 1878.8282 | Class Loss : 0.91661 Epoch : 4 | Iteration : 88 | Learning Rate : 5.997e-08 | Total Loss : 1878.7745 | Box Loss : 4.5958 | Object Loss : 1873.644 | Class Loss : 0.53463 Epoch : 4 | Iteration : 89 | Learning Rate : 6.2742e-08 | Total Loss : 1874.4493 | Box Loss : 3.7955 | Object Loss : 1870.1482 | Class Loss : 0.50562 Epoch : 4 | Iteration : 90 | Learning Rate : 6.561e-08 | Total Loss : 1849.9515 | Box Loss : 3.1729 | Object Loss : 1846.0564 | Class Loss : 0.72234 Epoch : 4 | Iteration : 91 | Learning Rate : 6.8575e-08 | Total Loss : 1832.1216 | Box Loss : 1.972 | Object Loss : 1829.5789 | Class Loss : 0.57063 Epoch : 4 | Iteration : 92 | Learning Rate : 7.1639e-08 | Total Loss : 1825.4591 | Box Loss : 16.0346 | Object Loss : 1808.7312 | Class Loss : 0.69319 Epoch : 5 | Iteration : 93 | Learning Rate : 7.4805e-08 | Total Loss : 1822.5862 | Box Loss : 3.3594 | Object Loss : 1818.7134 | Class Loss : 0.51327 Epoch : 5 | Iteration : 94 | Learning Rate : 7.8075e-08 | Total Loss : 1806.3173 | Box Loss : 4.9844 | Object Loss : 1800.8016 | Class Loss : 0.53124 Epoch : 5 | Iteration : 95 | Learning Rate : 8.1451e-08 | Total Loss : 1791.694 | 
Box Loss : 2.6037 | Object Loss : 1788.7158 | Class Loss : 0.37446 Epoch : 5 | Iteration : 96 | Learning Rate : 8.4935e-08 | Total Loss : 1788.7694 | Box Loss : 3.4347 | Object Loss : 1784.615 | Class Loss : 0.71978 Epoch : 5 | Iteration : 97 | Learning Rate : 8.8529e-08 | Total Loss : 1765.125 | Box Loss : 2.1152 | Object Loss : 1762.3104 | Class Loss : 0.69946 Epoch : 5 | Iteration : 98 | Learning Rate : 9.2237e-08 | Total Loss : 1750.5256 | Box Loss : 2.0819 | Object Loss : 1747.7401 | Class Loss : 0.70358 Epoch : 5 | Iteration : 99 | Learning Rate : 9.606e-08 | Total Loss : 1743.0345 | Box Loss : 1.6052 | Object Loss : 1741.0526 | Class Loss : 0.37671 Epoch : 5 | Iteration : 100 | Learning Rate : 1e-07 | Total Loss : 1731.6486 | Box Loss : 2.262 | Object Loss : 1728.8052 | Class Loss : 0.5813 Epoch : 5 | Iteration : 101 | Learning Rate : 1.0406e-07 | Total Loss : 1721.0155 | Box Loss : 2.7817 | Object Loss : 1717.8625 | Class Loss : 0.37132 Epoch : 5 | Iteration : 102 | Learning Rate : 1.0824e-07 | Total Loss : 1703.8527 | Box Loss : 1.5948 | Object Loss : 1701.7136 | Class Loss : 0.54421 Epoch : 5 | Iteration : 103 | Learning Rate : 1.1255e-07 | Total Loss : 1681.6267 | Box Loss : 3.4908 | Object Loss : 1677.3575 | Class Loss : 0.77836 Epoch : 5 | Iteration : 104 | Learning Rate : 1.1699e-07 | Total Loss : 1663.0557 | Box Loss : 6.277 | Object Loss : 1656.0092 | Class Loss : 0.76947 Epoch : 5 | Iteration : 105 | Learning Rate : 1.2155e-07 | Total Loss : 1651.8859 | Box Loss : 3.8958 | Object Loss : 1647.5018 | Class Loss : 0.48832 Epoch : 5 | Iteration : 106 | Learning Rate : 1.2625e-07 | Total Loss : 1637.6196 | Box Loss : 3.4216 | Object Loss : 1633.6617 | Class Loss : 0.53624 Epoch : 5 | Iteration : 107 | Learning Rate : 1.3108e-07 | Total Loss : 1611.4268 | Box Loss : 2.0408 | Object Loss : 1608.6917 | Class Loss : 0.69436 Epoch : 5 | Iteration : 108 | Learning Rate : 1.3605e-07 | Total Loss : 1588.9783 | Box Loss : 4.4034 | Object Loss : 1584.0145 | Class 
Loss : 0.56031 Epoch : 5 | Iteration : 109 | Learning Rate : 1.4116e-07 | Total Loss : 1577.9961 | Box Loss : 3.5424 | Object Loss : 1573.731 | Class Loss : 0.7226 Epoch : 5 | Iteration : 110 | Learning Rate : 1.4641e-07 | Total Loss : 1554.6068 | Box Loss : 2.9358 | Object Loss : 1551.1073 | Class Loss : 0.56367 Epoch : 5 | Iteration : 111 | Learning Rate : 1.5181e-07 | Total Loss : 1545.191 | Box Loss : 2.9433 | Object Loss : 1541.4181 | Class Loss : 0.82967 Epoch : 5 | Iteration : 112 | Learning Rate : 1.5735e-07 | Total Loss : 1516.0305 | Box Loss : 2.7912 | Object Loss : 1512.7938 | Class Loss : 0.44542 Epoch : 5 | Iteration : 113 | Learning Rate : 1.6305e-07 | Total Loss : 1504.9351 | Box Loss : 6.1784 | Object Loss : 1498.2 | Class Loss : 0.55669 Epoch : 5 | Iteration : 114 | Learning Rate : 1.689e-07 | Total Loss : 1481.5167 | Box Loss : 3.3483 | Object Loss : 1477.4575 | Class Loss : 0.71095 Epoch : 5 | Iteration : 115 | Learning Rate : 1.749e-07 | Total Loss : 1446.4066 | Box Loss : 4.169 | Object Loss : 1441.9678 | Class Loss : 0.26987 Epoch : 6 | Iteration : 116 | Learning Rate : 1.8106e-07 | Total Loss : 1447.542 | Box Loss : 4.7388 | Object Loss : 1442.2832 | Class Loss : 0.52001 Epoch : 6 | Iteration : 117 | Learning Rate : 1.8739e-07 | Total Loss : 1410.4972 | Box Loss : 4.8979 | Object Loss : 1404.7754 | Class Loss : 0.8239 Epoch : 6 | Iteration : 118 | Learning Rate : 1.9388e-07 | Total Loss : 1401.3512 | Box Loss : 2.5921 | Object Loss : 1398.2458 | Class Loss : 0.51321 Epoch : 6 | Iteration : 119 | Learning Rate : 2.0053e-07 | Total Loss : 1370.1278 | Box Loss : 1.8787 | Object Loss : 1367.7649 | Class Loss : 0.48423 Epoch : 6 | Iteration : 120 | Learning Rate : 2.0736e-07 | Total Loss : 1352.739 | Box Loss : 4.8197 | Object Loss : 1347.353 | Class Loss : 0.56634 Epoch : 6 | Iteration : 121 | Learning Rate : 2.1436e-07 | Total Loss : 1333.5609 | Box Loss : 2.1018 | Object Loss : 1330.7599 | Class Loss : 0.69922 Epoch : 6 | Iteration : 122 | 
Learning Rate : 2.2153e-07 | Total Loss : 1299.8704 | Box Loss : 2.1882 | Object Loss : 1297.166 | Class Loss : 0.51607 Epoch : 6 | Iteration : 123 | Learning Rate : 2.2889e-07 | Total Loss : 1282.7609 | Box Loss : 3.0205 | Object Loss : 1279.2159 | Class Loss : 0.52443 Epoch : 6 | Iteration : 124 | Learning Rate : 2.3642e-07 | Total Loss : 1272.4924 | Box Loss : 2.5574 | Object Loss : 1269.4204 | Class Loss : 0.51467 Epoch : 6 | Iteration : 125 | Learning Rate : 2.4414e-07 | Total Loss : 1250.6395 | Box Loss : 4.9803 | Object Loss : 1244.9894 | Class Loss : 0.66982 Epoch : 6 | Iteration : 126 | Learning Rate : 2.5205e-07 | Total Loss : 1215.4714 | Box Loss : 4.1274 | Object Loss : 1210.7234 | Class Loss : 0.62054 Epoch : 6 | Iteration : 127 | Learning Rate : 2.6014e-07 | Total Loss : 1198.6125 | Box Loss : 3.395 | Object Loss : 1194.76 | Class Loss : 0.45757 Epoch : 6 | Iteration : 128 | Learning Rate : 2.6844e-07 | Total Loss : 1166.0612 | Box Loss : 1.8501 | Object Loss : 1163.7635 | Class Loss : 0.44757 Epoch : 6 | Iteration : 129 | Learning Rate : 2.7692e-07 | Total Loss : 1145.3256 | Box Loss : 5.843 | Object Loss : 1138.7683 | Class Loss : 0.71427 Epoch : 6 | Iteration : 130 | Learning Rate : 2.8561e-07 | Total Loss : 1121.3236 | Box Loss : 1.5061 | Object Loss : 1119.2224 | Class Loss : 0.59509 Epoch : 6 | Iteration : 131 | Learning Rate : 2.945e-07 | Total Loss : 1107.7411 | Box Loss : 1.7676 | Object Loss : 1105.3784 | Class Loss : 0.59508 Epoch : 6 | Iteration : 132 | Learning Rate : 3.036e-07 | Total Loss : 1084.3871 | Box Loss : 3.8119 | Object Loss : 1079.9816 | Class Loss : 0.59367 Epoch : 6 | Iteration : 133 | Learning Rate : 3.129e-07 | Total Loss : 1061.6428 | Box Loss : 2.3155 | Object Loss : 1058.6475 | Class Loss : 0.67995 Epoch : 6 | Iteration : 134 | Learning Rate : 3.2242e-07 | Total Loss : 1032.1377 | Box Loss : 2.9454 | Object Loss : 1028.4749 | Class Loss : 0.71751 Epoch : 6 | Iteration : 135 | Learning Rate : 3.3215e-07 | Total Loss : 
1010.8308 | Box Loss : 5.0547 | Object Loss : 1005.1586 | Class Loss : 0.61748 Epoch : 6 | Iteration : 136 | Learning Rate : 3.421e-07 | Total Loss : 980.6289 | Box Loss : 2.3295 | Object Loss : 977.5277 | Class Loss : 0.77165 Epoch : 6 | Iteration : 137 | Learning Rate : 3.5228e-07 | Total Loss : 954.4264 | Box Loss : 1.2685 | Object Loss : 952.7402 | Class Loss : 0.41771 Epoch : 6 | Iteration : 138 | Learning Rate : 3.6267e-07 | Total Loss : 950.8206 | Box Loss : 5.479 | Object Loss : 944.7975 | Class Loss : 0.54406 Epoch : 7 | Iteration : 139 | Learning Rate : 3.733e-07 | Total Loss : 920.5997 | Box Loss : 3.7647 | Object Loss : 916.1279 | Class Loss : 0.70721 Epoch : 7 | Iteration : 140 | Learning Rate : 3.8416e-07 | Total Loss : 886.7099 | Box Loss : 2.1462 | Object Loss : 884.063 | Class Loss : 0.50076 Epoch : 7 | Iteration : 141 | Learning Rate : 3.9525e-07 | Total Loss : 874.2281 | Box Loss : 3.4884 | Object Loss : 870.3276 | Class Loss : 0.41216 Epoch : 7 | Iteration : 142 | Learning Rate : 4.0659e-07 | Total Loss : 856.9265 | Box Loss : 2.3927 | Object Loss : 854.1731 | Class Loss : 0.3606 Epoch : 7 | Iteration : 143 | Learning Rate : 4.1816e-07 | Total Loss : 830.4821 | Box Loss : 2.4321 | Object Loss : 827.4305 | Class Loss : 0.61948 Epoch : 7 | Iteration : 144 | Learning Rate : 4.2998e-07 | Total Loss : 803.5051 | Box Loss : 4.0953 | Object Loss : 798.4871 | Class Loss : 0.92264 Epoch : 7 | Iteration : 145 | Learning Rate : 4.4205e-07 | Total Loss : 787.3389 | Box Loss : 2.6817 | Object Loss : 784.1239 | Class Loss : 0.53327 Epoch : 7 | Iteration : 146 | Learning Rate : 4.5437e-07 | Total Loss : 766.8655 | Box Loss : 4.5345 | Object Loss : 761.6417 | Class Loss : 0.68924 Epoch : 7 | Iteration : 147 | Learning Rate : 4.6695e-07 | Total Loss : 747.8558 | Box Loss : 1.6245 | Object Loss : 745.6534 | Class Loss : 0.57786 Epoch : 7 | Iteration : 148 | Learning Rate : 4.7979e-07 | Total Loss : 724.8915 | Box Loss : 4.2896 | Object Loss : 720.0224 | Class 
Loss : 0.57949 Epoch : 7 | Iteration : 149 | Learning Rate : 4.9288e-07 | Total Loss : 707.7861 | Box Loss : 3.0635 | Object Loss : 704.1108 | Class Loss : 0.61181 Epoch : 7 | Iteration : 150 | Learning Rate : 5.0625e-07 | Total Loss : 682.8471 | Box Loss : 1.9388 | Object Loss : 680.3842 | Class Loss : 0.5242 Epoch : 7 | Iteration : 151 | Learning Rate : 5.1989e-07 | Total Loss : 661.091 | Box Loss : 2.2959 | Object Loss : 658.3548 | Class Loss : 0.44027 Epoch : 7 | Iteration : 152 | Learning Rate : 5.3379e-07 | Total Loss : 649.2206 | Box Loss : 2.3278 | Object Loss : 646.3533 | Class Loss : 0.53957 Epoch : 7 | Iteration : 153 | Learning Rate : 5.4798e-07 | Total Loss : 628.3187 | Box Loss : 2.3732 | Object Loss : 625.4421 | Class Loss : 0.50344 Epoch : 7 | Iteration : 154 | Learning Rate : 5.6245e-07 | Total Loss : 611.2161 | Box Loss : 1.4037 | Object Loss : 609.1978 | Class Loss : 0.61459 Epoch : 7 | Iteration : 155 | Learning Rate : 5.772e-07 | Total Loss : 592.9767 | Box Loss : 2.4407 | Object Loss : 589.9943 | Class Loss : 0.54181 Epoch : 7 | Iteration : 156 | Learning Rate : 5.9224e-07 | Total Loss : 575.8509 | Box Loss : 4.8618 | Object Loss : 570.1839 | Class Loss : 0.80518 Epoch : 7 | Iteration : 157 | Learning Rate : 6.0757e-07 | Total Loss : 558.6267 | Box Loss : 2.241 | Object Loss : 555.8766 | Class Loss : 0.50903 Epoch : 7 | Iteration : 158 | Learning Rate : 6.232e-07 | Total Loss : 545.6277 | Box Loss : 1.87 | Object Loss : 542.9493 | Class Loss : 0.8084 Epoch : 7 | Iteration : 159 | Learning Rate : 6.3913e-07 | Total Loss : 533.227 | Box Loss : 2.6881 | Object Loss : 530.0394 | Class Loss : 0.49956 Epoch : 7 | Iteration : 160 | Learning Rate : 6.5536e-07 | Total Loss : 510.7053 | Box Loss : 3.0112 | Object Loss : 507.2845 | Class Loss : 0.4097 Epoch : 7 | Iteration : 161 | Learning Rate : 6.719e-07 | Total Loss : 500.6588 | Box Loss : 1.882 | Object Loss : 498.0595 | Class Loss : 0.71724 Epoch : 8 | Iteration : 162 | Learning Rate : 6.8875e-07 | 
Total Loss : 482.7756 | Box Loss : 4.3151 | Object Loss : 477.9934 | Class Loss : 0.46708 Epoch : 8 | Iteration : 163 | Learning Rate : 7.0591e-07 | Total Loss : 468.5723 | Box Loss : 3.9805 | Object Loss : 463.912 | Class Loss : 0.67974 Epoch : 8 | Iteration : 164 | Learning Rate : 7.2339e-07 | Total Loss : 455.5461 | Box Loss : 2.7851 | Object Loss : 452.0892 | Class Loss : 0.67181 Epoch : 8 | Iteration : 165 | Learning Rate : 7.412e-07 | Total Loss : 440.152 | Box Loss : 1.8796 | Object Loss : 437.8831 | Class Loss : 0.3893 Epoch : 8 | Iteration : 166 | Learning Rate : 7.5933e-07 | Total Loss : 424.8647 | Box Loss : 0.95922 | Object Loss : 423.4963 | Class Loss : 0.40921 Epoch : 8 | Iteration : 167 | Learning Rate : 7.778e-07 | Total Loss : 413.4631 | Box Loss : 1.684 | Object Loss : 411.2394 | Class Loss : 0.53968 Epoch : 8 | Iteration : 168 | Learning Rate : 7.9659e-07 | Total Loss : 402.9919 | Box Loss : 3.5577 | Object Loss : 398.8732 | Class Loss : 0.56104 Epoch : 8 | Iteration : 169 | Learning Rate : 8.1573e-07 | Total Loss : 388.0714 | Box Loss : 2.3663 | Object Loss : 385.1064 | Class Loss : 0.59866 Epoch : 8 | Iteration : 170 | Learning Rate : 8.3521e-07 | Total Loss : 374.811 | Box Loss : 1.3496 | Object Loss : 373.0375 | Class Loss : 0.42392 Epoch : 8 | Iteration : 171 | Learning Rate : 8.5504e-07 | Total Loss : 365.7278 | Box Loss : 3.9867 | Object Loss : 361.0733 | Class Loss : 0.66776 Epoch : 8 | Iteration : 172 | Learning Rate : 8.7521e-07 | Total Loss : 356.4079 | Box Loss : 2.453 | Object Loss : 353.4033 | Class Loss : 0.55167 Epoch : 8 | Iteration : 173 | Learning Rate : 8.9575e-07 | Total Loss : 343.1337 | Box Loss : 2.5413 | Object Loss : 339.976 | Class Loss : 0.61644 Epoch : 8 | Iteration : 174 | Learning Rate : 9.1664e-07 | Total Loss : 334.107 | Box Loss : 2.193 | Object Loss : 331.0905 | Class Loss : 0.82353 Epoch : 8 | Iteration : 175 | Learning Rate : 9.3789e-07 | Total Loss : 328.5016 | Box Loss : 4.1864 | Object Loss : 323.8095 | 
Class Loss : 0.50573 Epoch : 8 | Iteration : 176 | Learning Rate : 9.5951e-07 | Total Loss : 311.0597 | Box Loss : 1.8204 | Object Loss : 308.9083 | Class Loss : 0.33105 Epoch : 8 | Iteration : 177 | Learning Rate : 9.8151e-07 | Total Loss : 307.1644 | Box Loss : 3.8359 | Object Loss : 302.7261 | Class Loss : 0.60237 Epoch : 8 | Iteration : 178 | Learning Rate : 1.0039e-06 | Total Loss : 295.8521 | Box Loss : 1.7389 | Object Loss : 293.512 | Class Loss : 0.60122 Epoch : 8 | Iteration : 179 | Learning Rate : 1.0266e-06 | Total Loss : 290.2374 | Box Loss : 2.9788 | Object Loss : 286.4553 | Class Loss : 0.80327 Epoch : 8 | Iteration : 180 | Learning Rate : 1.0498e-06 | Total Loss : 278.6443 | Box Loss : 1.6312 | Object Loss : 276.5125 | Class Loss : 0.50063 Epoch : 8 | Iteration : 181 | Learning Rate : 1.0733e-06 | Total Loss : 272.516 | Box Loss : 1.741 | Object Loss : 270.2593 | Class Loss : 0.51579 Epoch : 8 | Iteration : 182 | Learning Rate : 1.0972e-06 | Total Loss : 262.8264 | Box Loss : 2.4952 | Object Loss : 259.7936 | Class Loss : 0.53762 Epoch : 8 | Iteration : 183 | Learning Rate : 1.1215e-06 | Total Loss : 255.7794 | Box Loss : 1.3871 | Object Loss : 254.0543 | Class Loss : 0.33794 Epoch : 8 | Iteration : 184 | Learning Rate : 1.1462e-06 | Total Loss : 249.4258 | Box Loss : 4.6335 | Object Loss : 244.1304 | Class Loss : 0.6619 Epoch : 9 | Iteration : 185 | Learning Rate : 1.1714e-06 | Total Loss : 245.537 | Box Loss : 6.6451 | Object Loss : 238.3023 | Class Loss : 0.58964 Epoch : 9 | Iteration : 186 | Learning Rate : 1.1969e-06 | Total Loss : 238.5719 | Box Loss : 2.0229 | Object Loss : 236.0748 | Class Loss : 0.47414 Epoch : 9 | Iteration : 187 | Learning Rate : 1.2228e-06 | Total Loss : 224.8348 | Box Loss : 1.2722 | Object Loss : 223.0252 | Class Loss : 0.53734 Epoch : 9 | Iteration : 188 | Learning Rate : 1.2492e-06 | Total Loss : 221.2518 | Box Loss : 1.8325 | Object Loss : 218.8065 | Class Loss : 0.61271 Epoch : 9 | Iteration : 189 | Learning Rate : 
1.276e-06 | Total Loss : 215.7116 | Box Loss : 1.0737 | Object Loss : 214.1493 | Class Loss : 0.48865 Epoch : 9 | Iteration : 190 | Learning Rate : 1.3032e-06 | Total Loss : 208.2681 | Box Loss : 3.0667 | Object Loss : 204.7304 | Class Loss : 0.47088 Epoch : 9 | Iteration : 191 | Learning Rate : 1.3309e-06 | Total Loss : 204.9479 | Box Loss : 2.8997 | Object Loss : 201.2484 | Class Loss : 0.79992 Epoch : 9 | Iteration : 192 | Learning Rate : 1.359e-06 | Total Loss : 194.3042 | Box Loss : 1.6634 | Object Loss : 192.0485 | Class Loss : 0.59222 Epoch : 9 | Iteration : 193 | Learning Rate : 1.3875e-06 | Total Loss : 192.862 | Box Loss : 2.6475 | Object Loss : 189.624 | Class Loss : 0.59048 Epoch : 9 | Iteration : 194 | Learning Rate : 1.4165e-06 | Total Loss : 185.9765 | Box Loss : 1.4347 | Object Loss : 184.0554 | Class Loss : 0.48638 Epoch : 9 | Iteration : 195 | Learning Rate : 1.4459e-06 | Total Loss : 181.3524 | Box Loss : 2.5645 | Object Loss : 178.0999 | Class Loss : 0.68799 Epoch : 9 | Iteration : 196 | Learning Rate : 1.4758e-06 | Total Loss : 175.0837 | Box Loss : 1.1376 | Object Loss : 173.5423 | Class Loss : 0.40379 Epoch : 9 | Iteration : 197 | Learning Rate : 1.5061e-06 | Total Loss : 172.47 | Box Loss : 2.7052 | Object Loss : 169.3542 | Class Loss : 0.41053 Epoch : 9 | Iteration : 198 | Learning Rate : 1.537e-06 | Total Loss : 167.8906 | Box Loss : 2.1754 | Object Loss : 165.1792 | Class Loss : 0.53594 Epoch : 9 | Iteration : 199 | Learning Rate : 1.5682e-06 | Total Loss : 163.7262 | Box Loss : 3.1509 | Object Loss : 160.1136 | Class Loss : 0.46167 Epoch : 9 | Iteration : 200 | Learning Rate : 1.6e-06 | Total Loss : 157.0047 | Box Loss : 1.8896 | Object Loss : 154.6646 | Class Loss : 0.45044 Epoch : 9 | Iteration : 201 | Learning Rate : 1.6322e-06 | Total Loss : 155.4718 | Box Loss : 2.9187 | Object Loss : 151.9122 | Class Loss : 0.64093 Epoch : 9 | Iteration : 202 | Learning Rate : 1.665e-06 | Total Loss : 150.253 | Box Loss : 1.4516 | Object Loss : 
148.2571 | Class Loss : 0.54436 Epoch : 9 | Iteration : 203 | Learning Rate : 1.6982e-06 | Total Loss : 147.4376 | Box Loss : 1.4957 | Object Loss : 145.1635 | Class Loss : 0.77833 Epoch : 9 | Iteration : 204 | Learning Rate : 1.7319e-06 | Total Loss : 143.4381 | Box Loss : 1.4524 | Object Loss : 141.2332 | Class Loss : 0.75252 Epoch : 9 | Iteration : 205 | Learning Rate : 1.7661e-06 | Total Loss : 139.4781 | Box Loss : 2.1622 | Object Loss : 136.841 | Class Loss : 0.47489 Epoch : 9 | Iteration : 206 | Learning Rate : 1.8008e-06 | Total Loss : 135.4959 | Box Loss : 1.7917 | Object Loss : 133.076 | Class Loss : 0.62819 Epoch : 9 | Iteration : 207 | Learning Rate : 1.836e-06 | Total Loss : 151.6894 | Box Loss : 16.8452 | Object Loss : 133.559 | Class Loss : 1.2852 Epoch : 10 | Iteration : 208 | Learning Rate : 1.8718e-06 | Total Loss : 130.3298 | Box Loss : 3.2905 | Object Loss : 126.4972 | Class Loss : 0.54209 Epoch : 10 | Iteration : 209 | Learning Rate : 1.908e-06 | Total Loss : 123.955 | Box Loss : 0.64779 | Object Loss : 122.8501 | Class Loss : 0.45706 Epoch : 10 | Iteration : 210 | Learning Rate : 1.9448e-06 | Total Loss : 122.9245 | Box Loss : 2.1818 | Object Loss : 120.316 | Class Loss : 0.42669 Epoch : 10 | Iteration : 211 | Learning Rate : 1.9821e-06 | Total Loss : 120.8997 | Box Loss : 1.5668 | Object Loss : 118.8077 | Class Loss : 0.52523 Epoch : 10 | Iteration : 212 | Learning Rate : 2.02e-06 | Total Loss : 118.3063 | Box Loss : 2.2124 | Object Loss : 115.522 | Class Loss : 0.57182 Epoch : 10 | Iteration : 213 | Learning Rate : 2.0583e-06 | Total Loss : 113.0302 | Box Loss : 1.4393 | Object Loss : 111.2402 | Class Loss : 0.35071 Epoch : 10 | Iteration : 214 | Learning Rate : 2.0973e-06 | Total Loss : 111.292 | Box Loss : 1.5763 | Object Loss : 109.3507 | Class Loss : 0.36501 Epoch : 10 | Iteration : 215 | Learning Rate : 2.1368e-06 | Total Loss : 110.2187 | Box Loss : 3.697 | Object Loss : 105.9979 | Class Loss : 0.52385 Epoch : 10 | Iteration : 216 | 
Learning Rate : 2.1768e-06 | Total Loss : 107.9202 | Box Loss : 2.8203 | Object Loss : 104.4144 | Class Loss : 0.68554 Epoch : 10 | Iteration : 217 | Learning Rate : 2.2174e-06 | Total Loss : 105.8905 | Box Loss : 1.6596 | Object Loss : 103.7231 | Class Loss : 0.50786 Epoch : 10 | Iteration : 218 | Learning Rate : 2.2585e-06 | Total Loss : 103.5545 | Box Loss : 2.885 | Object Loss : 99.9706 | Class Loss : 0.6989 Epoch : 10 | Iteration : 219 | Learning Rate : 2.3003e-06 | Total Loss : 97.551 | Box Loss : 0.8408 | Object Loss : 96.1815 | Class Loss : 0.52869 Epoch : 10 | Iteration : 220 | Learning Rate : 2.3426e-06 | Total Loss : 96.6629 | Box Loss : 0.75809 | Object Loss : 95.4954 | Class Loss : 0.40937 Epoch : 10 | Iteration : 221 | Learning Rate : 2.3854e-06 | Total Loss : 96.9953 | Box Loss : 4.1857 | Object Loss : 92.1798 | Class Loss : 0.6298 Epoch : 10 | Iteration : 222 | Learning Rate : 2.4289e-06 | Total Loss : 93.509 | Box Loss : 2.001 | Object Loss : 90.8811 | Class Loss : 0.62687 Epoch : 10 | Iteration : 223 | Learning Rate : 2.473e-06 | Total Loss : 91.9346 | Box Loss : 1.7513 | Object Loss : 89.7099 | Class Loss : 0.47336 Epoch : 10 | Iteration : 224 | Learning Rate : 2.5176e-06 | Total Loss : 88.4763 | Box Loss : 2.1265 | Object Loss : 85.839 | Class Loss : 0.5108 Epoch : 10 | Iteration : 225 | Learning Rate : 2.5629e-06 | Total Loss : 85.629 | Box Loss : 0.98446 | Object Loss : 84.2665 | Class Loss : 0.3781 Epoch : 10 | Iteration : 226 | Learning Rate : 2.6088e-06 | Total Loss : 86.2569 | Box Loss : 2.1277 | Object Loss : 83.5847 | Class Loss : 0.54448 Epoch : 10 | Iteration : 227 | Learning Rate : 2.6552e-06 | Total Loss : 83.1799 | Box Loss : 1.347 | Object Loss : 81.3644 | Class Loss : 0.46843 Epoch : 10 | Iteration : 228 | Learning Rate : 2.7023e-06 | Total Loss : 81.1243 | Box Loss : 1.4545 | Object Loss : 78.8732 | Class Loss : 0.79659 Epoch : 10 | Iteration : 229 | Learning Rate : 2.7501e-06 | Total Loss : 81.2041 | Box Loss : 2.5103 | Object 
Loss : 78.0889 | Class Loss : 0.60492 Epoch : 10 | Iteration : 230 | Learning Rate : 2.7984e-06 | Total Loss : 75.1246 | Box Loss : 0.27143 | Object Loss : 74.5554 | Class Loss : 0.29782 Epoch : 11 | Iteration : 231 | Learning Rate : 2.8474e-06 | Total Loss : 79.0376 | Box Loss : 3.4272 | Object Loss : 75.0811 | Class Loss : 0.52923 Epoch : 11 | Iteration : 232 | Learning Rate : 2.897e-06 | Total Loss : 73.4143 | Box Loss : 0.50453 | Object Loss : 72.4067 | Class Loss : 0.50303 Epoch : 11 | Iteration : 233 | Learning Rate : 2.9473e-06 | Total Loss : 74.4846 | Box Loss : 1.8336 | Object Loss : 71.9536 | Class Loss : 0.69742 Epoch : 11 | Iteration : 234 | Learning Rate : 2.9982e-06 | Total Loss : 72.1833 | Box Loss : 1.3446 | Object Loss : 70.3828 | Class Loss : 0.45593 Epoch : 11 | Iteration : 235 | Learning Rate : 3.0498e-06 | Total Loss : 70.0606 | Box Loss : 2.1287 | Object Loss : 67.4821 | Class Loss : 0.44973 Epoch : 11 | Iteration : 236 | Learning Rate : 3.102e-06 | Total Loss : 69.5047 | Box Loss : 2.2682 | Object Loss : 66.7052 | Class Loss : 0.53131 Epoch : 11 | Iteration : 237 | Learning Rate : 3.155e-06 | Total Loss : 67.5093 | Box Loss : 1.4033 | Object Loss : 65.7894 | Class Loss : 0.31664 Epoch : 11 | Iteration : 238 | Learning Rate : 3.2085e-06 | Total Loss : 65.0801 | Box Loss : 1.0371 | Object Loss : 63.5287 | Class Loss : 0.51429 Epoch : 11 | Iteration : 239 | Learning Rate : 3.2628e-06 | Total Loss : 65.2496 | Box Loss : 1.5765 | Object Loss : 63.0764 | Class Loss : 0.59679 Epoch : 11 | Iteration : 240 | Learning Rate : 3.3178e-06 | Total Loss : 64.575 | Box Loss : 1.7407 | Object Loss : 62.1388 | Class Loss : 0.69552 Epoch : 11 | Iteration : 241 | Learning Rate : 3.3734e-06 | Total Loss : 64.5196 | Box Loss : 2.1123 | Object Loss : 61.7703 | Class Loss : 0.63696 Epoch : 11 | Iteration : 242 | Learning Rate : 3.4297e-06 | Total Loss : 61.7999 | Box Loss : 2.0481 | Object Loss : 59.1433 | Class Loss : 0.60848 Epoch : 11 | Iteration : 243 | Learning 
Rate : 3.4868e-06 | Total Loss : 59.071 | Box Loss : 1.6958 | Object Loss : 56.9139 | Class Loss : 0.46125 Epoch : 11 | Iteration : 244 | Learning Rate : 3.5445e-06 | Total Loss : 60.6312 | Box Loss : 2.3208 | Object Loss : 57.7375 | Class Loss : 0.57292 Epoch : 11 | Iteration : 245 | Learning Rate : 3.603e-06 | Total Loss : 56.0652 | Box Loss : 0.81476 | Object Loss : 54.8619 | Class Loss : 0.38854 Epoch : 11 | Iteration : 246 | Learning Rate : 3.6622e-06 | Total Loss : 55.6922 | Box Loss : 0.96189 | Object Loss : 54.3759 | Class Loss : 0.35436 Epoch : 11 | Iteration : 247 | Learning Rate : 3.7221e-06 | Total Loss : 54.9431 | Box Loss : 1.6455 | Object Loss : 52.815 | Class Loss : 0.48265 Epoch : 11 | Iteration : 248 | Learning Rate : 3.7827e-06 | Total Loss : 54.0564 | Box Loss : 1.4288 | Object Loss : 52.3493 | Class Loss : 0.27834 Epoch : 11 | Iteration : 249 | Learning Rate : 3.8441e-06 | Total Loss : 54.5192 | Box Loss : 1.6075 | Object Loss : 52.3839 | Class Loss : 0.52773 Epoch : 11 | Iteration : 250 | Learning Rate : 3.9063e-06 | Total Loss : 50.9148 | Box Loss : 0.64433 | Object Loss : 49.8191 | Class Loss : 0.45141 Epoch : 11 | Iteration : 251 | Learning Rate : 3.9691e-06 | Total Loss : 49.9751 | Box Loss : 1.0272 | Object Loss : 48.508 | Class Loss : 0.4399 Epoch : 11 | Iteration : 252 | Learning Rate : 4.0328e-06 | Total Loss : 51.7999 | Box Loss : 1.8292 | Object Loss : 49.2302 | Class Loss : 0.74052 Epoch : 11 | Iteration : 253 | Learning Rate : 4.0972e-06 | Total Loss : 53.6939 | Box Loss : 3.1018 | Object Loss : 49.5866 | Class Loss : 1.0054 Epoch : 12 | Iteration : 254 | Learning Rate : 4.1623e-06 | Total Loss : 48.7768 | Box Loss : 0.98468 | Object Loss : 47.3193 | Class Loss : 0.47286 Epoch : 12 | Iteration : 255 | Learning Rate : 4.2283e-06 | Total Loss : 49.4233 | Box Loss : 1.9577 | Object Loss : 46.9834 | Class Loss : 0.48223 Epoch : 12 | Iteration : 256 | Learning Rate : 4.295e-06 | Total Loss : 45.1711 | Box Loss : 0.52608 | Object Loss : 
44.2151 | Class Loss : 0.42999 Epoch : 12 | Iteration : 257 | Learning Rate : 4.3625e-06 | Total Loss : 47.404 | Box Loss : 1.4689 | Object Loss : 45.4146 | Class Loss : 0.52055 Epoch : 12 | Iteration : 258 | Learning Rate : 4.4308e-06 | Total Loss : 45.8223 | Box Loss : 1.727 | Object Loss : 43.6486 | Class Loss : 0.44666 Epoch : 12 | Iteration : 259 | Learning Rate : 4.4999e-06 | Total Loss : 44.0125 | Box Loss : 1.4235 | Object Loss : 42.2035 | Class Loss : 0.38551 Epoch : 12 | Iteration : 260 | Learning Rate : 4.5698e-06 | Total Loss : 43.6457 | Box Loss : 1.0148 | Object Loss : 42.264 | Class Loss : 0.36687 Epoch : 12 | Iteration : 261 | Learning Rate : 4.6405e-06 | Total Loss : 42.8426 | Box Loss : 1.0523 | Object Loss : 41.2832 | Class Loss : 0.50707 Epoch : 12 | Iteration : 262 | Learning Rate : 4.712e-06 | Total Loss : 42.7025 | Box Loss : 1.6951 | Object Loss : 40.5779 | Class Loss : 0.42952 Epoch : 12 | Iteration : 263 | Learning Rate : 4.7844e-06 | Total Loss : 41.9598 | Box Loss : 1.1832 | Object Loss : 40.2526 | Class Loss : 0.52401 Epoch : 12 | Iteration : 264 | Learning Rate : 4.8575e-06 | Total Loss : 42.3087 | Box Loss : 1.4446 | Object Loss : 40.2068 | Class Loss : 0.65728 Epoch : 12 | Iteration : 265 | Learning Rate : 4.9316e-06 | Total Loss : 42.6846 | Box Loss : 2.1949 | Object Loss : 39.9024 | Class Loss : 0.58729 Epoch : 12 | Iteration : 266 | Learning Rate : 5.0064e-06 | Total Loss : 40.3707 | Box Loss : 1.7943 | Object Loss : 38.0154 | Class Loss : 0.56103 Epoch : 12 | Iteration : 267 | Learning Rate : 5.0821e-06 | Total Loss : 38.4586 | Box Loss : 0.97707 | Object Loss : 37.0044 | Class Loss : 0.4772 Epoch : 12 | Iteration : 268 | Learning Rate : 5.1587e-06 | Total Loss : 38.25 | Box Loss : 0.83939 | Object Loss : 37.0145 | Class Loss : 0.39606 Epoch : 12 | Iteration : 269 | Learning Rate : 5.2361e-06 | Total Loss : 36.7243 | Box Loss : 0.36276 | Object Loss : 35.9324 | Class Loss : 0.42916 Epoch : 12 | Iteration : 270 | Learning Rate : 
5.3144e-06 | Total Loss : 36.9852 | Box Loss : 1.5355 | Object Loss : 35.0721 | Class Loss : 0.37763 Epoch : 12 | Iteration : 271 | Learning Rate : 5.3936e-06 | Total Loss : 37.2974 | Box Loss : 1.3626 | Object Loss : 35.5152 | Class Loss : 0.41965 Epoch : 12 | Iteration : 272 | Learning Rate : 5.4736e-06 | Total Loss : 35.8527 | Box Loss : 0.80992 | Object Loss : 34.4731 | Class Loss : 0.56959 Epoch : 12 | Iteration : 273 | Learning Rate : 5.5546e-06 | Total Loss : 37.5231 | Box Loss : 1.6429 | Object Loss : 35.2527 | Class Loss : 0.62757 Epoch : 12 | Iteration : 274 | Learning Rate : 5.6364e-06 | Total Loss : 35.3775 | Box Loss : 1.3198 | Object Loss : 33.6527 | Class Loss : 0.40501 Epoch : 12 | Iteration : 275 | Learning Rate : 5.7191e-06 | Total Loss : 34.1811 | Box Loss : 1.5352 | Object Loss : 32.298 | Class Loss : 0.34784 Epoch : 12 | Iteration : 276 | Learning Rate : 5.8028e-06 | Total Loss : 32.1206 | Box Loss : 0.072668 | Object Loss : 31.8403 | Class Loss : 0.20765 Epoch : 13 | Iteration : 277 | Learning Rate : 5.8873e-06 | Total Loss : 32.9848 | Box Loss : 0.83384 | Object Loss : 31.6276 | Class Loss : 0.52341 Epoch : 13 | Iteration : 278 | Learning Rate : 5.9728e-06 | Total Loss : 33.6007 | Box Loss : 1.1468 | Object Loss : 31.9988 | Class Loss : 0.45515 Epoch : 13 | Iteration : 279 | Learning Rate : 6.0592e-06 | Total Loss : 32.4009 | Box Loss : 1.6988 | Object Loss : 30.308 | Class Loss : 0.39409 Epoch : 13 | Iteration : 280 | Learning Rate : 6.1466e-06 | Total Loss : 32.788 | Box Loss : 1.4912 | Object Loss : 30.8687 | Class Loss : 0.4282 Epoch : 13 | Iteration : 281 | Learning Rate : 6.2348e-06 | Total Loss : 33.6716 | Box Loss : 1.5219 | Object Loss : 31.6115 | Class Loss : 0.53822 Epoch : 13 | Iteration : 282 | Learning Rate : 6.3241e-06 | Total Loss : 31.028 | Box Loss : 1.098 | Object Loss : 29.5333 | Class Loss : 0.3967 Epoch : 13 | Iteration : 283 | Learning Rate : 6.4142e-06 | Total Loss : 30.2471 | Box Loss : 0.81254 | Object Loss : 29.06 | 
Class Loss : 0.37458 Epoch : 13 | Iteration : 284 | Learning Rate : 6.5054e-06 | Total Loss : 30.5616 | Box Loss : 1.4204 | Object Loss : 28.7683 | Class Loss : 0.37296 Epoch : 13 | Iteration : 285 | Learning Rate : 6.5975e-06 | Total Loss : 29.9051 | Box Loss : 1.154 | Object Loss : 28.4039 | Class Loss : 0.34721 Epoch : 13 | Iteration : 286 | Learning Rate : 6.6906e-06 | Total Loss : 29.5435 | Box Loss : 1.1525 | Object Loss : 27.906 | Class Loss : 0.48489 Epoch : 13 | Iteration : 287 | Learning Rate : 6.7847e-06 | Total Loss : 28.0475 | Box Loss : 0.90402 | Object Loss : 26.8044 | Class Loss : 0.33906 Epoch : 13 | Iteration : 288 | Learning Rate : 6.8797e-06 | Total Loss : 30.9531 | Box Loss : 1.9343 | Object Loss : 28.4668 | Class Loss : 0.55198 Epoch : 13 | Iteration : 289 | Learning Rate : 6.9758e-06 | Total Loss : 29.5509 | Box Loss : 1.6881 | Object Loss : 27.4405 | Class Loss : 0.4222 Epoch : 13 | Iteration : 290 | Learning Rate : 7.0728e-06 | Total Loss : 27.9723 | Box Loss : 1.0452 | Object Loss : 26.4545 | Class Loss : 0.4726 Epoch : 13 | Iteration : 291 | Learning Rate : 7.1709e-06 | Total Loss : 27.0647 | Box Loss : 0.92261 | Object Loss : 25.8066 | Class Loss : 0.33554 Epoch : 13 | Iteration : 292 | Learning Rate : 7.2699e-06 | Total Loss : 26.2642 | Box Loss : 0.7257 | Object Loss : 25.2021 | Class Loss : 0.33634 Epoch : 13 | Iteration : 293 | Learning Rate : 7.3701e-06 | Total Loss : 26.0426 | Box Loss : 0.84798 | Object Loss : 24.8537 | Class Loss : 0.3409 Epoch : 13 | Iteration : 294 | Learning Rate : 7.4712e-06 | Total Loss : 25.1769 | Box Loss : 0.34435 | Object Loss : 24.4557 | Class Loss : 0.37682 Epoch : 13 | Iteration : 295 | Learning Rate : 7.5734e-06 | Total Loss : 25.8779 | Box Loss : 0.73083 | Object Loss : 24.5958 | Class Loss : 0.55121 Epoch : 13 | Iteration : 296 | Learning Rate : 7.6766e-06 | Total Loss : 25.0045 | Box Loss : 0.76468 | Object Loss : 23.8644 | Class Loss : 0.37537 Epoch : 13 | Iteration : 297 | Learning Rate : 
7.7808e-06 | Total Loss : 27.1041 | Box Loss : 1.8114 | Object Loss : 24.7396 | Class Loss : 0.55318 Epoch : 13 | Iteration : 298 | Learning Rate : 7.8862e-06 | Total Loss : 27.4797 | Box Loss : 1.6207 | Object Loss : 25.301 | Class Loss : 0.55801 Epoch : 13 | Iteration : 299 | Learning Rate : 7.9925e-06 | Total Loss : 26.4747 | Box Loss : 0.94607 | Object Loss : 25.2766 | Class Loss : 0.25199 Epoch : 14 | Iteration : 300 | Learning Rate : 8.1e-06 | Total Loss : 23.4735 | Box Loss : 0.65068 | Object Loss : 22.5667 | Class Loss : 0.2561 Epoch : 14 | Iteration : 301 | Learning Rate : 8.2085e-06 | Total Loss : 25.7068 | Box Loss : 1.0861 | Object Loss : 24.149 | Class Loss : 0.47174 Epoch : 14 | Iteration : 302 | Learning Rate : 8.3182e-06 | Total Loss : 23.1165 | Box Loss : 0.90589 | Object Loss : 21.9181 | Class Loss : 0.29255 Epoch : 14 | Iteration : 303 | Learning Rate : 8.4289e-06 | Total Loss : 22.5633 | Box Loss : 0.73095 | Object Loss : 21.4559 | Class Loss : 0.37652 Epoch : 14 | Iteration : 304 | Learning Rate : 8.5407e-06 | Total Loss : 24.2982 | Box Loss : 1.4886 | Object Loss : 22.3874 | Class Loss : 0.4222 Epoch : 14 | Iteration : 305 | Learning Rate : 8.6537e-06 | Total Loss : 22.2776 | Box Loss : 0.94406 | Object Loss : 20.998 | Class Loss : 0.33558 Epoch : 14 | Iteration : 306 | Learning Rate : 8.7677e-06 | Total Loss : 22.1669 | Box Loss : 1.0104 | Object Loss : 20.851 | Class Loss : 0.30545 Epoch : 14 | Iteration : 307 | Learning Rate : 8.8829e-06 | Total Loss : 23.0523 | Box Loss : 1.2233 | Object Loss : 21.1282 | Class Loss : 0.70076 Epoch : 14 | Iteration : 308 | Learning Rate : 8.9992e-06 | Total Loss : 22.5736 | Box Loss : 0.86523 | Object Loss : 21.3164 | Class Loss : 0.392 Epoch : 14 | Iteration : 309 | Learning Rate : 9.1166e-06 | Total Loss : 23.4343 | Box Loss : 1.4781 | Object Loss : 21.4404 | Class Loss : 0.51575 Epoch : 14 | Iteration : 310 | Learning Rate : 9.2352e-06 | Total Loss : 22.5019 | Box Loss : 0.90839 | Object Loss : 21.13 | 
Class Loss : 0.46357 Epoch : 14 | Iteration : 311 | Learning Rate : 9.355e-06 | Total Loss : 22.6707 | Box Loss : 1.0287 | Object Loss : 21.2311 | Class Loss : 0.41088 Epoch : 14 | Iteration : 312 | Learning Rate : 9.4759e-06 | Total Loss : 20.3037 | Box Loss : 0.7972 | Object Loss : 19.2077 | Class Loss : 0.29872 Epoch : 14 | Iteration : 313 | Learning Rate : 9.5979e-06 | Total Loss : 24.9406 | Box Loss : 2.2247 | Object Loss : 22.0054 | Class Loss : 0.7105 Epoch : 14 | Iteration : 314 | Learning Rate : 9.7212e-06 | Total Loss : 21.6491 | Box Loss : 1.3531 | Object Loss : 19.9613 | Class Loss : 0.33469 Epoch : 14 | Iteration : 315 | Learning Rate : 9.8456e-06 | Total Loss : 19.9248 | Box Loss : 0.77617 | Object Loss : 18.7892 | Class Loss : 0.35945 Epoch : 14 | Iteration : 316 | Learning Rate : 9.9712e-06 | Total Loss : 18.9517 | Box Loss : 0.53695 | Object Loss : 18.188 | Class Loss : 0.22678 Epoch : 14 | Iteration : 317 | Learning Rate : 1.0098e-05 | Total Loss : 22.1042 | Box Loss : 1.7879 | Object Loss : 19.8364 | Class Loss : 0.47995 Epoch : 14 | Iteration : 318 | Learning Rate : 1.0226e-05 | Total Loss : 18.7485 | Box Loss : 0.62062 | Object Loss : 17.7331 | Class Loss : 0.39474 Epoch : 14 | Iteration : 319 | Learning Rate : 1.0355e-05 | Total Loss : 20.3975 | Box Loss : 0.87357 | Object Loss : 19.0656 | Class Loss : 0.45827 Epoch : 14 | Iteration : 320 | Learning Rate : 1.0486e-05 | Total Loss : 19.8063 | Box Loss : 1.4676 | Object Loss : 17.9057 | Class Loss : 0.43309 Epoch : 14 | Iteration : 321 | Learning Rate : 1.0617e-05 | Total Loss : 19.4166 | Box Loss : 0.75374 | Object Loss : 18.2976 | Class Loss : 0.36532 Epoch : 14 | Iteration : 322 | Learning Rate : 1.075e-05 | Total Loss : 24.5432 | Box Loss : 1.4762 | Object Loss : 22.4664 | Class Loss : 0.60069 Epoch : 15 | Iteration : 323 | Learning Rate : 1.0885e-05 | Total Loss : 18.4276 | Box Loss : 0.98014 | Object Loss : 17.1027 | Class Loss : 0.34474 Epoch : 15 | Iteration : 324 | Learning Rate : 
1.102e-05 | Total Loss : 20.2738 | Box Loss : 1.2267 | Object Loss : 18.6558 | Class Loss : 0.39124 Epoch : 15 | Iteration : 325 | Learning Rate : 1.1157e-05 | Total Loss : 17.5261 | Box Loss : 0.7839 | Object Loss : 16.4204 | Class Loss : 0.32173 Epoch : 15 | Iteration : 326 | Learning Rate : 1.1295e-05 | Total Loss : 20.0305 | Box Loss : 1.0768 | Object Loss : 18.4377 | Class Loss : 0.51609 Epoch : 15 | Iteration : 327 | Learning Rate : 1.1434e-05 | Total Loss : 18.1276 | Box Loss : 1.084 | Object Loss : 16.5841 | Class Loss : 0.45952 Epoch : 15 | Iteration : 328 | Learning Rate : 1.1574e-05 | Total Loss : 16.9899 | Box Loss : 0.52159 | Object Loss : 16.25 | Class Loss : 0.21836 Epoch : 15 | Iteration : 329 | Learning Rate : 1.1716e-05 | Total Loss : 17.2788 | Box Loss : 0.79391 | Object Loss : 16.2221 | Class Loss : 0.26281 Epoch : 15 | Iteration : 330 | Learning Rate : 1.1859e-05 | Total Loss : 16.7677 | Box Loss : 0.59361 | Object Loss : 15.8916 | Class Loss : 0.28244 Epoch : 15 | Iteration : 331 | Learning Rate : 1.2004e-05 | Total Loss : 17.8247 | Box Loss : 0.71991 | Object Loss : 16.5339 | Class Loss : 0.57095 Epoch : 15 | Iteration : 332 | Learning Rate : 1.2149e-05 | Total Loss : 17.9062 | Box Loss : 0.95895 | Object Loss : 16.52 | Class Loss : 0.42724 Epoch : 15 | Iteration : 333 | Learning Rate : 1.2296e-05 | Total Loss : 17.3879 | Box Loss : 0.81765 | Object Loss : 16.1917 | Class Loss : 0.37862 Epoch : 15 | Iteration : 334 | Learning Rate : 1.2445e-05 | Total Loss : 15.6942 | Box Loss : 0.55038 | Object Loss : 14.9074 | Class Loss : 0.23641 Epoch : 15 | Iteration : 335 | Learning Rate : 1.2594e-05 | Total Loss : 15.7281 | Box Loss : 0.60656 | Object Loss : 14.9006 | Class Loss : 0.22098 Epoch : 15 | Iteration : 336 | Learning Rate : 1.2746e-05 | Total Loss : 16.8435 | Box Loss : 0.81891 | Object Loss : 15.6955 | Class Loss : 0.32907 Epoch : 15 | Iteration : 337 | Learning Rate : 1.2898e-05 | Total Loss : 20.7239 | Box Loss : 1.6438 | Object Loss : 
18.4175 | Class Loss : 0.66266 Epoch : 15 | Iteration : 338 | Learning Rate : 1.3052e-05 | Total Loss : 15.5238 | Box Loss : 0.49358 | Object Loss : 14.7686 | Class Loss : 0.2616 Epoch : 15 | Iteration : 339 | Learning Rate : 1.3207e-05 | Total Loss : 16.3684 | Box Loss : 0.87811 | Object Loss : 15.1227 | Class Loss : 0.36764 Epoch : 15 | Iteration : 340 | Learning Rate : 1.3363e-05 | Total Loss : 15.784 | Box Loss : 0.90518 | Object Loss : 14.5701 | Class Loss : 0.30873 Epoch : 15 | Iteration : 341 | Learning Rate : 1.3521e-05 | Total Loss : 18.1031 | Box Loss : 1.9281 | Object Loss : 15.805 | Class Loss : 0.36997 Epoch : 15 | Iteration : 342 | Learning Rate : 1.3681e-05 | Total Loss : 17.0571 | Box Loss : 1.5809 | Object Loss : 15.1142 | Class Loss : 0.36199 Epoch : 15 | Iteration : 343 | Learning Rate : 1.3841e-05 | Total Loss : 16.2661 | Box Loss : 0.96523 | Object Loss : 14.9404 | Class Loss : 0.36045 Epoch : 15 | Iteration : 344 | Learning Rate : 1.4003e-05 | Total Loss : 15.5492 | Box Loss : 0.8949 | Object Loss : 14.3923 | Class Loss : 0.26203 Epoch : 15 | Iteration : 345 | Learning Rate : 1.4167e-05 | Total Loss : 20.312 | Box Loss : 1.527 | Object Loss : 18.1565 | Class Loss : 0.62849 Epoch : 16 | Iteration : 346 | Learning Rate : 1.4332e-05 | Total Loss : 17.2633 | Box Loss : 1.634 | Object Loss : 15.2527 | Class Loss : 0.37669 Epoch : 16 | Iteration : 347 | Learning Rate : 1.4498e-05 | Total Loss : 14.8747 | Box Loss : 0.7295 | Object Loss : 13.7303 | Class Loss : 0.41487 Epoch : 16 | Iteration : 348 | Learning Rate : 1.4666e-05 | Total Loss : 14.2806 | Box Loss : 0.53956 | Object Loss : 13.4684 | Class Loss : 0.27264 Epoch : 16 | Iteration : 349 | Learning Rate : 1.4835e-05 | Total Loss : 16.0981 | Box Loss : 1.0712 | Object Loss : 14.523 | Class Loss : 0.50388 Epoch : 16 | Iteration : 350 | Learning Rate : 1.5006e-05 | Total Loss : 13.002 | Box Loss : 0.43276 | Object Loss : 12.3231 | Class Loss : 0.24609 Epoch : 16 | Iteration : 351 | Learning Rate : 
1.5178e-05 | Total Loss : 14.86 | Box Loss : 1.3465 | Object Loss : 13.2465 | Class Loss : 0.26695 Epoch : 16 | Iteration : 352 | Learning Rate : 1.5352e-05 | Total Loss : 13.5714 | Box Loss : 0.93982 | Object Loss : 12.4149 | Class Loss : 0.21666 Epoch : 16 | Iteration : 353 | Learning Rate : 1.5527e-05 | Total Loss : 14.0531 | Box Loss : 0.75712 | Object Loss : 12.9685 | Class Loss : 0.32748 Epoch : 16 | Iteration : 354 | Learning Rate : 1.5704e-05 | Total Loss : 12.627 | Box Loss : 0.40763 | Object Loss : 12.0022 | Class Loss : 0.21726 Epoch : 16 | Iteration : 355 | Learning Rate : 1.5882e-05 | Total Loss : 13.1038 | Box Loss : 0.32031 | Object Loss : 12.563 | Class Loss : 0.2205 Epoch : 16 | Iteration : 356 | Learning Rate : 1.6062e-05 | Total Loss : 15.1351 | Box Loss : 1.3416 | Object Loss : 13.4434 | Class Loss : 0.35006 Epoch : 16 | Iteration : 357 | Learning Rate : 1.6243e-05 | Total Loss : 13.5998 | Box Loss : 0.86842 | Object Loss : 12.5723 | Class Loss : 0.15913 Epoch : 16 | Iteration : 358 | Learning Rate : 1.6426e-05 | Total Loss : 17.9834 | Box Loss : 2.2705 | Object Loss : 15.0911 | Class Loss : 0.62189 Epoch : 16 | Iteration : 359 | Learning Rate : 1.661e-05 | Total Loss : 13.2651 | Box Loss : 0.77707 | Object Loss : 12.1661 | Class Loss : 0.32198 Epoch : 16 | Iteration : 360 | Learning Rate : 1.6796e-05 | Total Loss : 12.363 | Box Loss : 0.56516 | Object Loss : 11.603 | Class Loss : 0.1949 Epoch : 16 | Iteration : 361 | Learning Rate : 1.6984e-05 | Total Loss : 16.1314 | Box Loss : 1.521 | Object Loss : 14.0555 | Class Loss : 0.55489 Epoch : 16 | Iteration : 362 | Learning Rate : 1.7173e-05 | Total Loss : 12.5865 | Box Loss : 0.60568 | Object Loss : 11.7713 | Class Loss : 0.20958 Epoch : 16 | Iteration : 363 | Learning Rate : 1.7363e-05 | Total Loss : 15.2939 | Box Loss : 0.98848 | Object Loss : 13.8377 | Class Loss : 0.46774 Epoch : 16 | Iteration : 364 | Learning Rate : 1.7555e-05 | Total Loss : 14.0186 | Box Loss : 1.3655 | Object Loss : 
12.2908 | Class Loss : 0.3623 Epoch : 16 | Iteration : 365 | Learning Rate : 1.7749e-05 | Total Loss : 11.411 | Box Loss : 0.53581 | Object Loss : 10.6625 | Class Loss : 0.21268 Epoch : 16 | Iteration : 366 | Learning Rate : 1.7944e-05 | Total Loss : 16.9061 | Box Loss : 2.0613 | Object Loss : 14.1356 | Class Loss : 0.70913 Epoch : 16 | Iteration : 367 | Learning Rate : 1.8141e-05 | Total Loss : 12.6763 | Box Loss : 0.65758 | Object Loss : 11.7863 | Class Loss : 0.23245 Epoch : 16 | Iteration : 368 | Learning Rate : 1.834e-05 | Total Loss : 10.0711 | Box Loss : 0.1887 | Object Loss : 9.6617 | Class Loss : 0.22069 Epoch : 17 | Iteration : 369 | Learning Rate : 1.854e-05 | Total Loss : 12.3867 | Box Loss : 0.68877 | Object Loss : 11.4522 | Class Loss : 0.24571 Epoch : 17 | Iteration : 370 | Learning Rate : 1.8742e-05 | Total Loss : 15.6693 | Box Loss : 1.4483 | Object Loss : 13.7836 | Class Loss : 0.43749 Epoch : 17 | Iteration : 371 | Learning Rate : 1.8945e-05 | Total Loss : 11.2525 | Box Loss : 0.38393 | Object Loss : 10.5911 | Class Loss : 0.27744 Epoch : 17 | Iteration : 372 | Learning Rate : 1.915e-05 | Total Loss : 12.624 | Box Loss : 1.0968 | Object Loss : 11.2567 | Class Loss : 0.2705 Epoch : 17 | Iteration : 373 | Learning Rate : 1.9357e-05 | Total Loss : 14.1601 | Box Loss : 1.9424 | Object Loss : 11.6739 | Class Loss : 0.54376 Epoch : 17 | Iteration : 374 | Learning Rate : 1.9565e-05 | Total Loss : 13.8942 | Box Loss : 1.4335 | Object Loss : 12.0368 | Class Loss : 0.42392 Epoch : 17 | Iteration : 375 | Learning Rate : 1.9775e-05 | Total Loss : 11.7365 | Box Loss : 0.51757 | Object Loss : 10.9588 | Class Loss : 0.2601 Epoch : 17 | Iteration : 376 | Learning Rate : 1.9987e-05 | Total Loss : 13.3418 | Box Loss : 1.1792 | Object Loss : 11.7613 | Class Loss : 0.40132 Epoch : 17 | Iteration : 377 | Learning Rate : 2.0201e-05 | Total Loss : 12.5316 | Box Loss : 0.5521 | Object Loss : 11.662 | Class Loss : 0.31745 Epoch : 17 | Iteration : 378 | Learning Rate : 
2.0416e-05 | Total Loss : 11.6554 | Box Loss : 0.71192 | Object Loss : 10.7293 | Class Loss : 0.21421 Epoch : 17 | Iteration : 379 | Learning Rate : 2.0633e-05 | Total Loss : 10.5197 | Box Loss : 0.45873 | Object Loss : 9.8577 | Class Loss : 0.20335 Epoch : 17 | Iteration : 380 | Learning Rate : 2.0851e-05 | Total Loss : 10.8727 | Box Loss : 0.6144 | Object Loss : 10.0022 | Class Loss : 0.25605 Epoch : 17 | Iteration : 381 | Learning Rate : 2.1072e-05 | Total Loss : 12.827 | Box Loss : 1.5994 | Object Loss : 10.7265 | Class Loss : 0.50119 Epoch : 17 | Iteration : 382 | Learning Rate : 2.1294e-05 | Total Loss : 13.5798 | Box Loss : 1.0056 | Object Loss : 12.0783 | Class Loss : 0.49589 Epoch : 17 | Iteration : 383 | Learning Rate : 2.1518e-05 | Total Loss : 10.2467 | Box Loss : 0.38574 | Object Loss : 9.653 | Class Loss : 0.20797 Epoch : 17 | Iteration : 384 | Learning Rate : 2.1743e-05 | Total Loss : 9.5454 | Box Loss : 0.31727 | Object Loss : 9.0364 | Class Loss : 0.19168 Epoch : 17 | Iteration : 385 | Learning Rate : 2.1971e-05 | Total Loss : 10.7994 | Box Loss : 1.0524 | Object Loss : 9.5408 | Class Loss : 0.20626 Epoch : 17 | Iteration : 386 | Learning Rate : 2.22e-05 | Total Loss : 13.8463 | Box Loss : 1.1893 | Object Loss : 12.236 | Class Loss : 0.42102 Epoch : 17 | Iteration : 387 | Learning Rate : 2.2431e-05 | Total Loss : 9.5131 | Box Loss : 0.33435 | Object Loss : 8.9877 | Class Loss : 0.19104 Epoch : 17 | Iteration : 388 | Learning Rate : 2.2663e-05 | Total Loss : 11.7341 | Box Loss : 0.82945 | Object Loss : 10.6175 | Class Loss : 0.28709 Epoch : 17 | Iteration : 389 | Learning Rate : 2.2898e-05 | Total Loss : 12.5049 | Box Loss : 1.1493 | Object Loss : 10.9918 | Class Loss : 0.3639 Epoch : 17 | Iteration : 390 | Learning Rate : 2.3134e-05 | Total Loss : 10.0721 | Box Loss : 0.78613 | Object Loss : 9.0417 | Class Loss : 0.24434 Epoch : 17 | Iteration : 391 | Learning Rate : 2.3373e-05 | Total Loss : 12.3075 | Box Loss : 1.3411 | Object Loss : 10.7143 | 
Class Loss : 0.25203 Epoch : 18 | Iteration : 392 | Learning Rate : 2.3613e-05 | Total Loss : 9.7277 | Box Loss : 0.45647 | Object Loss : 8.966 | Class Loss : 0.30527 Epoch : 18 | Iteration : 393 | Learning Rate : 2.3854e-05 | Total Loss : 13.1086 | Box Loss : 1.666 | Object Loss : 11.1439 | Class Loss : 0.2987 Epoch : 18 | Iteration : 394 | Learning Rate : 2.4098e-05 | Total Loss : 12.9978 | Box Loss : 0.88292 | Object Loss : 11.6079 | Class Loss : 0.50705 Epoch : 18 | Iteration : 395 | Learning Rate : 2.4344e-05 | Total Loss : 10.7919 | Box Loss : 0.53646 | Object Loss : 9.9745 | Class Loss : 0.28095 Epoch : 18 | Iteration : 396 | Learning Rate : 2.4591e-05 | Total Loss : 9.7264 | Box Loss : 0.7848 | Object Loss : 8.6938 | Class Loss : 0.24779 Epoch : 18 | Iteration : 397 | Learning Rate : 2.4841e-05 | Total Loss : 10.4778 | Box Loss : 1.0495 | Object Loss : 9.2081 | Class Loss : 0.22026 Epoch : 18 | Iteration : 398 | Learning Rate : 2.5092e-05 | Total Loss : 9.169 | Box Loss : 0.57491 | Object Loss : 8.3962 | Class Loss : 0.1979 Epoch : 18 | Iteration : 399 | Learning Rate : 2.5345e-05 | Total Loss : 11.1855 | Box Loss : 1.0002 | Object Loss : 9.9185 | Class Loss : 0.26684 Epoch : 18 | Iteration : 400 | Learning Rate : 2.56e-05 | Total Loss : 10.7894 | Box Loss : 1.1357 | Object Loss : 9.1997 | Class Loss : 0.45402 Epoch : 18 | Iteration : 401 | Learning Rate : 2.5857e-05 | Total Loss : 9.2411 | Box Loss : 0.46813 | Object Loss : 8.5093 | Class Loss : 0.2637 Epoch : 18 | Iteration : 402 | Learning Rate : 2.6116e-05 | Total Loss : 10.2135 | Box Loss : 0.60753 | Object Loss : 9.2861 | Class Loss : 0.31993 Epoch : 18 | Iteration : 403 | Learning Rate : 2.6377e-05 | Total Loss : 11.366 | Box Loss : 0.78865 | Object Loss : 10.2008 | Class Loss : 0.37651 Epoch : 18 | Iteration : 404 | Learning Rate : 2.6639e-05 | Total Loss : 8.2499 | Box Loss : 0.39101 | Object Loss : 7.6322 | Class Loss : 0.22674 Epoch : 18 | Iteration : 405 | Learning ...

Evaluate Model

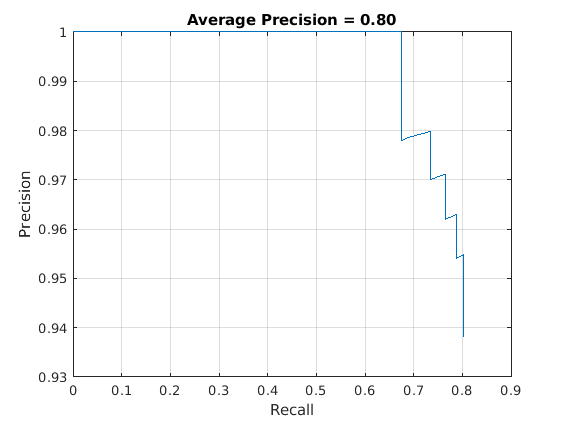

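Computer Vision Toolbox™ provides object detector evaluation functions to measure common metrics such as average precision (evaluateDetectionPrecision) and log-average miss rate (evaluateDetectionMissRate). This example uses the average precision metric. Average precision is a single number that combines the detector's ability to make correct classifications (precision) with its ability to find all relevant objects (recall).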

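    results = detect(yolov3Detector,testData,'MiniBatchSize',8);

    % Evaluate the object detector using the average precision metric.
    [ap,recall,precision] = evaluateDetectionPrecision(results,testData);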

The precision-recall (PR) curve shows how precise a detector is at varying levels of recall. Ideally, the precision is 1 at all recall levels.

    % Plot the precision-recall curve.
    figure
    plot(recall,precision)
    xlabel('Recall')
    ylabel('Precision')
    grid on
    title(sprintf('Average Precision = %.2f',ap))

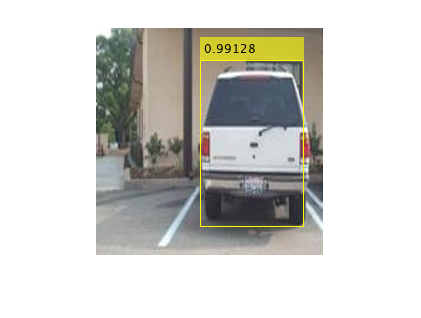
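As a rough illustration of what the precision-recall curve and the average precision summarize, the following sketch builds a curve from a handful of made-up detections and integrates it with trapz. The scores, the match flags, and the ground truth count are invented for illustration. The trapezoidal area is only an approximation, and evaluateDetectionPrecision may integrate the curve differently.

```matlab
% Toy detections sorted by descending confidence (hypothetical values).
scores  = [0.95 0.90 0.80 0.60 0.40];
isMatch = [1 1 0 1 0];      % 1 if the detection matches a ground truth box
numGroundTruth = 4;         % assumed number of labeled objects

% Cumulative true and false positives as the score threshold is lowered.
tp = cumsum(isMatch);
fp = cumsum(1 - isMatch);

toyPrecision = tp ./ (tp + fp);
toyRecall    = tp ./ numGroundTruth;

% Prepend the conventional starting point (recall 0, precision 1).
toyRecall    = [0 toyRecall];
toyPrecision = [1 toyPrecision];

% Approximate the area under the curve with the trapezoidal rule.
toyAP = trapz(toyRecall,toyPrecision);
fprintf('Approximate average precision = %.4f\n',toyAP);
```

A detector that ranked every correct detection ahead of every false one would keep the precision at 1 until all objects were found, which is the ideal case described above.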

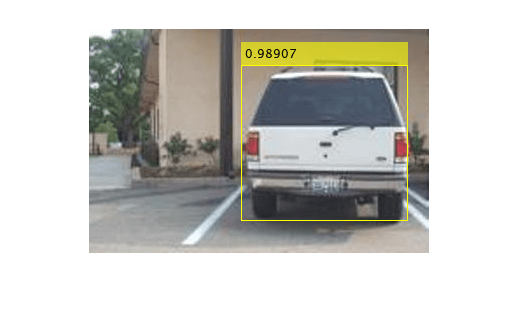

Detect Objects Using YOLO v3

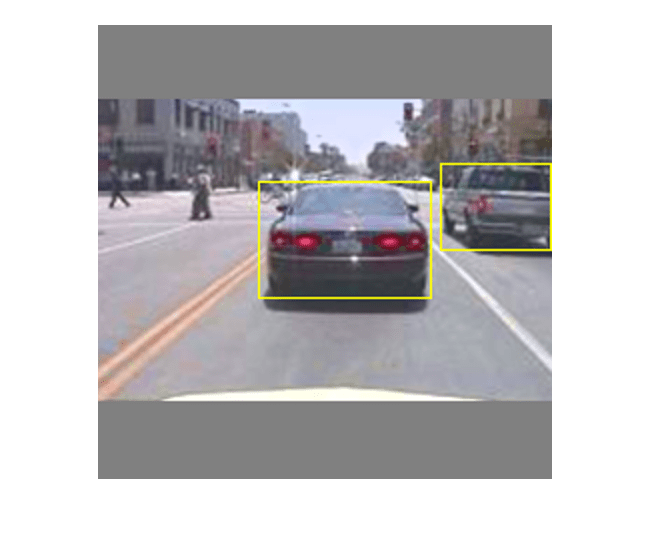

Use the detector for object detection.

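    % Read the datastore.
    data = read(testData);

    % Get the image.
    I = data{1};

    [bboxes,scores,labels] = detect(yolov3Detector,I);

    % Display the detections on the image.
    I = insertObjectAnnotation(I,'rectangle',bboxes,scores);

    figure
    imshow(I)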
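If only the more confident detections should be displayed, the outputs can be filtered by score before annotation. The sketch below uses made-up boxes and scores in place of real detector output, and the 0.5 cutoff is an assumed value, not one taken from this example. The detect function may also accept a threshold option directly; check its documentation before relying on that.

```matlab
% Hypothetical detector outputs: [x y width height] boxes and confidence scores.
toyBoxes  = [ 30  40 100  60;
             200 120  80  50;
              10  15  20  20];
toyScores = [0.92; 0.35; 0.71];

% Keep only detections at or above an assumed confidence cutoff.
minScore = 0.5;
keep = toyScores >= minScore;
keptBoxes  = toyBoxes(keep,:);
keptScores = toyScores(keep);

fprintf('Kept %d of %d detections\n',nnz(keep),numel(keep));
```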

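Supporting Functions

Model Gradients Function

The function modelGradients takes as input arguments the yolov3ObjectDetector object, a mini-batch of input data XTrain with the corresponding ground truth boxes YTrain, and the specified penalty threshold. It returns the gradients of the loss with respect to the learnable parameters in yolov3ObjectDetector, the corresponding mini-batch loss information, and the state of the current batch.

The model gradients function computes the total loss and the gradients by performing these operations.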

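Generate predictions from the input batch of images by using the forward method.

Collect the predictions on the CPU for postprocessing.

Convert the predictions from YOLO v3 grid cell coordinates to bounding box coordinates by using the anchor boxes of the yolov3ObjectDetector, which allows easy comparison with the ground truth data.

Generate targets for the loss computation by using the converted predictions and the ground truth data. These targets are generated for bounding box positions (x, y, width, height), object confidence, and class probabilities. See the supporting function generateTargets.

Calculate the mean squared error of the predicted bounding box coordinates with respect to the target boxes. See the supporting function bboxOffsetLoss.

Determine the binary cross-entropy of the predicted object confidence score with respect to the target object confidence score. See the supporting function objectnessLoss.

Determine the binary cross-entropy of the predicted object class with respect to the target. See the supporting function classConfidenceLoss.

Compute the total loss as the sum of all losses.

Compute the gradients of the total loss with respect to the learnables.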

    function [gradients,state,info] = modelGradients(detector,XTrain,YTrain,penaltyThreshold)
    inputImageSize = size(XTrain,1:2);

    % Gather the ground truths on the CPU for postprocessing.
    YTrain = gather(extractdata(YTrain));

    % Extract the predictions from the detector.
    [gatheredPredictions,YPredCell,state] = forward(detector,XTrain);

    % Generate targets for the predictions from the ground truth data.
    [boxTarget,objectnessTarget,classTarget,objectMaskTarget,boxErrorScale] = generateTargets(gatheredPredictions,...
        YTrain,inputImageSize,detector.AnchorBoxes,penaltyThreshold);

    % Compute the loss.
    boxLoss = bboxOffsetLoss(YPredCell(:,[2 3 7 8]),boxTarget,objectMaskTarget,boxErrorScale);
    objLoss = objectnessLoss(YPredCell(:,1),objectnessTarget,objectMaskTarget);
    clsLoss = classConfidenceLoss(YPredCell(:,6),classTarget,objectMaskTarget);
    totalLoss = boxLoss + objLoss + clsLoss;

    info.boxLoss = boxLoss;
    info.objLoss = objLoss;
    info.clsLoss = clsLoss;
    info.totalLoss = totalLoss;

    % Compute the gradients of the loss with respect to the learnables.
    gradients = dlgradient(totalLoss,detector.Learnables);
    end

    function boxLoss = bboxOffsetLoss(boxPredCell,boxDeltaTarget,boxMaskTarget,boxErrorScaleTarget)
    % Mean squared error for bounding box position.
    lossX = sum(cellfun(@(a,b,c,d) mse(a.*c.*d,b.*c.*d),boxPredCell(:,1),boxDeltaTarget(:,1),boxMaskTarget(:,1),boxErrorScaleTarget));
    lossY = sum(cellfun(@(a,b,c,d) mse(a.*c.*d,b.*c.*d),boxPredCell(:,2),boxDeltaTarget(:,2),boxMaskTarget(:,1),boxErrorScaleTarget));
    lossW = sum(cellfun(@(a,b,c,d) mse(a.*c.*d,b.*c.*d),boxPredCell(:,3),boxDeltaTarget(:,3),boxMaskTarget(:,1),boxErrorScaleTarget));
    lossH = sum(cellfun(@(a,b,c,d) mse(a.*c.*d,b.*c.*d),boxPredCell(:,4),boxDeltaTarget(:,4),boxMaskTarget(:,1),boxErrorScaleTarget));
    boxLoss = lossX + lossY + lossW + lossH;
    end

    function objLoss = objectnessLoss(objectnessPredCell,objectnessDeltaTarget,boxMaskTarget)
    % Binary cross-entropy loss for the objectness score.
    objLoss = sum(cellfun(@(a,b,c) crossentropy(a.*c,b.*c,'TargetCategories','independent'),objectnessPredCell,objectnessDeltaTarget,boxMaskTarget(:,2)));
    end

    function clsLoss = classConfidenceLoss(classPredCell,classTarget,boxMaskTarget)
    % Binary cross-entropy loss for the class confidence score.
    clsLoss = sum(cellfun(@(a,b,c) crossentropy(a.*c,b.*c,'TargetCategories','independent'),classPredCell,classTarget,boxMaskTarget(:,3)));
    end
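To make the masking in these loss terms concrete, here is a minimal sketch on plain numeric arrays. All values are made up, and the plain mean used here is an assumption for illustration: the mse and crossentropy functions applied to dlarray data in the example may normalize differently, so the numbers are not expected to match those functions.

```matlab
% Toy predictions and targets for one detection head (hypothetical values).
pred   = [0.2 0.8; 0.6 0.1];
target = [0.0 1.0; 1.0 0.0];
mask   = [0 1; 1 0];        % 1 where a ground truth object is assigned
scale  = [1 2; 1.5 1];      % per-cell box error weight

% Masked, scaled squared error, mirroring the pattern mse(a.*c.*d,b.*c.*d).
maskedDiff = pred.*mask.*scale - target.*mask.*scale;
boxTerm = mean(maskedDiff(:).^2);

% Masked binary cross-entropy, averaged over all cells.
p = pred.*mask;
t = target.*mask;
epsVal = 1e-7;              % guards against log(0)
p = min(max(p,epsVal),1-epsVal);
bceTerm = -mean(t(:).*log(p(:)) + (1-t(:)).*log(1-p(:)));

% The total is the plain sum of the individual terms.
toyTotal = boxTerm + bceTerm;
fprintf('box term = %.4f, BCE term = %.4f, total = %.4f\n',boxTerm,bceTerm,toyTotal);
```

Cells where the mask is zero contribute essentially nothing to either term, so only grid cells assigned to a ground truth object drive the result in this sketch.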

Augmentation and Data Processing Functions

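    function data = augmentData(A)
    % Apply random horizontal flipping and random X/Y scaling. Boxes that get
    % scaled outside the bounds are clipped if the overlap is above 0.25. Also,
    % jitter the image color.

    data = cell(size(A));
    for ii = 1:size(A,1)
        I = A{ii,1};
        bboxes = A{ii,2};
        labels = A{ii,3};
        sz = size(I);

        if numel(sz) == 3 && sz(3) == 3
            I = jitterColorHSV(I,...
                'Contrast',0.0,...
                'Hue',0.1,...
                'Saturation',0.2,...
                'Brightness',0.2);
        end

        % Randomly flip the image.
        tform = randomAffine2d('XReflection',true,'Scale',[1 1.1]);
        rout = affineOutputView(sz,tform,'BoundsStyle','centerOutput');
        I = imwarp(I,tform,'OutputView',rout);

        % Apply the same transform to the boxes.
        [bboxes,indices] = bboxwarp(bboxes,tform,rout,'OverlapThreshold',0.25);
        labels = labels(indices);

        % Return the original data only when all boxes are removed by warping.
        if isempty(indices)
            data(ii,:) = A(ii,:);
        else
            data(ii,:) = {I,bboxes,labels};
        end
    end
    end

    function data = preprocessData(data,targetSize)
    % Resize the images and scale the pixels to between 0 and 1. Also scale the
    % corresponding bounding boxes.

    for ii = 1:size(data,1)
        I = data{ii,1};
        imgSize = size(I);

        % Convert an input image with a single channel to 3 channels.
        if numel(imgSize) < 3
            I = repmat(I,1,1,3);
        end
        bboxes = data{ii,2};

        I = im2single(imresize(I,targetSize(1:2)));
        scale = targetSize(1:2)./imgSize(1:2);
        bboxes = bboxresize(bboxes,scale);

        data(ii,1:2) = {I,bboxes};
    end
    end

    function [XTrain,YTrain] = createBatchData(data,groundTruthBoxes,groundTruthClasses,classNames)
    % Return images combined along the batch dimension in XTrain and
    % normalized bounding boxes concatenated with class IDs in YTrain.

    % Concatenate images along the batch dimension.
    XTrain = cat(4,data{:,1});

    % Get class IDs from the class names.
    classNames = repmat({categorical(classNames')},size(groundTruthClasses));
    [~,classIndices] = cellfun(@(a,b) ismember(a,b),groundTruthClasses,classNames,'UniformOutput',false);

    % Append the label indexes and training image size to the scaled bounding boxes
    % and create a single cell array of responses.
    combinedResponses = cellfun(@(bbox,classid) [bbox,classid],groundTruthBoxes,classIndices,'UniformOutput',false);
    len = max(cellfun(@(x) size(x,1),combinedResponses));
    paddedBBoxes = cellfun(@(v) padarray(v,[len-size(v,1),0],0,'post'),combinedResponses,'UniformOutput',false);
    YTrain = cat(4,paddedBBoxes{:,1});
    end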
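The zero-padding step at the end of createBatchData can be sketched without any toolbox functions. The example below uses invented box data and replaces padarray with plain vertical concatenation, which is an assumed equivalent for this 'post' padding case and not the code the example itself uses.

```matlab
% Hypothetical per-image responses: rows are [x y width height classID].
responses = {[10 20 30 40 1; 50 60 20 10 1]; [5 5 15 25 1]};

% Pad every image's rows with zeros up to the largest box count.
maxBoxes = max(cellfun(@(x) size(x,1),responses));
padded = cellfun(@(v) [v; zeros(maxBoxes-size(v,1),size(v,2))], ...
    responses,'UniformOutput',false);

% Stack along the fourth (batch) dimension: maxBoxes-by-5-by-1-by-numImages.
toyYTrain = cat(4,padded{:});
disp(size(toyYTrain))
```

Padding is needed because images carry different numbers of boxes, while concatenation along the batch dimension requires every slice to have the same size.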

Learning Rate Schedule Function

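    function currentLR = piecewiseLearningRateWithWarmup(iteration,epoch,learningRate,warmupPeriod,numEpochs)
    % The piecewiseLearningRateWithWarmup function computes the current
    % learning rate based on the iteration number.
    persistent warmUpEpoch;

    if iteration <= warmupPeriod
        % Increase the learning rate over the iterations of the warmup period.
        currentLR = learningRate * ((iteration/warmupPeriod)^4);
        warmUpEpoch = epoch;

    elseif iteration >= warmupPeriod && epoch < warmUpEpoch+floor(0.6*(numEpochs-warmUpEpoch))
        % After the warmup period, keep the learning rate constant while fewer
        % than 60 percent of the remaining epochs have elapsed.
        currentLR = learningRate;

    elseif epoch >= warmUpEpoch+floor(0.6*(numEpochs-warmUpEpoch)) && epoch < warmUpEpoch+floor(0.9*(numEpochs-warmUpEpoch))
        % Between 60 and 90 percent of the remaining epochs, multiply the
        % learning rate by 0.1.
        currentLR = learningRate * 0.1;

    else
        % Beyond 90 percent of the remaining epochs, multiply the learning
        % rate by 0.01.
        currentLR = learningRate * 0.01;
    end
    end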
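The warmup branch can be checked by hand against the training log. The sketch below assumes learningRate = 0.001 and warmupPeriod = 1000; these settings are not shown in this section, but they are consistent with the learning rates printed in the log. The helper warmupLR is a hypothetical name used only for this illustration.

```matlab
% Assumed training settings (not shown in this section); they are consistent
% with the learning rates printed in the training log.
learningRate = 0.001;
warmupPeriod = 1000;

% Warmup phase: the rate grows with the fourth power of the iteration fraction.
warmupLR = @(iteration) learningRate * (iteration/warmupPeriod)^4;

fprintf('Iteration 379: %.4e\n',warmupLR(379));
fprintf('Iteration 400: %.4e\n',warmupLR(400));
```

Under these assumptions the values agree with the logged rates of 2.0633e-05 at iteration 379 and 2.56e-05 at iteration 400. The fourth-power ramp keeps the rate very small for most of the warmup period and raises it steeply only near the end.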

Utility Functions

    function [lossPlotter,learningRatePlotter] = configureTrainingProgressPlotter(f)
    % Create the subplots to display the loss and learning rate.
    figure(f);
    clf
    subplot(2,1,1);
    ylabel('Learning Rate');
    xlabel('Iteration');
    learningRatePlotter = animatedline;
    subplot(2,1,2);
    ylabel('Total Loss');
    xlabel('Iteration');
    lossPlotter = animatedline;
    end

    function displayLossInfo(epoch,iteration,currentLR,lossInfo)
    % Display the loss information for each iteration.
    disp("Epoch : " + epoch + " | Iteration : " + iteration + " | Learning Rate : " + currentLR + ...
        " | Total Loss : " + double(gather(extractdata(lossInfo.totalLoss))) + ...
        " | Box Loss : " + double(gather(extractdata(lossInfo.boxLoss))) + ...
        " | Object Loss : " + double(gather(extractdata(lossInfo.objLoss))) + ...
        " | Class Loss : " + double(gather(extractdata(lossInfo.clsLoss))));
    end

    function updatePlots(lossPlotter,learningRatePlotter,iteration,currentLR,totalLoss)
    % Update the loss and learning rate plots.
    addpoints(lossPlotter,iteration,double(extractdata(gather(totalLoss))));
    addpoints(learningRatePlotter,iteration,currentLR);
    drawnow
    end

    function detector = downloadPretrainedYOLOv3Detector()
    % Download a pretrained YOLO v3 detector.
    if ~exist('yolov3SqueezeNetVehicleExample_21aSPKG.mat','file')
        if ~exist('yolov3SqueezeNetVehicleExample_21aSPKG.zip','file')
            disp('Downloading pretrained detector...');
            pretrainedURL = 'https://ssd.mathworks.com/supportfiles/vision/data/yolov3SqueezeNetVehicleExample_21aSPKG.zip';
            websave('yolov3SqueezeNetVehicleExample_21aSPKG.zip',pretrainedURL);
        end
        unzip('yolov3SqueezeNetVehicleExample_21aSPKG.zip');
    end
    pretrained = load("yolov3SqueezeNetVehicleExample_21aSPKG.mat");
    detector = pretrained.detector;
    end

References

Redmon, Joseph, and Ali Farhadi. "YOLOv3: An Incremental Improvement." Preprint, submitted April 8, 2018. https://arxiv.org/abs/1804.02767.

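See Also

detect | evaluateDetectionMissRate | evaluateDetectionPrecision | forward | preprocess | yolov3ObjectDetector | analyzeNetwork (Deep Learning Toolbox)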