Relevance Vector Machine (RVM)

MATLAB code for Relevance Vector Machine

Version 2.1, 31-Aug-2021

Email: iqiukp@outlook.com

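Main features

- RVM models for binary classification (RVC) or regression (RVR)

- Multiple kernel functions (linear, gaussian, polynomial, sigmoid, laplacian)

- Hybrid kernel functions (K = w1×K1 + w2×K2 + ... + wn×Kn)

- Parameter optimization using Bayesian optimization, genetic algorithm (GA), and particle swarm optimization (PSO)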

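Notices

- This version of the code is not compatible with MATLAB releases earlier than R2016b.

- See the demos for detailed applications.

- This code is for reference only.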

How to use

01. Classification using RVM (RVC)

A demo of classification using RVM

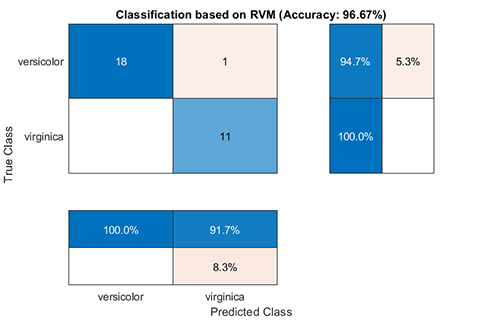

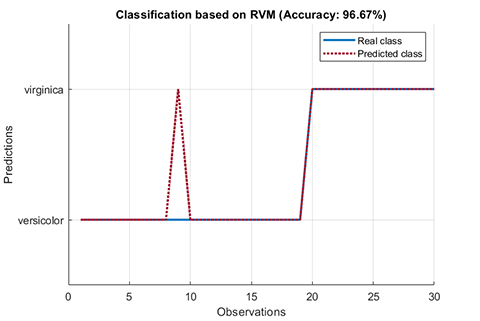

    clc
    clear all
    close all
    addpath(genpath(pwd))

    % use the fisheriris dataset
    load fisheriris
    inds = ~strcmp(species, 'setosa');
    data_ = meas(inds, 3:4);
    label_ = species(inds);
    cvIndices = crossvalind('HoldOut', length(data_), 0.3);
    trainData = data_(cvIndices, :);
    trainLabel = label_(cvIndices, :);
    testData = data_(~cvIndices, :);
    testLabel = label_(~cvIndices, :);

    % kernel function
    kernel = Kernel('type', 'gaussian', 'gamma', 0.2);

    % parameter
    parameter = struct('display', 'on', ...
                       'type', 'RVC', ...
                       'kernelFunc', kernel);
    rvm = BaseRVM(parameter);

    % RVM model training, testing, and visualization
    rvm.train(trainData, trainLabel);
    results = rvm.test(testData, testLabel);
    rvm.draw(results)

Results:

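    *** RVM model (classification) train finished ***
    running time          = 0.1604 seconds
    iterations            = 20
    number of samples     = 70
    number of RVs         = 2
    ratio of RVs          = 2.8571%
    accuracy              = 94.2857%

    *** RVM model (classification) test finished ***
    running time          = 0.0197 seconds
    number of samples     = 30
    accuracy              = 96.6667%

02. Regression using RVM (RVR)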

A demo of regression using RVM

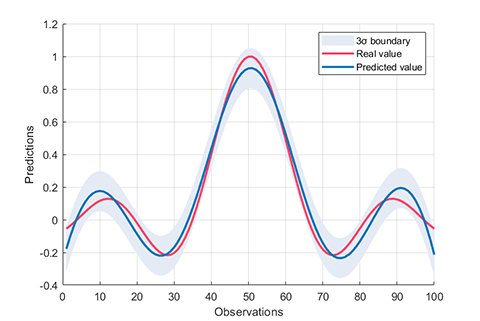

    clc
    clear all
    close all
    addpath(genpath(pwd))

    % sinc function
    load sinc_data
    trainData = x;
    trainLabel = y;
    testData = xt;
    testLabel = yt;

    % kernel function
    kernel = Kernel('type', 'gaussian', 'gamma', 0.1);

    % parameter
    parameter = struct('display', 'on', ...
                       'type', 'RVR', ...
                       'kernelFunc', kernel);
    rvm = BaseRVM(parameter);

    % RVM model training, testing, and visualization
    rvm.train(trainData, trainLabel);
    results = rvm.test(testData, testLabel);
    rvm.draw(results)

Results:

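    *** RVM model (regression) train finished ***
    running time          = 0.1757 seconds
    iterations            = 76
    number of samples     = 100
    number of RVs         = 6
    ratio of RVs          = 6.0000%
    RMSE                  = 0.1260
    R2                    = 0.8821
    MAE                   = 0.0999

    *** RVM model (regression) test finished ***
    running time          = 0.0026 seconds
    number of samples     = 50
    RMSE                  = 0.1424
    R2                    = 0.8553
    MAE                   = 0.1106

03. Kernel functions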

A class named Kernel is defined to compute kernel function matrices.
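The five kernel formulas that the Kernel class documents for its 'type' option can also be written directly in base MATLAB. The following is a minimal sketch using anonymous functions, meant only to illustrate the formulas; it is not the Kernel class's own implementation, and the handle names (sqDist, linearK, polyK, gaussianK, sigmoidK, laplacianK) are hypothetical. It relies on implicit expansion, which is available from R2016b.

```matlab
% Sketch of the five documented kernel formulas in base MATLAB (R2016b+ for
% implicit expansion). Rows of X and Y are samples; each handle returns a
% size(X,1)-by-size(Y,1) kernel matrix. Handle names are hypothetical.

% Squared Euclidean distances ||x - y||^2 between every row of X and of Y
sqDist = @(X, Y) sum(X.^2, 2) + sum(Y.^2, 2)' - 2 * (X * Y');

% linear:     k(x,y) = x'*y
linearK    = @(X, Y) X * Y';
% polynomial: k(x,y) = (gamma*x'*y + c)^d
polyK      = @(X, Y, gamma, c, d) (gamma * (X * Y') + c) .^ d;
% gaussian:   k(x,y) = exp(-gamma*||x-y||^2)
gaussianK  = @(X, Y, gamma) exp(-gamma * sqDist(X, Y));
% sigmoid:    k(x,y) = tanh(gamma*x'*y + c)
sigmoidK   = @(X, Y, gamma, c) tanh(gamma * (X * Y') + c);
% laplacian:  k(x,y) = exp(-gamma*||x-y||); max(..., 0) removes tiny
% negative values caused by rounding before the square root is taken
laplacianK = @(X, Y, gamma) exp(-gamma * sqrt(max(sqDist(X, Y), 0)));

% Example: gaussian kernel matrix between 5 and 3 random 2-D samples
X = rand(5, 2);
Y = rand(3, 2);
K = gaussianK(X, Y, 2);   % 5-by-3 matrix
```

With gamma = 2 and samples drawn from the unit square, every entry of K lies in (0, 1], and the matrix has one row per sample of X and one column per sample of Y.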

    %{
        type   -

        linear      :  k(x,y) = x'*y
        polynomial  :  k(x,y) = (γ*x'*y+c)^d
        gaussian    :  k(x,y) = exp(-γ*||x-y||^2)
        sigmoid     :  k(x,y) = tanh(γ*x'*y+c)
        laplacian   :  k(x,y) = exp(-γ*||x-y||)

        degree -  d
        offset -  c
        gamma  -  γ
    %}
    kernel = Kernel('type', 'gaussian', 'gamma', value);
    kernel = Kernel('type', 'polynomial', 'degree', value);
    kernel = Kernel('type', 'linear');
    kernel = Kernel('type', 'sigmoid', 'gamma', value);
    kernel = Kernel('type', 'laplacian', 'gamma', value);

For example, to compute the kernel matrix between X and Y:

    X = rand(5, 2);
    Y = rand(3, 2);
    kernel = Kernel('type', 'gaussian', 'gamma', 2);
    kernelMatrix = kernel.computeMatrix(X, Y);

    >> kernelMatrix

    kernelMatrix =

        0.5684    0.5607    0.4007
        0.4651    0.8383    0.5091
        0.8392    0.7116    0.9834
        0.4731    0.8816    0.8052
        0.5034    0.9807    0.7274

04. Hybrid kernel

A demo of regression using RVM with a hybrid kernel (K = w1×K1 + w2×K2 + ... + wn×Kn)
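Numerically, the hybrid kernel in this formula is a weighted sum of the individual kernel matrices. The following base-MATLAB sketch is independent of the toolbox and only illustrates that sum. The handle names are hypothetical, and the polynomial gamma = 1 and offset = 1 are assumptions chosen for illustration, since this README does not state the Kernel class's default values.

```matlab
% Sketch of a hybrid kernel K = w1*K1 + w2*K2 + ... + wn*Kn in base MATLAB
% (R2016b+). Independent of the toolbox; handle names and the polynomial
% gamma/offset values are illustrative assumptions.
sqDist    = @(X, Y) sum(X.^2, 2) + sum(Y.^2, 2)' - 2 * (X * Y');
gaussianK = @(X, Y, gamma) exp(-gamma * sqDist(X, Y));
polyK     = @(X, Y, gamma, c, d) (gamma * (X * Y') + c) .^ d;

X = linspace(-3, 3, 6)';       % six 1-D samples, one per row

% Individual kernel matrices
K1 = gaussianK(X, X, 0.3);     % gaussian, gamma = 0.3 (as in the demo)
K2 = polyK(X, X, 1, 1, 2);     % polynomial, degree = 2; gamma = 1 and offset = 1 assumed
Ks = {K1, K2};
w = [0.5, 0.5];                % kernel weights (as in the demo)

% Weighted sum of the kernel matrices
K = zeros(size(Ks{1}));
for i = 1:numel(Ks)
    K = K + w(i) * Ks{i};
end
```

With non-negative weights, a sum of positive semidefinite kernel matrices is itself positive semidefinite, so the hybrid kernel is still a valid kernel.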

    clc
    clear all
    close all
    addpath(genpath(pwd))

    % sinc function
    load sinc_data
    trainData = x;
    trainLabel = y;
    testData = xt;
    testLabel = yt;

    % kernel function
    kernel_1 = Kernel('type', 'gaussian', 'gamma', 0.3);
    kernel_2 = Kernel('type', 'polynomial', 'degree', 2);
    kernelWeight = [0.5, 0.5];

    % parameter
    parameter = struct('display', 'on', ...
                       'type', 'RVR', ...
                       'kernelFunc', [kernel_1, kernel_2], ...
                       'kernelWeight', kernelWeight);
    rvm = BaseRVM(parameter);

    % RVM model training, testing, and visualization
    rvm.train(trainData, trainLabel);
    results = rvm.test(testData, testLabel);
    rvm.draw(results)

05. Parameter optimization for single-kernel RVM

A demo of an RVM model with parameter optimization

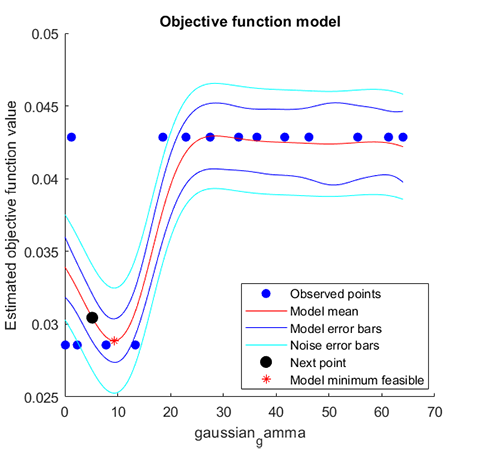

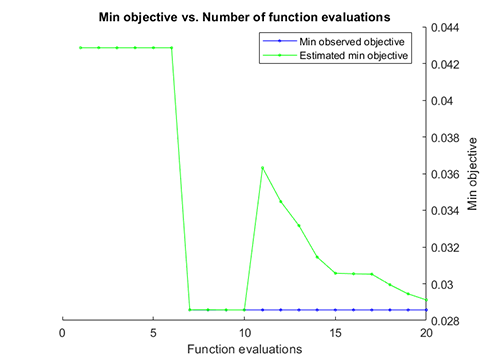

    clc
    clear all
    close all
    addpath(genpath(pwd))

    % use the fisheriris dataset
    load fisheriris
    inds = ~strcmp(species, 'setosa');
    data_ = meas(inds, 3:4);
    label_ = species(inds);
    cvIndices = crossvalind('HoldOut', length(data_), 0.3);
    trainData = data_(cvIndices, :);
    trainLabel = label_(cvIndices, :);
    testData = data_(~cvIndices, :);
    testLabel = label_(~cvIndices, :);

    % kernel function
    kernel = Kernel('type', 'gaussian', 'gamma', 5);

    % parameter optimization
    opt.method = 'bayes'; % bayes, ga, pso
    opt.display = 'on';
    opt.iteration = 20;

    % parameter
    parameter = struct('display', 'on', ...
                       'type', 'RVC', ...
                       'kernelFunc', kernel, ...
                       'optimization', opt);
    rvm = BaseRVM(parameter);

    % RVM model training, testing, and visualization
    rvm.train(trainData, trainLabel);
    results = rvm.test(trainData, trainLabel);
    rvm.draw(results)

Results:

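    *** RVM model (classification) train finished ***
    running time          = 13.3356 seconds
    iterations            = 88
    number of samples     = 70
    number of RVs         = 4
    ratio of RVs          = 5.7143%
    accuracy              = 97.1429%
    Optimized parameter table

        gaussian_gamma
        ______________

            7.8261

    *** RVM model (classification) test finished ***
    running time          = 0.0195 seconds
    number of samples     = 70
    accuracy              = 97.1429%

06. Parameter optimization for hybrid-kernel RVM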

A demo of a hybrid-kernel RVM model with parameter optimization

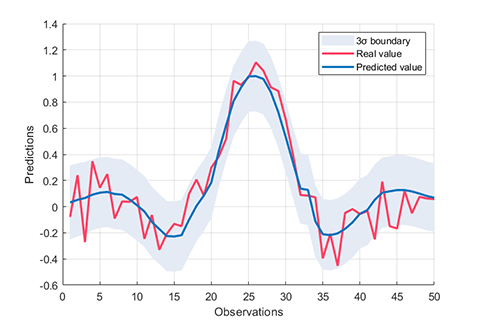

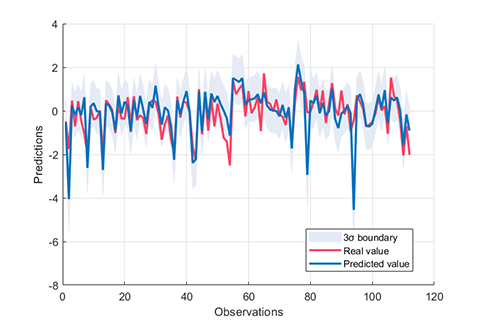

    %{
        A demo for a hybrid-kernel RVM model with parameter optimization
    %}
    clc
    clear all
    close all
    addpath(genpath(pwd))

    % data
    load UCI_data
    trainData = x;
    trainLabel = y;
    testData = xt;
    testLabel = yt;

    % kernel function
    kernel_1 = Kernel('type', 'gaussian', 'gamma', 0.5);
    kernel_2 = Kernel('type', 'polynomial', 'degree', 2);

    % parameter optimization
    opt.method = 'bayes'; % bayes, ga, pso
    opt.display = 'on';
    opt.iteration = 30;

    % parameter
    parameter = struct('display', 'on', ...
                       'type', 'RVR', ...
                       'kernelFunc', [kernel_1, kernel_2], ...
                       'optimization', opt);
    rvm = BaseRVM(parameter);

    % RVM model training, testing, and visualization
    rvm.train(trainData, trainLabel);
    results = rvm.test(testData, testLabel);
    rvm.draw(results)

Results:

    *** RVM model (regression) train finished ***
    running time          = 24.4042 seconds
    iterations            = 377
    number of samples     = 264
    number of RVs         = 22
    ratio of RVs          = 8.3333%
    RMSE                  = 0.4864
    R2                    = 0.7719
    MAE                   = 0.3736
    Optimized parameter table

      1×6 table

        gaussian_gamma    polynomial_gamma    polynomial_offset    polynomial_degree    gaussian_weight    polynomial_weight
        ______________    ________________    _________________    _________________    _______________    _________________

            22.315             13.595               44.83                  6               0.042058            0.95794

    *** RVM model (regression) test finished ***
    running time          = 0.0008 seconds
    number of samples     = 112
    RMSE                  = 0.7400
    R2                    = 0.6668
    MAE                   = 0.4867

07. Cross-validation

This code supports two cross-validation methods: 'KFolds' and 'HoldOut'.
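Conceptually, K-fold cross-validation partitions the samples into K disjoint folds and uses each fold once as the evaluation set, while holdout makes a single random train/test split. The base-MATLAB sketch below illustrates both index schemes; it is not the toolbox's internal code, and all variable names are hypothetical.

```matlab
% Sketch of K-fold and holdout index schemes in base MATLAB. Illustrative
% only: not the toolbox's internal code; variable names are hypothetical.
n = 100;   % number of samples (illustrative)
k = 5;     % number of folds, cf. 'KFolds', 5

% K-fold: shuffle the sample order, then deal fold labels 1..k cyclically,
% so fold sizes differ by at most one
foldId = zeros(n, 1);
foldId(randperm(n)) = mod(0:n-1, k) + 1;

for f = 1:k
    testIdx  = (foldId == f);   % fold f is held out for evaluation
    trainIdx = ~testIdx;        % the other k-1 folds are used for training
    % ... train on the trainIdx rows, evaluate on the testIdx rows, accumulate ...
end

% Holdout: one random split with a fraction `ratio` held out, cf. 'HoldOut', 0.3
ratio = 0.3;
perm = randperm(n);
nTest = round(ratio * n);
isTest = false(n, 1);
isTest(perm(1:nTest)) = true;   % the remaining samples (~isTest) form the training set
```

With n = 100 and a ratio of 0.3, the holdout split yields 70 training and 30 test samples, the same sizes reported in the classification demo.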

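For example, 5-fold cross-validation is specified as:

    parameter = struct('display', 'on', ...
                       'type', 'RVC', ...
                       'kernelFunc', kernel, ...
                       'KFolds', 5);

and holdout cross-validation with a ratio of 0.3 as: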

    parameter = struct('display', 'on', ...
                       'type', 'RVC', ...
                       'kernelFunc', kernel, ...
                       'HoldOut', 0.3);

08. Other options

    %% custom optimization option
    %{
        opt.method = 'bayes'; % bayes, ga, pso
        opt.display = 'on';
        opt.iteration = 20;
        opt.point = 10;

        % gaussian kernel function
        opt.gaussian.parameterName = {'gamma'};
        opt.gaussian.parameterType = {'real'};
        opt.gaussian.lowerBound = 2^-6;
        opt.gaussian.upperBound = 2^6;

        % laplacian kernel function
        opt.laplacian.parameterName = {'gamma'};
        opt.laplacian.parameterType = {'real'};
        opt.laplacian.lowerBound = 2^-6;
        opt.laplacian.upperBound = 2^6;

        % polynomial kernel function
        opt.polynomial.parameterName = {'gamma'; 'offset'; 'degree'};
        opt.polynomial.parameterType = {'real'; 'real'; 'integer'};
        opt.polynomial.lowerBound = [2^-6; 2^-6; 1];
        opt.polynomial.upperBound = [2^6; 2^6; 7];

        % sigmoid kernel function
        opt.sigmoid.parameterName = {'gamma'; 'offset'};
        opt.sigmoid.parameterType = {'real'; 'real'};
        opt.sigmoid.lowerBound = [2^-6; 2^-6];
        opt.sigmoid.upperBound = [2^6; 2^6];
    %}

    %% RVM model parameter
    %{
        'display'    :   'on', 'off'
        'type'       :   'RVR', 'RVC'
        'kernelFunc' :   kernel function
        'KFolds'     :   cross validation, for example, 5
        'HoldOut'    :   cross validation, for example, 0.3
        'freeBasis'  :   'on', 'off'
        'maxIter'    :   max iteration, for example, 1000
    %}

MATLAB Release Compatibility

Created with R2021a

Compatible with R2016b and later releases

Platform Compatibility

Windows macOS Linux

Tags

RvmModel

SB2_Release_200

To view or report issues in this GitHub add-on, visit the GitHub repository.
